On-Device AI Inference vs Cloud: Guide

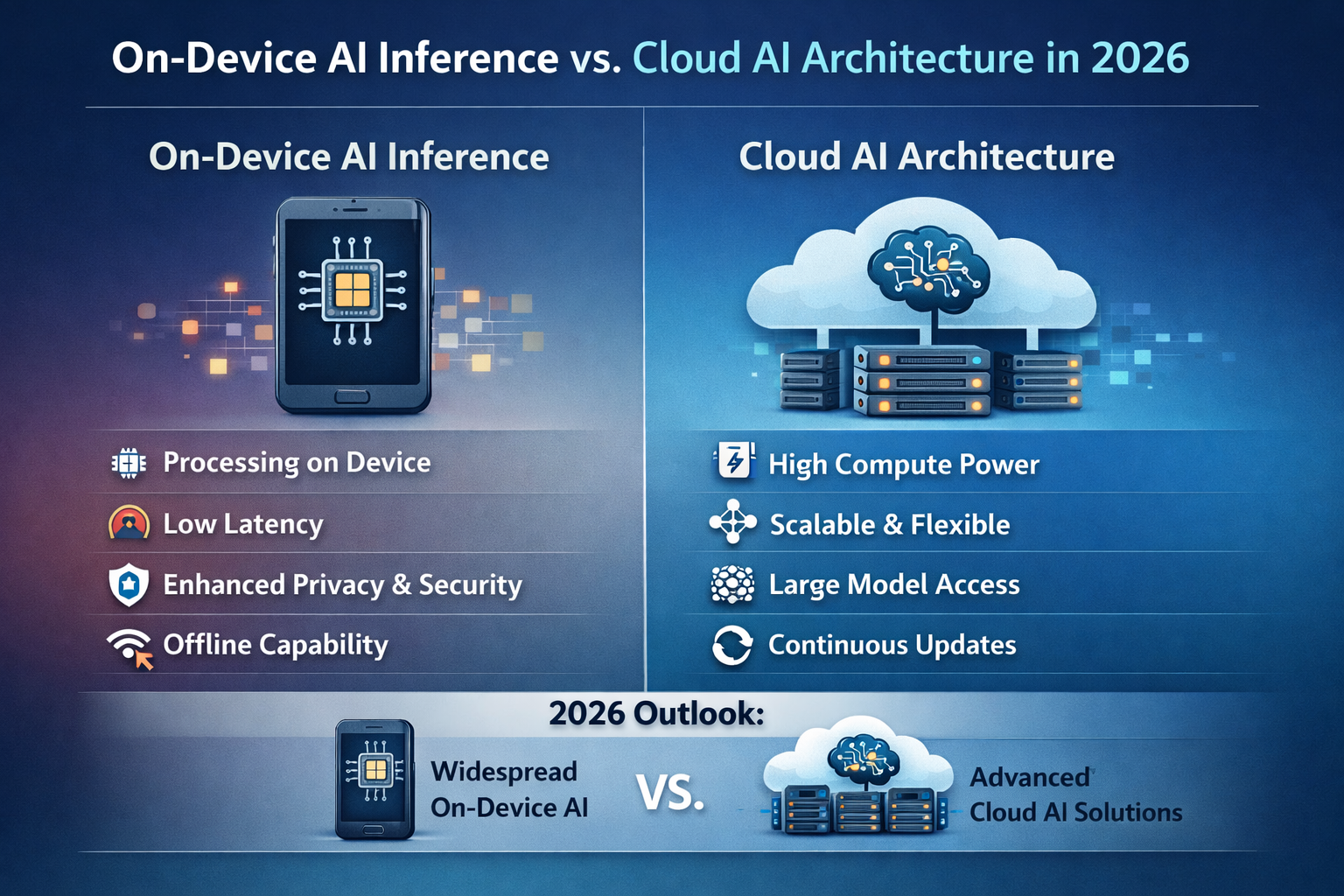

On-device AI inference means running trained AI models directly on user devices such as phones, AI PCs, gateways or cars instead of sending data to cloud servers for every prediction. Compared with cloud-only inference, it reduces latency and bandwidth use, improves privacy and data residency control, and can significantly lower recurring cloud costs for latency-sensitive AI applications.

Introduction

AI models keep getting larger, while cloud GPU bills, data-centre energy use and regulatory pressure are all rising in the US, UK, Germany and across the EU. At the same time, devices from iPhones to industrial gateways now ship with powerful neural processing units (NPUs), so it’s finally practical to move a big slice of AI inference out of the cloud and onto the edge.

Recent market estimates already value the global edge AI segment at around $25–30 billion in 2025, with forecasts above $110 billion by the early 2030s.

On-device AI inference means you deploy a trained model into an app, device or gateway so predictions happen locally, near where data is generated. Instead of round-tripping to a central cloud, you send fewer, richer events for retraining and monitoring.

In this guide, we’ll look at when to move AI inference to devices, how to choose hardware and tooling, and how to design a hybrid edge cloud AI architecture that actually fits real-world enterprises.

Mak It Solutions already helps organisations design compliant, performance-focused digital platforms across the USA, UK, Germany and the wider EU, from web development to mobile app development services.

What Is On-Device AI Inference?

Simple definition vs cloud-based AI inference

On-device AI inference is the process of running AI models directly on end-user or edge devices (phones, laptops, gateways, vehicles or sensors) so they can make predictions locally, without sending raw data back to a central cloud model each time.

In cloud-based AI inference, the heavy compute happens in a data centre; devices mainly stream input data and wait for the result. The key difference is where the compute and data processing happen: at the edge vs in the cloud.

Edge AI, on-device AI and AI inference at the edge

You’ll see several overlapping terms:

Edge AI / AI inference at the edge: models run on compute that sits close to where data is produced, such as industrial gateways, 5G base stations, in-store servers and connected vehicles.

On-device AI: models run on the end device itself, such as smartphones (e.g. Apple iPhone with the A18 Neural Engine), AI PCs, wearables and in-cabin automotive computers.

Edge AI vs cloud AI: “edge” focuses on local, low-latency compute; “cloud” focuses on centralised, elastic compute for heavy training and large shared models.

Typical edge locations include:

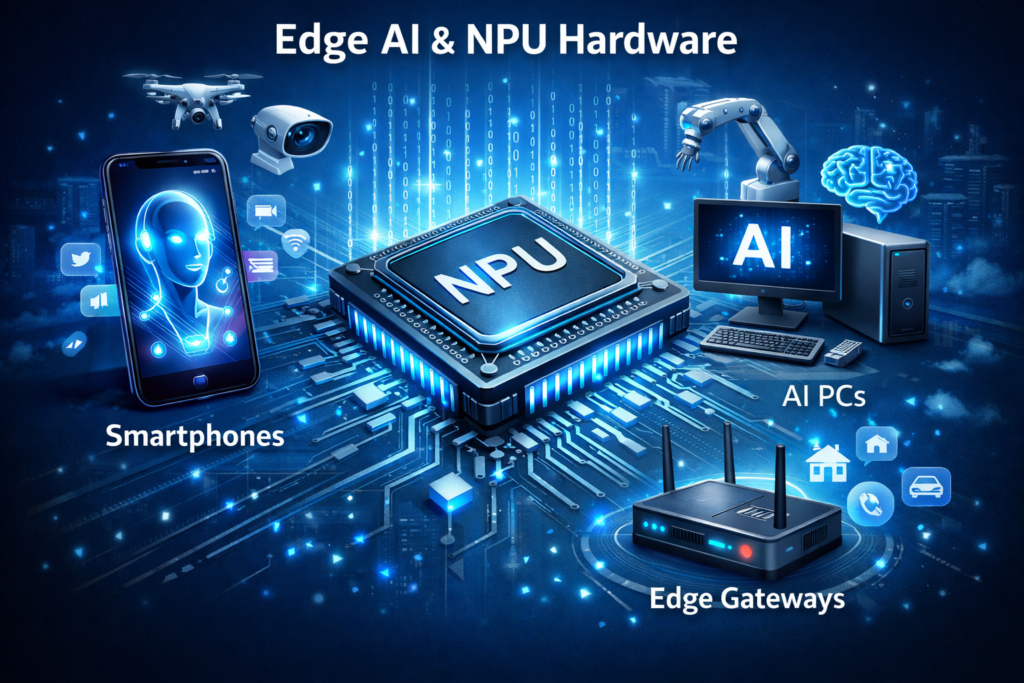

Phones and tablets: e.g. Qualcomm Snapdragon NPUs in Android flagships and the Apple Neural Engine in iPhones.

AI PCs and workstations: x86 or Arm CPUs with integrated NPUs for local copilots and on-device generative AI assistants.

Industrial gateways and smart sensors: rugged boxes from vendors such as Siemens or Bosch, or STMicroelectronics-based microcontrollers in factories and smart grids.

Connected vehicles and EV fleets: embedded NVIDIA or Arm-based compute in cars, trucks and charging infrastructure.

Why 2026 is the tipping point for on-device AI

By 2026, several trends converge:

AI PCs and smartphones ship with serious NPUs: Apple’s A18 Neural Engine and Qualcomm’s latest Hexagon NPUs deliver tens of TOPS of dedicated AI compute in consumer hardware.

Industrial edge accelerators mature: rugged GPUs and NPUs from NVIDIA and others power vision and control loops on the factory floor.

Data volumes grow and regulations tighten: GDPR/DSGVO, UK-GDPR and sector rules like HIPAA and PCI DSS push you toward data minimisation and strict data residency.

Data-centre energy costs bite: data centres already account for roughly 1–2% of global electricity consumption, with AI expected to push that share up significantly by 2030.

Put together, 2026 is the year many teams stop asking “if we should move inference on-device” and start asking “which workloads go edge vs cloud.”

Why Inference Is Moving from Cloud to Devices in 2026

Cloud cost pressures and savings from on-device AI

The main benefits of moving AI inference from cloud to devices in 2026 are lower per-request costs, reduced latency, better reliability and stronger privacy by design. For many organisations, a hybrid edge cloud AI architecture gives the best balance of cost, performance and control.

Managed cloud inference is typically billed per request, per token or per instance-hour, plus network egress. For a busy mobile app or B2B SaaS product, those fees can rival or exceed your training spend within months. As models grow and more latency-sensitive AI applications go live, continuously calling large cloud models becomes eye-wateringly expensive.

Think about a few concrete patterns:

A US bank in New York running card-fraud checks on every tap can move first-pass scoring into the card terminal or phone wallet, streaming only high-risk events back to Amazon Web Services (AWS) or Microsoft Azure.

A UK retailer in London or Manchester can run product-recognition models on in-store cameras at the edge, sending only aggregated metrics to the cloud instead of 24/7 video streams.

A German manufacturer in Munich can run anomaly detection on industrial sensors at the gateway, cutting bandwidth from terabytes of raw telemetry to kilobytes of alerts.

Across these examples, shifting inference on-device can cut recurring cloud inference costs by 30–70% for high-volume workloads, especially where every transaction or sensor tick currently calls a cloud API.
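A back-of-the-envelope model makes the cost argument easier to test against your own bills. The Python sketch below assumes a simple pricing shape (a price per 1,000 cloud calls plus egress per GB) and a fixed monthly cost for running the edge fleet; the `Workload` fields and every number in the example are hypothetical placeholders, not quotes from AWS, Azure or any other provider.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    # Every figure is a hypothetical placeholder; replace with your own billing data.
    requests_per_month: int
    cloud_price_per_1k: float          # USD per 1,000 cloud inference calls
    egress_gb_per_1k: float            # GB transferred per 1,000 calls
    egress_price_per_gb: float         # USD per GB of network transfer
    edge_fixed_cost_per_month: float   # amortised edge tooling, rollout and monitoring (USD)


def cloud_only_cost(w: Workload) -> float:
    """Monthly cost when every request calls the cloud model."""
    per_1k = w.cloud_price_per_1k + w.egress_gb_per_1k * w.egress_price_per_gb
    return w.requests_per_month / 1_000 * per_1k


def hybrid_cost(w: Workload, on_device_share: float) -> float:
    """Monthly cost when a share of requests is resolved on-device and the rest still hit the cloud."""
    if not 0.0 <= on_device_share <= 1.0:
        raise ValueError("on_device_share must be between 0 and 1")
    return cloud_only_cost(w) * (1.0 - on_device_share) + w.edge_fixed_cost_per_month


if __name__ == "__main__":
    w = Workload(requests_per_month=50_000_000, cloud_price_per_1k=0.50,
                 egress_gb_per_1k=0.2, egress_price_per_gb=0.09,
                 edge_fixed_cost_per_month=5_000.0)
    base, hybrid = cloud_only_cost(w), hybrid_cost(w, on_device_share=0.8)
    print(f"cloud-only: ${base:,.0f}/month")
    print(f"hybrid:     ${hybrid:,.0f}/month ({1 - hybrid / base:.0%} lower)")
```

With these made-up inputs the script reports about $25,900 per month cloud-only versus about $10,180 hybrid, roughly 61% lower. The useful part is the structure: savings depend on the on-device share and on whether the fixed edge cost stays small relative to the cloud bill.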

Latency, reliability and offline AI inference at the edge

Cloud inference always adds network hops, and their latency is unpredictable. For latency-sensitive AI applications (fraud checks in card terminals, AR/VR overlays, industrial control loops, real-time translation in airports), those extra tens of milliseconds can break the experience or even violate safety constraints.

Local inference on phones, AI PCs or gateways:

Delivers near-zero network latency for UX-critical tasks (e.g. AR annotations in a startup’s headset).

Keeps robots and PLCs safe in remote plants with patchy 5G or private LTE.

Allows offline inference on trains, ships and EV fleets, syncing back when bandwidth is available.
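Offline inference usually pairs with a store-and-forward outbox: the device keeps acting on local predictions and queues the results until a link is back. This is a minimal Python sketch of that pattern, assuming a hypothetical `send_batch` uploader you would write against your own backend. It keeps the queue in memory for brevity, whereas a real train, ship or vehicle deployment would persist it (for example in SQLite) so results survive a reboot.

```python
import json
import time
from collections import deque
from typing import Any, Callable, Deque, Dict, List


class OfflineOutbox:
    """Store-and-forward queue for on-device inference results.

    `send_batch` is a hypothetical uploader for your own backend that returns True
    on success. Results are kept in memory here; a real device would persist them.
    """

    def __init__(self, send_batch: Callable[[List[str]], bool], max_items: int = 10_000):
        self._queue: Deque[str] = deque(maxlen=max_items)  # oldest entries drop when full
        self._send_batch = send_batch

    def record(self, result: Dict[str, Any]) -> None:
        """Queue one inference result as JSON with a local timestamp."""
        self._queue.append(json.dumps({"ts": time.time(), **result}))

    def flush(self, online: bool, batch_size: int = 100) -> int:
        """Upload queued results in order while online; returns how many were sent."""
        sent = 0
        while online and self._queue:
            batch = [self._queue[i] for i in range(min(batch_size, len(self._queue)))]
            if not self._send_batch(batch):
                break  # leave the batch queued and retry on the next flush
            for _ in batch:
                self._queue.popleft()
            sent += len(batch)
        return sent

    def __len__(self) -> int:
        return len(self._queue)
```

The bounded `deque` is a deliberate trade-off: if a device stays offline long enough, the oldest results are dropped rather than exhausting local storage.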

Privacy, data residency and energy as key drivers

Running models on-device supports privacy and data minimisation: raw biometric, health or payment data never leaves the device; only signals, scores or aggregates go to the cloud. That’s powerful for:

US healthcare providers trying to stay HIPAA-compliant while rolling out bedside decision support or remote monitoring.

UK public sector and the NHS, who must comply with UK-GDPR and local data residency policies.

German industry under DSGVO, BaFin guidance and sector rules for energy, mobility and finance.

Energy-wise, each cloud GPU inference run in a distant data centre carries overhead in cooling, networking and power distribution. Edge NPUs and energy-efficient AI chips can often deliver better joules-per-inference, which matters now that data centres already consume hundreds of terawatt-hours per year.
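Joules-per-inference is simple arithmetic: average power draw multiplied by the time one inference takes, divided by the batch size when requests share a run, and scaled by facility overhead (PUE, power usage effectiveness) for data centres. The sketch below encodes that formula; the power, latency, batch and PUE values in the example are illustrative assumptions, not measurements of any specific chip, and it ignores network energy.

```python
def joules_per_inference(avg_power_watts: float, latency_s: float,
                         batch_size: int = 1, pue: float = 1.0) -> float:
    """Energy for one inference: power x time, shared across a batch, times facility overhead (PUE)."""
    if avg_power_watts < 0 or latency_s < 0:
        raise ValueError("power and latency must be non-negative")
    if batch_size < 1 or pue < 1.0:
        raise ValueError("batch_size must be >= 1 and PUE must be >= 1.0")
    return avg_power_watts * latency_s / batch_size * pue


if __name__ == "__main__":
    # Illustrative assumptions only: a phone NPU and a data-centre GPU.
    edge = joules_per_inference(avg_power_watts=2.0, latency_s=0.015)
    cloud = joules_per_inference(avg_power_watts=700.0, latency_s=0.040, batch_size=32, pue=1.2)
    print(f"edge NPU:  {edge:.3f} J per inference")
    print(f"cloud GPU: {cloud:.3f} J per inference (before network energy)")
```

Batching matters a lot on the cloud side: under these assumptions, the same GPU serving one request at a time would use 32x more energy per inference, which is why the edge advantage is largest for unbatched, latency-sensitive traffic.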

Architectures, Chips and Devices for Edge and On-Device AI

NPUs, GPUs and edge AI chips in phones, AI PCs and gateways

Modern on-device AI relies on specialised hardware:

NPUs (Neural Processing Units): highly parallel, low-power accelerators optimised for matrix operations. Smartphone and PC NPUs from Qualcomm, Apple and others now reach tens of TOPS, and over 80% of recent Qualcomm SoCs ship with an NPU.

GPUs: still critical for heavy vision and graphics, especially on NVIDIA-powered edge gateways in factories and smart cities.

Dedicated edge AI chips: e.g. Arm-based SoCs in industrial gateways, routers and cameras.

Map hardware to workloads:

Vision: GPUs and NPUs in cameras, robots and connected vehicles.

Speech and translation: NPUs in phones and AI PCs for low-latency transcription and on-device generative AI assistants.

Recommendations and ranking: NPUs in retail apps and terminals for personalisation at the edge.

TinyML and compact transformer models on microcontrollers

Not every device has a beefy NPU. TinyML and compact transformer models bring inference to microcontrollers with kilobytes of RAM, like STMicroelectronics-based sensors in European smart grids or factory lines. Techniques include:

Quantisation (INT8 / INT4) and pruning to reduce model size.

Architecture tricks such as efficient transformer variants and knowledge distillation.

Event-driven, duty-cycled designs so battery-powered sensors only wake models when necessary.

This supports DSGVO-compliant edge AI inference in Germany, where raw sensor data or audio never leaves the device; only compressed signals are sent back for analytics in a BI stack or data warehouse.
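To see why INT8 quantisation shrinks models roughly 4x (one byte per weight instead of four for float32), here is the core arithmetic of symmetric per-tensor quantisation in plain Python. It is a teaching sketch, not what production toolchains do end to end; the TensorFlow Lite converter and ONNX Runtime’s quantisation tools add calibration data, per-channel scales and other refinements on top of this idea.

```python
from typing import List, Sequence, Tuple


def quantize_int8_symmetric(weights: Sequence[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor INT8 quantisation: each weight w is stored as q = round(w / scale)."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0  # map the largest magnitude to +/-127
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: Sequence[int], scale: float) -> List[float]:
    """Approximate the original float weights: w ~= q * scale."""
    return [v * scale for v in q]


if __name__ == "__main__":
    weights = [0.42, -1.27, 0.003, 0.9, -0.5]
    q, scale = quantize_int8_symmetric(weights)
    restored = dequantize(q, scale)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    # Rounding error per weight is at most half a quantisation step (scale / 2).
    print(q, f"scale={scale:.4f}", f"max error={worst:.4f}")
```

The trade-off is visible in the small weight (0.003), which rounds to zero: tiny values lose precision, which is why real toolchains often use per-channel scales and calibrate on representative data.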

Tooling and frameworks for AI inference at the edge

The edge tooling ecosystem is now mature enough for real teams:

Runtimes: TensorFlow Lite (now LiteRT), ONNX Runtime, Core ML and PyTorch Mobile (now succeeded by ExecuTorch).

Vendor SDKs for Qualcomm, NVIDIA, Arm, Apple and industrial gateways.

Cloud-to-edge deployment with AWS IoT Greengrass and Azure IoT Edge, plus Google Cloud Vertex AI for training and exporting edge-ready models.

You convert and optimise models in your MLOps pipeline, push them to edge fleets, and monitor them alongside cloud models. Mak It Solutions often pairs this with business intelligence services so product and operations teams can see edge inferences and outcomes in one place.
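As one example of targeting different accelerators from a single model file, ONNX Runtime lets you pass an ordered list of execution providers when creating a session. The helper below is a hypothetical sketch that keeps only the providers present on the current device, in a preference order chosen for illustration, and always ends with the CPU fallback; check the ONNX Runtime documentation for which providers your build actually includes.

```python
from typing import Iterable, List, Sequence

# Execution provider names as ONNX Runtime reports them. Which ones exist on a
# device depends on the onnxruntime package or build installed there.
PREFERRED_ORDER = (
    "QNNExecutionProvider",     # Qualcomm NPUs via the Qualcomm AI Engine Direct (QNN) SDK
    "CoreMLExecutionProvider",  # Apple devices
    "CUDAExecutionProvider",    # NVIDIA GPUs, e.g. on edge gateways
    "CPUExecutionProvider",     # always-available fallback
)


def pick_providers(available: Iterable[str],
                   preferred: Sequence[str] = PREFERRED_ORDER) -> List[str]:
    """Keep preferred providers that are actually available, best first, ending with CPU."""
    available_set = set(available)
    chosen = [p for p in preferred if p in available_set]
    if "CPUExecutionProvider" not in chosen:
        chosen.append("CPUExecutionProvider")
    return chosen


# On a real device you would feed in ONNX Runtime's own list ("model.onnx" is a placeholder):
#   import onnxruntime as ort
#   providers = pick_providers(ort.get_available_providers())
#   session = ort.InferenceSession("model.onnx", providers=providers)
```

Keeping the preference list in one place means the same app binary and model file can run on an NPU phone, a GPU gateway or a plain CPU box, with the fleet dashboard recording which provider each device actually used.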

Edge AI vs Cloud AI: Designing a Hybrid Architecture

Latency, bandwidth and cost trade-offs of edge vs cloud

Edge AI excels at low-latency, privacy-sensitive, bandwidth-heavy workloads. Cloud AI shines at global scale, large shared models and heavy retraining.

In UK 5G rollouts, for example, the radio network and base stations need local inference for beamforming and quality-of-service decisions, while the cloud handles longer-term planning and SON (self-organising network) optimisation.

Pure-cloud architectures become bottlenecks when:

Round-trip latency exceeds your UX or safety budget.

Bandwidth costs scale faster than revenue.

Regulations forbid streaming raw data outside a country or region.
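The three bottleneck conditions above can be turned into a quick per-feature check. This Python sketch is illustrative only: the field names, the p95 network figure and the 10% bandwidth-to-revenue threshold are assumptions, and it approximates “bandwidth costs scale faster than revenue” with a simple ratio rather than a growth-rate comparison.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CloudPathEstimate:
    # All inputs are hypothetical; measure them for your own app, network and market.
    p95_network_rtt_ms: float       # device <-> cloud region round trip at the 95th percentile
    cloud_inference_ms: float       # model execution time in the cloud
    latency_budget_ms: float        # what the UX or control loop can tolerate
    monthly_bandwidth_cost: float   # USD spent moving inference traffic
    monthly_revenue: float          # USD attributable to the feature
    raw_upload_restricted: bool     # does a regulation or contract forbid sending raw inputs out of region?


def pure_cloud_bottlenecks(e: CloudPathEstimate, max_bandwidth_share: float = 0.10) -> List[str]:
    """List the reasons a cloud-only design would become a bottleneck (empty list = none found)."""
    reasons = []
    if e.p95_network_rtt_ms + e.cloud_inference_ms > e.latency_budget_ms:
        reasons.append("latency budget exceeded")
    bandwidth_share = (e.monthly_bandwidth_cost / e.monthly_revenue
                       if e.monthly_revenue > 0 else float("inf"))
    if e.monthly_bandwidth_cost > 0 and bandwidth_share > max_bandwidth_share:
        reasons.append("bandwidth cost too high relative to revenue")
    if e.raw_upload_restricted:
        reasons.append("raw data may not leave the region")
    return reasons
```

For example, a feature with an 80 ms p95 round trip, 40 ms of cloud inference and a 50 ms budget fails on latency alone, regardless of cost; any non-empty result is a signal to look at edge or hybrid options for that feature.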

Hybrid edge cloud AI patterns that actually work

In practice, many enterprises in 2026 end up with hybrid edge cloud AI patterns such as:

Local inference, cloud retraining: first-pass fraud scoring on POS devices, with cloud models retrained on aggregated risk events, e.g. for US banks in New York or San Francisco.

Cloud fallback: an edge model makes a fast decision; uncertain cases or suspected model drift are routed to a more powerful cloud model.

Model distillation: a large cloud model trains a smaller edge model that runs in terminals, phones or gateways.
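The cloud-fallback pattern boils down to a confidence gate. The sketch below assumes the edge model returns a label plus a confidence score in [0, 1]; `toy_edge_model`, `toy_cloud_model` and the 0.85 threshold are hypothetical stand-ins. When the cloud is unreachable it keeps the edge answer, so the device keeps working offline.

```python
from typing import Callable, Sequence, Tuple

Prediction = Tuple[str, float]  # (label, confidence in [0, 1])
Model = Callable[[Sequence[float]], Prediction]


def classify_with_fallback(features: Sequence[float], edge_model: Model, cloud_model: Model,
                           confidence_threshold: float = 0.85,
                           cloud_available: bool = True) -> Tuple[str, str]:
    """Return (label, source). Trust the edge model when confident; otherwise escalate."""
    label, confidence = edge_model(features)
    if confidence >= confidence_threshold or not cloud_available:
        return label, "edge"
    cloud_label, _ = cloud_model(features)
    return cloud_label, "cloud"


def toy_edge_model(x: Sequence[float]) -> Prediction:
    # Hypothetical small on-device model: mean risk score, confidence = distance from 0.5.
    score = sum(x) / len(x)
    return ("fraud" if score > 0.5 else "ok", abs(score - 0.5) * 2)


def toy_cloud_model(x: Sequence[float]) -> Prediction:
    # Hypothetical larger cloud model with access to more signals.
    return ("fraud" if max(x) > 0.9 else "ok", 0.99)


if __name__ == "__main__":
    print(classify_with_fallback([0.95, 0.99], toy_edge_model, toy_cloud_model))  # confident -> edge
    print(classify_with_fallback([0.50, 0.55], toy_edge_model, toy_cloud_model))  # uncertain -> cloud
```

The threshold only means something if the edge model’s confidence is reasonably calibrated, so it is worth tuning on held-out data and tracking how often requests escalate to the cloud, since that rate drives both cost and latency.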

Examples

UK telecom and 5G providers in London route RAN optimisation to near-edge servers and shift longer-term analytics to the cloud during off-peak hours.

Industrie 4.0 factories in Berlin and Munich use Bosch and Siemens gateways for real-time control, with AWS or Azure used for global fleet learning and dashboards.

Deciding which AI models run on-device vs in the cloud

A business can decide which AI models to run on devices and which to keep in the cloud by scoring each workload across five dimensions: latency, privacy sensitivity, data volume, regulatory risk and hardware availability. High-latency-sensitivity, high-privacy, high-volume workloads are prime candidates for on-device AI; low-volume, batch, cross-tenant workloads stay in the cloud.

Practical checklist:

Latency: does the user or control loop need sub-50 ms responses?

Privacy & compliance: is the data covered by GDPR/DSGVO, UK-GDPR, HIPAA, PCI DSS or sector rules?

Data volume: are you streaming images, video or high-frequency sensor data?

Hardware: do target devices have NPUs, GPUs or at least vector-capable CPUs?

Operational model: can your team manage updates, observability and security on a distributed fleet?
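The five-dimension scoring described above can be captured in a few lines. In this Python sketch the 1–5 scales, the weights and the 3.5 threshold are hypothetical starting points to calibrate with your own teams, and the operational-model question from the checklist acts as a gate rather than a score.

```python
from dataclasses import dataclass

# Hypothetical weights (they sum to 1.0); tune them with your own stakeholders.
WEIGHTS = {
    "latency_sensitivity": 0.25,
    "privacy_sensitivity": 0.25,
    "data_volume": 0.20,
    "regulatory_risk": 0.15,
    "hardware_availability": 0.15,
}


@dataclass
class WorkloadProfile:
    name: str
    latency_sensitivity: int    # 1 = batch is fine ... 5 = needs sub-50 ms responses
    privacy_sensitivity: int    # 1 = public data ... 5 = health, biometric or payment data
    data_volume: int            # 1 = small payloads ... 5 = video or high-frequency sensors
    regulatory_risk: int        # 1 = low ... 5 = GDPR/DSGVO, UK-GDPR, HIPAA or PCI DSS in scope
    hardware_availability: int  # 1 = no accelerator ... 5 = NPU/GPU on most target devices
    fleet_ops_ready: bool       # can the team update, observe and secure a device fleet?


def edge_score(w: WorkloadProfile) -> float:
    """Weighted 1-5 score; higher means a stronger on-device candidate."""
    for field in WEIGHTS:
        value = getattr(w, field)
        if not 1 <= value <= 5:
            raise ValueError(f"{field} must be between 1 and 5, got {value}")
    return sum(weight * getattr(w, field) for field, weight in WEIGHTS.items())


def placement(w: WorkloadProfile, threshold: float = 3.5) -> str:
    if edge_score(w) < threshold:
        return "keep in cloud"
    if not w.fleet_ops_ready:
        return "on-device candidate (build fleet operations first)"
    return "on-device candidate"


if __name__ == "__main__":
    fraud = WorkloadProfile("card-fraud first pass", 5, 5, 4, 5, 4, fleet_ops_ready=True)
    churn = WorkloadProfile("monthly churn report", 1, 2, 2, 2, 1, fleet_ops_ready=True)
    for w in (fraud, churn):
        print(f"{w.name}: {edge_score(w):.2f} -> {placement(w)}")
```

With these illustrative scores, a first-pass card-fraud check lands at 4.65 and comes out as an on-device candidate, while a monthly churn report lands at 1.60 and stays in the cloud.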

On-Device AI Inference Use Cases Across US, UK and Europe

Healthcare and life sciences

In healthcare, on-device AI inference keeps sensitive data local while enabling real-time decisions. Examples include:

Medical imaging triage on scanners at US hospitals, where first-pass AI runs on-prem or at the edge for HIPAA-compliant workflows.

Bedside decision support apps for clinicians running in secure enclaves on AI PCs.

Remote monitoring wearables with on-device models for arrhythmia detection or fall risk, sending only alerts to the cloud.

In the NHS and at UK private providers, on-device inference combined with UK-GDPR controls supports data minimisation and clear audit trails for edge AI pipelines.

Finance, payments and retail

For finance and retail, edge AI hits both revenue and risk.

Mobile payment apps, including patient-payment flows at US healthcare providers, that tokenise and score transactions locally before calling backend services.

PCI DSS-aligned POS terminals across London, Frankfurt and New York running local fraud and scam detection models and sending enriched features to the cloud.

In-store analytics in Paris or Amsterdam, where cameras analyse footfall and shelf interaction on edge gateways, avoiding streaming identifiable video to the cloud.

Open Banking APIs and real-time payments mean milliseconds matter. On-device scoring can help reduce false positives, while hybrid architectures let you centralise risk policies and monitoring.

Manufacturing, automotive and smart infrastructure in Europe

Europe’s Industrie 4.0 factories and smart infrastructure are natural homes for edge AI:

Industrial IoT and robotics in German plants from Munich to Berlin use gateways from Siemens or Bosch to run predictive maintenance models on vibration data.

Connected cars and EV fleets across the EU rely on on-device AI for perception, driver assistance and route optimisation, with cloud services aggregating fleet data for retraining.

Smart city deployments in Paris or Amsterdam use edge AI for traffic optimisation, parking, lighting and incident detection.

Mak It Solutions often pairs these deployments with multi-cloud strategy design so European clients can balance sovereignty, BaFin guidance and cost across AWS, Azure and local sovereign clouds.

Compliance, Security and Governance for Edge AI

Designing GDPR/DSGVO and UK-GDPR-compliant edge AI pipelines

On-device AI can directly support GDPR/DSGVO and UK-GDPR principles like data minimisation, purpose limitation and storage limitation.

Key patterns:

Process data locally where it’s collected, especially in Germany, where regulators expect DSGVO-compliant processing in critical sectors.

Use the cloud for anonymised aggregates, global analytics and model retraining, and document that work to meet the EU AI Act’s obligations for systems classified as high-risk.

Align with NIS2 for critical infrastructure by treating edge nodes as part of your essential network assets.
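A common way to apply data minimisation in practice is to aggregate on the device and upload only counts. The Python sketch below assumes events arrive as dictionaries (for example, in-store camera detections tagged with a zone); it never returns raw records, and it suppresses groups smaller than a hypothetical `min_count` of 10, a k-anonymity-style rule of thumb. Small-count suppression is an illustrative choice, not something GDPR/DSGVO or UK-GDPR prescribes, and on its own it does not make data anonymous in the legal sense.

```python
from collections import Counter
from typing import Dict, Iterable


def aggregate_for_upload(events: Iterable[Dict[str, str]], group_key: str,
                         min_count: int = 10) -> Dict[str, int]:
    """Build the only payload that leaves the device: counts per group.

    Raw events (which may contain identifiers) are never returned. Groups seen
    fewer than `min_count` times are dropped, because very small counts can
    point to individuals.
    """
    counts = Counter(e[group_key] for e in events if group_key in e)
    return {group: n for group, n in counts.items() if n >= min_count}
```

For example, twelve detections in zone A and three in zone B upload as `{"A": 12}`; zone B is withheld until enough traffic accumulates to report it safely.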

Securing models and data on millions of devices

Security for edge AI is part crypto, part DevOps, part MLOps:

Harden model artefacts with signed binaries, encrypted storage and secure enclaves/TEEs.

Use mutual TLS and certificate-based authentication for model update channels.

Implement staged rollouts, health checks and rollback strategies across fleets.

Monitor behaviour both at the edge (basic health, drift signals) and in the cloud (aggregated metrics, abuse detection).
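Signed artefacts only help if devices refuse anything that does not match. This Python sketch shows the device-side integrity check with the standard library: it reads a model file once, hashes it with SHA-256 and compares the result in constant time with the digest from a release manifest. It assumes that manifest has already been authenticated, for example by verifying an asymmetric signature (such as Ed25519) with a public key baked into firmware; that step is left out here because it needs a third-party crypto library.

```python
import hashlib
import hmac
from pathlib import Path


def load_model_if_trusted(path: Path, expected_sha256: str) -> bytes:
    """Read a model artefact once, verify it, and return the exact bytes that were checked.

    `expected_sha256` is assumed to come from a release manifest whose signature has
    already been verified; this function only checks integrity against that digest.
    """
    data = path.read_bytes()
    actual = hashlib.sha256(data).hexdigest()
    # compare_digest avoids leaking how many leading characters matched via timing.
    if not hmac.compare_digest(actual, expected_sha256.strip().lower()):
        raise RuntimeError(f"refusing to load {path.name}: SHA-256 digest mismatch")
    return data  # hand these bytes (not a fresh re-read) to your inference runtime
```

Returning the verified bytes, rather than re-reading the file afterwards, avoids a gap in which the artefact could be swapped between the check and the load. Very large models would hash in chunks instead of reading everything into memory.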

Mak It Solutions builds on experience from phishing-resistant MFA programmes to design identity, device trust and zero-trust access for edge AI pipelines.

Working with auditors and regulators in US, UK and EU

For auditors and regulators, edge AI doesn’t remove requirements; it just moves them closer to the user:

SOC 2 and PCI DSS assessments cover controls around data handling, encryption, access and change management, and edge nodes are in scope.

Sector regulators like BaFin, the FCA, NHS bodies or US state health departments expect evidence of risk assessment, DPIAs and technical safeguards in edge AI deployments.

Document your edge AI controls once and re-use them: model inventory, data flows, encryption, update pipeline, incident response.

How to Get Started with On-Device AI Inference in 2026

Assessing which workloads belong on-device

Start with a structured assessment instead of jumping straight to hardware shopping.

Inventory AI workloads: models in mobile apps, web apps, contact centres, factories and vehicles.

Score by latency, privacy, cost and hardware readiness: highlight workloads with high latency sensitivity and high cloud costs.

Identify quick wins: mobile apps, AI PC-based tools and industrial gateways where you already control the device fleet.

This is exactly the type of discovery Mak It Solutions runs for clients across the USA, UK and EU before committing to significant edge hardware spend.

Building your hybrid edge cloud roadmap

Next, outline a realistic roadmap:

Pilot: one or two use cases (e.g. on-device AI inference for US healthcare providers, or fraud scoring in a London fintech).

Reference architecture: standardise your hybrid edge cloud AI architecture, observability and security baselines.

Scale and governance: formalise model lifecycle management, compliance templates and incident response.

You’ll likely lean on existing cloud investments (AWS, Azure or GCP) for fleet management, registries and MLOps, while using partners for device integration.

From pilot to production

Most teams don’t want to become hardware and firmware experts overnight. Work with:

Specialist edge hardware vendors and chipmakers (NVIDIA, Qualcomm, Arm ecosystem partners).

Cloud providers for secure distribution and monitoring.

An implementation partner like Mak It Solutions that understands cloud, mobile, data and compliance together (see our services overview and About Us).

If you’re planning an on-device AI or edge AI initiative, our Editorial Analytics Team can help you turn this guide into a scoped roadmap covering architecture, TCO and compliance.

Key Takeaways

On-device AI inference moves compute to devices, cutting latency and bandwidth while improving privacy and compliance for regulated industries.

2026 is a tipping point thanks to powerful NPUs in consumer and industrial devices, rising data-centre energy costs and stricter regulations in the US, UK, Germany and EU.

The most effective approach is usually a hybrid edge cloud AI architecture, where latency-sensitive AI applications run locally and heavy training stays in the cloud.

Compliance frameworks like GDPR/DSGVO, UK-GDPR, HIPAA, PCI DSS, SOC 2, the EU AI Act and NIS2 all influence where and how you run inference and store data.

A practical roadmap starts with workload assessment, quick-win pilots and a scalable reference architecture, supported by the right partners and platforms.

If you’re wrestling with where edge AI fits into your roadmap (mobile apps, AI PCs, industrial gateways or connected vehicles), you don’t have to figure it out alone. Mak It Solutions helps teams in the USA, UK, Germany and across the EU design secure, compliant hybrid edge cloud AI architectures that work in production.

Share your key workloads, regulators and target devices, and our team will help you shape a concrete on-device AI inference plan for 2026.

FAQs

Q: How much latency can on-device AI inference realistically save for a mobile or web app?

A: On-device AI inference can cut round-trip latency from hundreds of milliseconds to just a few milliseconds because predictions happen directly on the device instead of travelling to a remote data centre. In practice, many teams see 5–20x faster responses for latency-sensitive AI applications like fraud checks, AR overlays or smart camera filters. The exact improvement depends on network quality, device hardware and how efficiently the model is implemented.

Q: Do we need AI PCs with NPUs for developers, or can existing laptops handle edge AI workloads?

A: You don’t strictly need AI PCs with NPUs to start building edge AI; most developers can prototype using existing CPUs/GPUs and device simulators. However, AI PCs with built-in NPUs make it easier to test NPU performance and battery impact for real workloads like on-device generative AI assistants. In 2026, it’s sensible to give at least part of your engineering team NPU-capable machines if edge AI is a core product pillar.

Q: What are the hidden costs of keeping all AI inference in the cloud instead of moving some to devices?

A: Hidden costs include steadily rising per-inference charges, bandwidth egress fees and the need to overprovision capacity for peak loads. There’s also an opportunity cost: higher latency can reduce conversion rates in apps, and regulators may require expensive data-locality workarounds if all raw data flows to central regions. Over a few years, these costs can easily exceed the investment needed to move suitable workloads to on-device AI inference.

Q: How does on-device AI inference impact model accuracy and monitoring compared with cloud-only deployments?

A: On-device models are often smaller and more optimised, so there can be a slight drop in accuracy compared with huge cloud models. Techniques like distillation and quantisation-aware training help close that gap. The bigger shift is in monitoring: you need privacy-preserving telemetry, such as aggregated error metrics, sampled inputs or feature distributions, instead of raw data logs. With a solid MLOps stack, you can still detect drift, trigger retraining and roll out updated edge models safely.
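Privacy-preserving drift monitoring can be as simple as devices uploading binned counts of a feature and the cloud comparing them with the training distribution. The sketch below uses the population stability index (PSI), one widely used drift statistic picked here for illustration; the bin edges and counts are hypothetical, and the common rule of thumb (below about 0.1 stable, above about 0.25 a significant shift) is a heuristic, not a standard.

```python
import math
from bisect import bisect_right
from typing import List, Sequence


def histogram(values: Sequence[float], edges: Sequence[float]) -> List[int]:
    """On-device: count values per bin (edges must be sorted). Only these counts are uploaded."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return counts


def population_stability_index(expected: Sequence[int], actual: Sequence[int],
                               eps: float = 1e-6) -> float:
    """In the cloud: PSI = sum over bins of (a - e) * ln(a / e), using bin proportions."""
    if len(expected) != len(actual):
        raise ValueError("histograms must use the same bins")
    e_total, a_total = sum(expected), sum(actual)
    if e_total == 0 or a_total == 0:
        raise ValueError("histograms must not be empty")
    psi = 0.0
    for e_count, a_count in zip(expected, actual):
        e = max(e_count / e_total, eps)  # eps avoids log(0) for empty bins
        a = max(a_count / a_total, eps)
        psi += (a - e) * math.log(a / e)
    return psi


if __name__ == "__main__":
    baseline = [25, 25, 25, 25]   # training-time counts per bin (hypothetical)
    this_week = [5, 15, 30, 50]   # aggregated counts reported by the fleet (hypothetical)
    print(f"PSI = {population_stability_index(baseline, this_week):.2f}")
```

This example prints PSI = 0.56, which the rule of thumb would treat as a significant shift worth triggering a retraining review, all without any raw input leaving the devices.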

Q: What are best practices for securely updating AI models across thousands of edge devices in multiple countries?

A: Treat model updates like software releases. Sign every model artefact, encrypt transport and deliver via secure, authenticated channels (for example, device management or MDM tools). Use staged rollouts, starting with internal fleets and then a small percentage of users, combined with automatic rollback if health checks fail. Maintain clear inventories of which models run on which devices in which countries so you can prove GDPR/DSGVO, UK-GDPR, HIPAA or PCI DSS compliance during audits. Logging every model version and deployment event is essential.
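Staged rollouts are easier to audit when device selection is deterministic. One common approach, sketched here in Python as an illustration, hashes the device ID together with the model version into a number in [0, 1) and compares it with the current stage percentage; the function names and the internal-fleet flag are hypothetical.

```python
import hashlib


def rollout_bucket(device_id: str, model_version: str) -> float:
    """Map a device deterministically to [0, 1) for a given release."""
    digest = hashlib.sha256(f"{model_version}:{device_id}".encode()).digest()
    # Keep 53 bits so the division is exact in a float and can never reach 1.0.
    return (int.from_bytes(digest[:8], "big") >> 11) / float(1 << 53)


def should_receive(device_id: str, model_version: str, stage_percent: float,
                   is_internal: bool = False) -> bool:
    """Internal devices get every release first; others join as the stage percentage rises."""
    if is_internal:
        return True
    return rollout_bucket(device_id, model_version) < stage_percent / 100.0
```

Because a device’s bucket is fixed for a given release, raising `stage_percent` from 1 to 5 to 25 only ever adds devices, and salting with the version means a different slice of the fleet goes first on each release. Halting a rollout is simply not raising the percentage; rolling back means pinning devices to the previous `model_version`.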
