AI-Native Development Platforms for Faster Teams

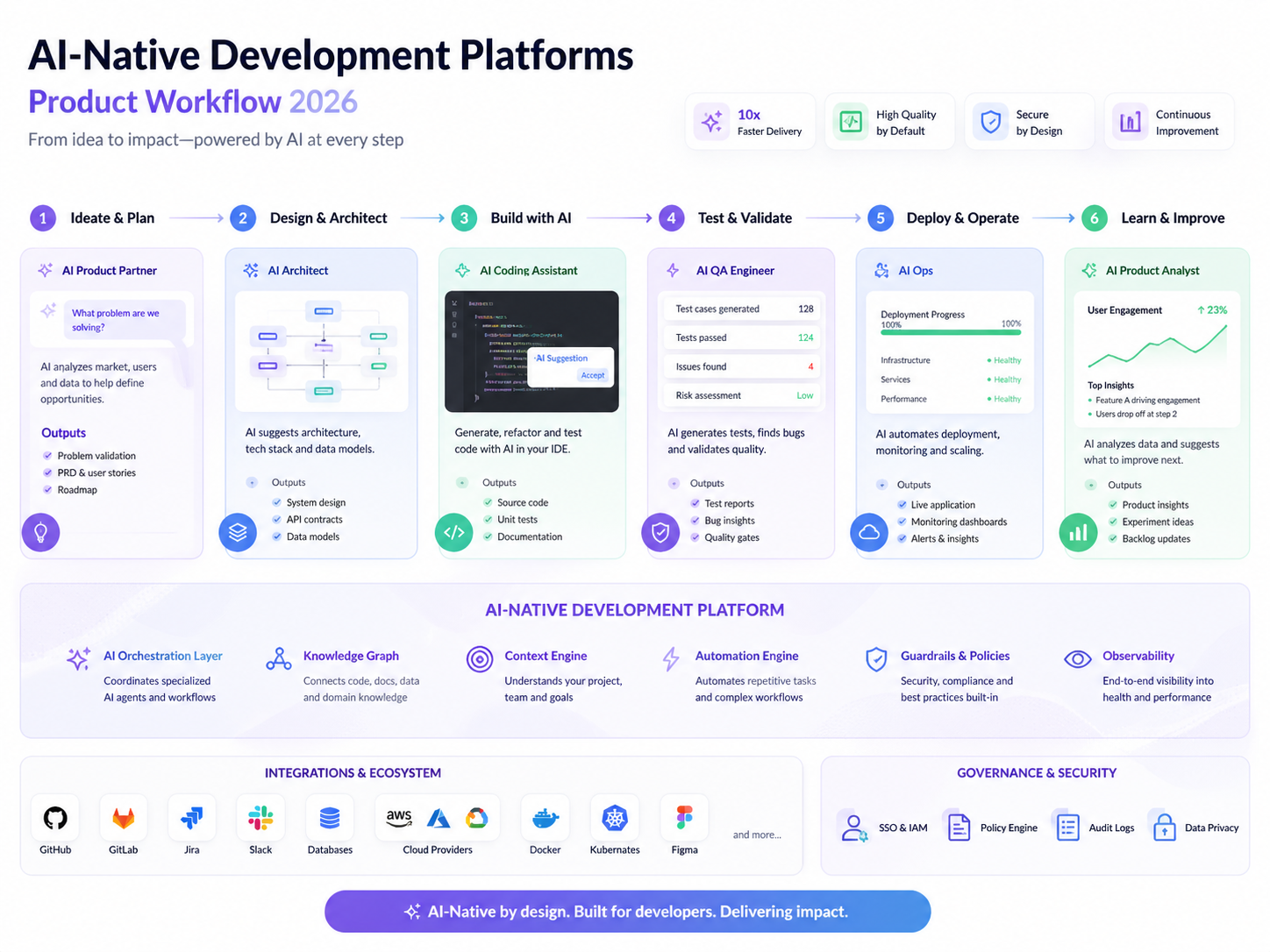

AI-native development platforms are becoming the new operating layer for product teams that need faster delivery without losing control of quality, security, or compliance. They bring AI into product discovery, prototyping, coding, testing, deployment, documentation, and governance, not just code suggestions.

For product teams, the main value is simple: AI-native development platforms help turn ideas into validated software faster while keeping humans responsible for product judgment, architecture, risk, and customer impact. That matters whether you are building a SaaS MVP in the United States, a fintech workflow in the United Kingdom, or an enterprise platform in Germany or the wider EU.

The shift is already visible. Stack Overflow’s 2025 Developer Survey reports that 84% of respondents use or plan to use AI tools in development, while 51% of professional developers use them daily.

What Are AI-Native Development Platforms?

AI-native development platforms are software environments where AI is built into the full product lifecycle. Instead of sitting beside the team as a chatbot or code assistant, AI becomes part of the workflow that connects research, design, backlog planning, engineering, QA, release management, and governance.

That means AI can help teams:

Summarize user research and support tickets

Draft user stories and acceptance criteria

Generate prototype flows

Suggest technical approaches

Create tests and documentation

Review risks before release

Maintain audit trails for regulated work

In practice, these platforms help teams move from “AI writes snippets of code” to “AI supports the whole product delivery system.”

AI-Native vs AI-Assisted Development

AI-assisted development usually means a developer uses an AI coding tool inside an IDE. It can speed up repetitive coding tasks, explain unfamiliar code, or suggest fixes.

AI-native development goes further. It connects AI with product requirements, design systems, repositories, CI/CD pipelines, cloud environments, analytics, monitoring, and compliance workflows.

A team using Mak It Solutions’ web development services might use AI-assisted tools to speed up front-end implementation. An AI-native approach would also connect the product brief, user stories, test cases, deployment checks, release notes, and post-launch analytics.

That wider connection is where the real operating-model change begins.

Why Product Teams Are Moving Beyond Coding Tools

Most product bottlenecks do not happen only in code.

They happen when discovery is unclear, requirements keep changing, QA is rushed, documentation falls behind, or compliance teams are brought in too late. AI-native development platforms help reduce those gaps by giving product managers, designers, engineers, QA, security, and compliance teams a shared workflow.

AI-native development platforms embed AI across discovery, prototyping, coding, testing, deployment, monitoring, and governance. They are different from basic coding assistants because they support the full AI-powered SDLC, not only code generation.

How AI-Native Product Teams Work Differently

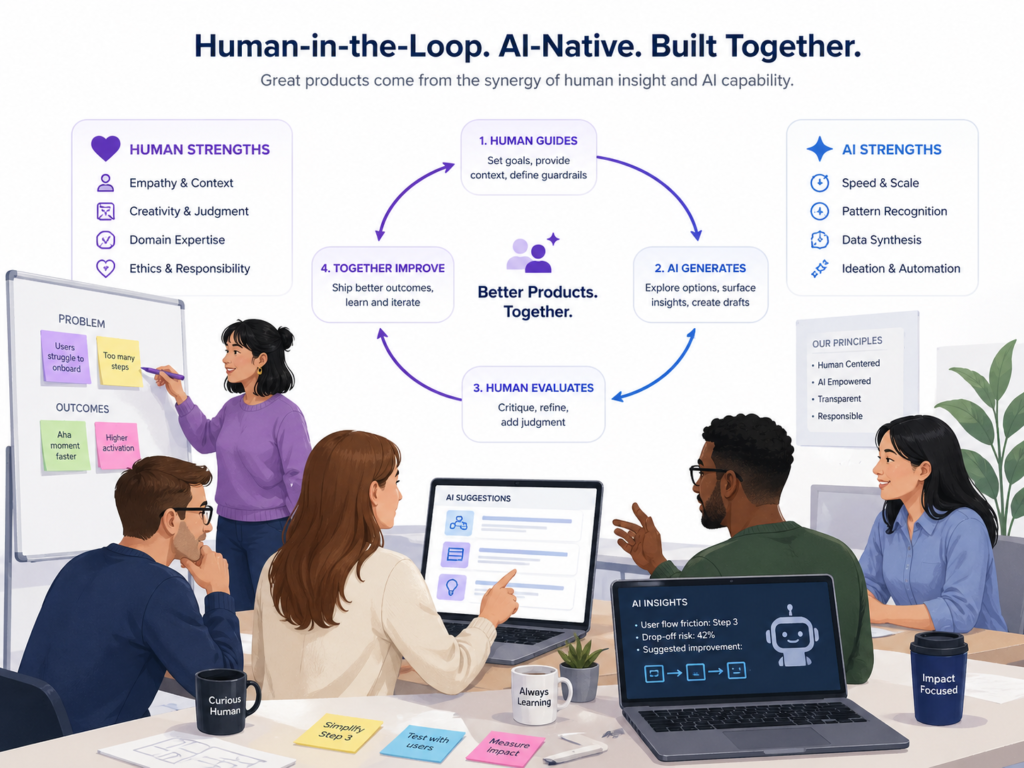

The best product teams do not use AI to remove human judgment. They use it to shorten feedback loops, make options clearer, and reduce repetitive handoffs.

That distinction matters. AI can generate drafts, tests, prototypes, summaries, and recommendations, but people still need to decide what is right for the customer, the business, and the system.

Google Cloud’s 2025 DORA research found that 90% of respondents use AI at work, more than 80% believe it improves productivity, and 30% report little or no trust in AI-generated code. That is a useful reality check: adoption is high, but trust still depends on review, context, and strong engineering practices.

Product Managers Become AI-Enabled Builders

Product managers can now move from vague feature ideas to more concrete artifacts. They can turn interview notes into problem statements, convert feedback into backlog themes, draft user stories, and generate prototype flows before engineering time is committed.

This does not replace engineering. It makes product thinking easier to test.

For example, a PM in Austin might use an AI-native platform to turn customer interview notes into a clickable workflow. Engineers can then review the architecture, edge cases, permissions, data model, and delivery effort before the team commits to a build.

Designers, Engineers, and PMs Share Faster Feedback Loops

AI-native development platforms help cross-functional teams work from the same context. A designer in London, an engineer in Manchester, and a data analyst in Dublin can review the same AI-generated prototype, test plan, and backlog structure.

That is especially useful for distributed SaaS, mobile app, and analytics teams.

When the product needs iOS, Android, or cross-platform delivery, these workflows can also connect with mobile app development services so discovery, design, and build decisions stay aligned.

Human-in-the-Loop Workflows Keep Quality in Check

Human-in-the-loop product development means people approve AI outputs before those outputs affect customers, revenue, safety, regulated data, or production systems.

That review layer is not a formality. It is the difference between useful acceleration and unmanaged risk.
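As a rough illustration, a review gate of this kind can be sketched in a few lines of Python. The impact areas come from the definition above; the `AIOutput` class, the `approved_by` field, and the function names are hypothetical and not taken from any particular platform.

```python
from dataclasses import dataclass, field
from typing import Optional

# Impact areas that need a named human approver (taken from the text above).
REVIEW_REQUIRED = {"customers", "revenue", "safety", "regulated_data", "production"}


@dataclass
class AIOutput:
    """An AI-generated artifact waiting to be used (hypothetical structure)."""
    summary: str
    affects: set[str] = field(default_factory=set)
    approved_by: Optional[str] = None


def needs_human_review(output: AIOutput) -> bool:
    """True when the output touches any area on the review list."""
    return bool(output.affects & REVIEW_REQUIRED)


def can_ship(output: AIOutput) -> bool:
    """Low-impact outputs pass; high-impact ones need a recorded approver."""
    if not needs_human_review(output):
        return True
    return output.approved_by is not None


draft = AIOutput("Rewrite checkout copy", affects={"customers", "revenue"})
blocked = can_ship(draft)      # False: no approver recorded yet
draft.approved_by = "pm.lead"
released = can_ship(draft)     # True: an approver is now on record
```

The point of the sketch is that the approval is stored with the output, so the same record can later serve as review evidence.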

Mak It Solutions has also covered scalable review patterns in its guide to human-in-the-loop AI workflows. For AI-native product teams, this kind of review is essential for code quality, security, privacy, UX, and compliance.

Core Capabilities to Look For in AI-Native Development Platforms

A strong AI-native platform should improve the full product operating model, not just developer speed.

Look for capabilities that support collaboration, traceability, integration, security, and governance.

AI Prototyping, MVP Creation, and Product Discovery Automation

Useful AI-native platforms can turn messy inputs into structured product work. That includes research notes, support tickets, sales feedback, analytics events, stakeholder requests, and market assumptions.

Strong capabilities include:

Prototype generation

Persona and segment summaries

Journey maps

User story drafts

MVP scope suggestions

Acceptance criteria

Test case drafts

Product risk summaries

For MVP delivery, teams can pair AI prototyping with Mak It Solutions’ services hub to connect strategy, design, web, mobile, analytics, and delivery planning.

Agentic Development Workflows and Autonomous Software Agents

Agentic development workflows use AI agents to plan and execute multi-step tasks. These agents may create branches, suggest code changes, run tests, open pull requests, generate documentation, or summarize issues.

That sounds powerful because it is. It also needs limits.

Agents should work inside permissioned environments with activity logs, review gates, rollback options, and clear ownership. The faster the agent can act, the more important the guardrails become.
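A minimal sketch of those guardrails, assuming a hypothetical policy in which some actions are always allowed and others need a human review first. The action names, the `AgentSession` class, and the log fields are illustrative assumptions; rollback is not modeled here.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy: what an agent may do alone vs. only after human review.
ALLOWED = {"create_branch", "run_tests", "open_pull_request", "draft_docs"}
NEEDS_REVIEW = {"merge_pull_request", "deploy"}


@dataclass
class AgentSession:
    agent_id: str
    owner: str  # the person accountable for this agent
    log: list[dict] = field(default_factory=list)

    def request(self, action: str, reviewed: bool = False) -> bool:
        """Decide whether the action may proceed and record the decision."""
        if action in ALLOWED:
            decision = "allowed"
        elif action in NEEDS_REVIEW and reviewed:
            decision = "allowed_after_review"
        elif action in NEEDS_REVIEW:
            decision = "blocked_pending_review"
        else:
            decision = "denied_unknown_action"
        # Every request is logged, including the ones that are refused.
        self.log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": self.agent_id,
            "owner": self.owner,
            "action": action,
            "decision": decision,
        })
        return decision.startswith("allowed")


session = AgentSession(agent_id="agent-1", owner="eng.lead")
session.request("run_tests")               # allowed
session.request("deploy")                  # blocked until a human reviews it
session.request("deploy", reviewed=True)   # allowed after review
session.request("delete_database")         # denied: not in the policy
```

Denying anything outside the known action lists is a deliberate choice in this sketch: an agent that gains a new capability stays blocked until someone adds it to the policy.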

AI-Powered SDLC

The biggest value often appears after the first prototype.

AI-native development platforms can help teams generate tests, run regression checks, draft release notes, summarize monitoring signals, identify risky changes, and keep documentation closer to the actual product.

For data-heavy products, this connects naturally with business intelligence and analytics content so product decisions are tied to trusted metrics rather than guesswork.

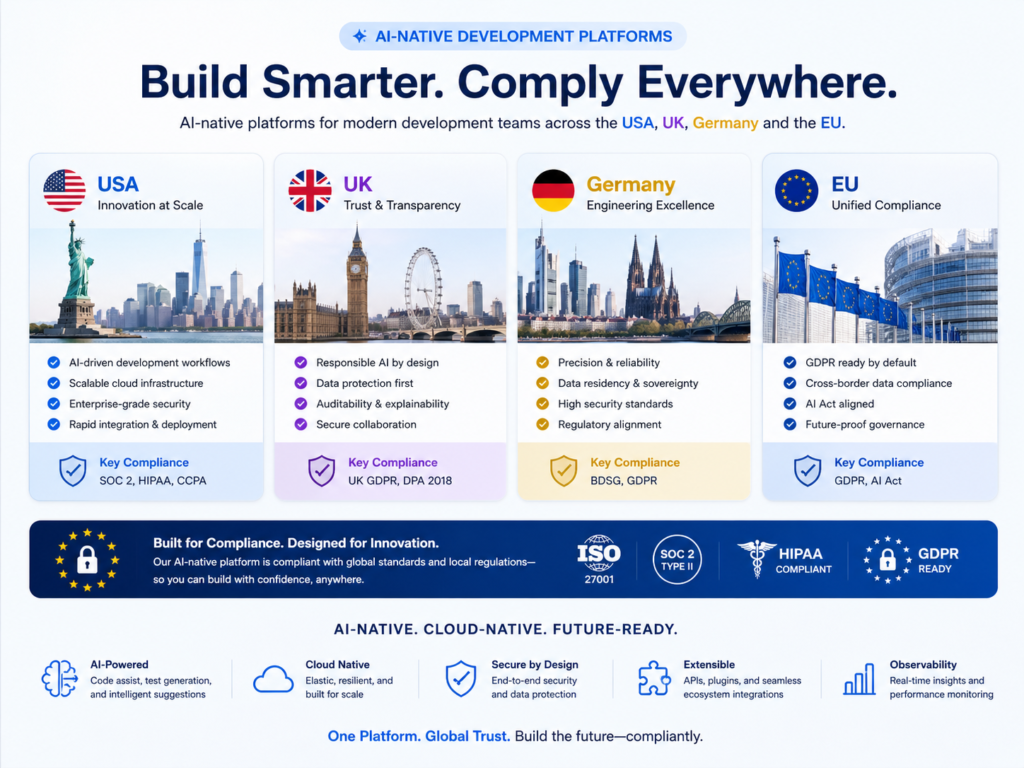

AI-Native Development Platforms by Region

AI-native development platforms need to fit the region where the product will operate. A workflow that works for a SaaS startup in Seattle may need stronger controls before it is used for NHS, BaFin, or EU financial-sector workloads.

USA

In the United States, buyers often care about fast MVP delivery, SOC 2 readiness, HIPAA safeguards for healthcare, PCI DSS for payments, and enterprise security reviews.

For a New York healthtech company or Boston SaaS team, AI-native workflows should include secure prompts, private repositories, access controls, audit logs, vendor risk review, and clear rules about sensitive data.

The practical question is not only “Can this platform build faster?” It is also “Can we prove what happened, who approved it, and what data was used?”

UK

In the United Kingdom, teams building for London fintech, Manchester health services, NHS suppliers, FCA-regulated firms, or Open Banking use cases need stronger controls around privacy, fairness, explainability, model oversight, and operational resilience.

A UK product team should review how the platform handles personal data, where logs are stored, whether AI outputs are explainable enough for review, and how human approvals are recorded.

For regulated teams, AI-native does not mean “move fast and hope.” It means move faster with a better evidence trail.

Germany and the EU

In Germany and the wider European Union, teams need to think about GDPR/DSGVO, BaFin expectations, the EU AI Act, DORA for financial entities, and cloud data residency.

The EU AI Act entered into force on 1 August 2024 and follows a staged application timeline. The European Commission states that it becomes fully applicable on 2 August 2026, with some provisions applying earlier and some high-risk system rules extending to 2 August 2027.

DORA has also been in application since 17 January 2025 for many EU financial entities, focusing on digital operational resilience and ICT risk management.

For teams in Berlin, Munich, Frankfurt, Amsterdam, Dublin, Paris, Stockholm, or Madrid, platform selection should include cloud region strategy, encryption, processor review, access control, audit exports, and model governance.

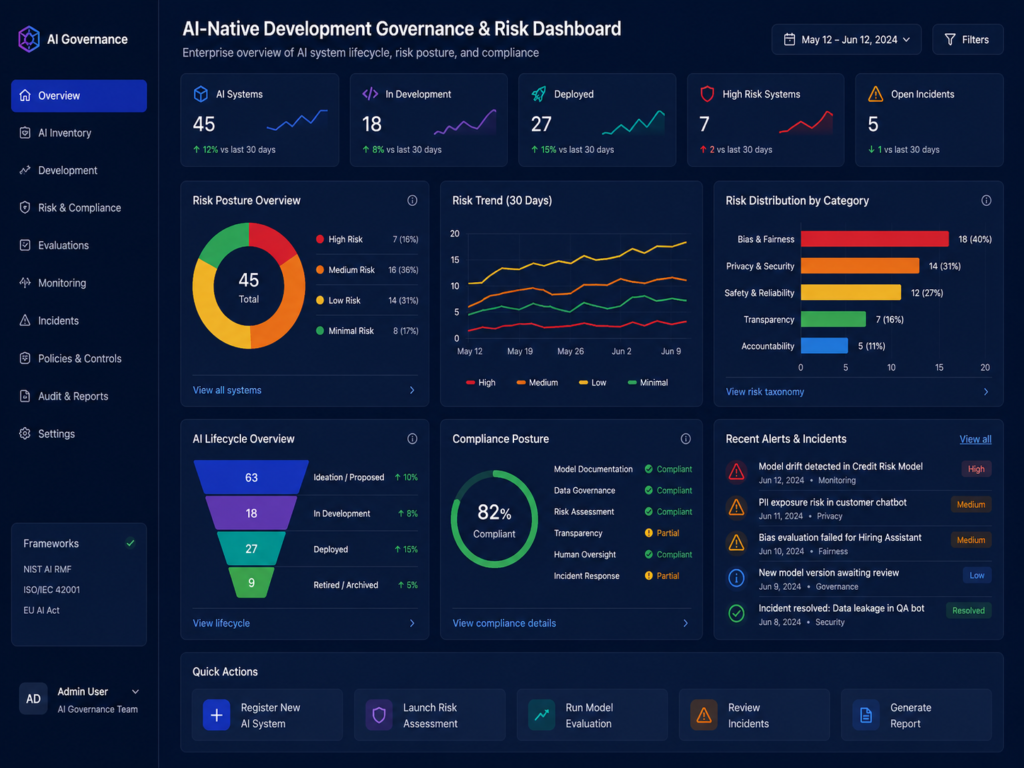

Governance, Security, and Compliance Risks

AI-native development creates risk when teams move faster than their controls.

The goal is not to slow innovation. The goal is to make AI use visible, reviewable, and safe enough for real products.

Responsible AI Governance for Product Teams

Responsible AI governance means defining who can use AI, what data can be entered, which tools are approved, what outputs require review, and how incidents are handled.

Practical controls include:

Approved AI use-case lists

Restricted data policies

Model and vendor inventories

Prompt and activity logging

Human approval workflows

Risk scoring

Incident response plans

Exportable compliance evidence

This is especially important when AI-generated outputs influence user experience, payments, healthcare workflows, financial decisions, or regulated customer data.

Privacy-by-Design for Regulated Industries

Privacy-by-design means thinking about data before the product ships. Teams should minimize data, control access, document flows, define retention, and review vendors early.

For healthcare, fintech, insurance, education, and government-adjacent products, privacy cannot be patched in at the end.

Teams can also learn from Mak It Solutions’ privacy architecture thinking in privacy-by-design for IoT, even when the target market is the US, UK, or EU.

The Main Risks to Watch

The main risks in AI-native development platforms are data leakage, weak audit trails, hallucinated outputs, insecure code, over-permissioned agents, and unclear ownership.

The fastest mature teams treat these as design constraints. They restrict sensitive data in prompts, review AI-generated code, require approvals before release, and make ownership visible across the workflow.
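Restricting sensitive data in prompts can start with a simple redaction step before text leaves the team's environment. The sketch below is illustrative only: the two regular expressions are simplified assumptions, and a real deployment would need a vetted detection approach rather than these patterns.

```python
import re

# Illustrative patterns only; they will miss cases and are not production-grade.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


def redact(prompt: str) -> tuple[str, list[str]]:
    """Replace matches with placeholders and report which kinds were found."""
    found = []
    for label, pattern in PATTERNS.items():
        prompt, count = pattern.subn(f"[{label}]", prompt)
        if count:
            found.append(label)
    return prompt, found


clean, kinds = redact("Refund jane@example.com on card 4111 1111 1111 1111")
# clean == "Refund [EMAIL] on card [CARD]"
# kinds == ["EMAIL", "CARD"]
```

Returning the list of detected kinds alongside the cleaned text lets the caller log that a redaction happened without storing the sensitive value itself.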

How to Adopt AI-Native Development Without Breaking Quality

Product teams can adopt AI-native development safely by starting with low-risk workflows, adding guardrails, and expanding only when quality improves.

A gradual approach works better than trying to automate the whole SDLC at once.

Start with Discovery, Internal Tools, and Low-Risk Prototypes

Begin with workflows where the upside is clear and the risk is manageable.

Good starting points include:

Discovery summaries

Internal dashboards

Admin tools

Support triage

Product analytics assistants

Low-risk prototypes

Documentation drafts

Test case generation

Avoid starting with regulated decisioning, payment flows, clinical recommendations, legal conclusions, or production security automation unless your governance model is already mature.

Create Guardrails for PMs, Designers, and Engineers

A simple adoption process can work well:

Define approved AI use cases and restricted data.

Choose tools with access control and audit logs.

Require human review for code, UX, legal, security, and compliance outputs.

Track quality, cycle time, defect rates, rework, and user impact.

Expand only when the workflow performs reliably.

This keeps the team focused on outcomes, not just novelty.
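The last two steps, tracking metrics and expanding only when the workflow performs reliably, can be expressed as an explicit check. This is a sketch under stated assumptions: the metric names, the `WorkflowMetrics` class, and the rule "faster and no worse on quality" are hypothetical, and the numbers are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class WorkflowMetrics:
    cycle_time_days: float  # idea to release
    defect_rate: float      # defects per release
    rework_rate: float      # share of work redone, 0.0 to 1.0


def ready_to_expand(baseline: WorkflowMetrics, pilot: WorkflowMetrics) -> bool:
    """Expand only if the pilot is faster and no worse on quality."""
    faster = pilot.cycle_time_days < baseline.cycle_time_days
    quality_held = (
        pilot.defect_rate <= baseline.defect_rate
        and pilot.rework_rate <= baseline.rework_rate
    )
    return faster and quality_held


# Made-up numbers for illustration only.
before = WorkflowMetrics(cycle_time_days=14.0, defect_rate=3.0, rework_rate=0.20)
fast_but_buggy = WorkflowMetrics(cycle_time_days=9.0, defect_rate=5.0, rework_rate=0.20)
fast_and_clean = WorkflowMetrics(cycle_time_days=9.0, defect_rate=2.0, rework_rate=0.15)

ready_to_expand(before, fast_but_buggy)  # False: speed came at the cost of defects
ready_to_expand(before, fast_and_clean)  # True
```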

Use Recognized Controls Where They Fit

Framework alignment helps teams avoid vague AI policies.

Use NIST AI RMF for AI risk management, ISO 27001 for security management, SOC 2 for trust controls, GDPR/UK-GDPR for personal data, HIPAA for US healthcare, and PCI DSS for payment environments.

For EU financial teams, DORA should also be part of the review because it focuses on operational resilience, ICT risk, third-party risk, and disruption response.

Choosing the Right AI-Native Development Platform

The right AI-native development platform is the one your team can actually govern, integrate, and scale.

A polished demo matters less than workflow fit, security, observability, and adoption by real users.

Evaluation Criteria

Prioritize platforms that support:

Secure data handling

SSO and role-based access

Repository integrations

Design tool support

CI/CD compatibility

Cloud region options

Monitoring and alerting

Human review gates

Exportable audit logs

Clear vendor data terms

For engineering-heavy teams, review integrations with tools such as GitHub, GitLab, Jira, Linear, Figma, AWS, Azure, Google Cloud, Cursor, Gemini Enterprise, and analytics platforms.
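One lightweight way to compare candidates against the evaluation criteria above is a gap check. The sketch below assumes the team records each vendor's supported capabilities as a set of labels; the identifiers and the `missing_criteria` helper are hypothetical.

```python
# Criteria mirror the evaluation list above; the identifiers are hypothetical.
REQUIRED_CRITERIA = [
    "secure_data_handling",
    "sso_and_rbac",
    "repository_integrations",
    "design_tool_support",
    "ci_cd_compatibility",
    "cloud_region_options",
    "monitoring_and_alerting",
    "human_review_gates",
    "exportable_audit_logs",
    "clear_vendor_data_terms",
]


def missing_criteria(supported: set[str]) -> list[str]:
    """Return the required criteria a platform does not cover, in list order."""
    return [c for c in REQUIRED_CRITERIA if c not in supported]


# A made-up vendor that lacks two capabilities.
vendor_a = set(REQUIRED_CRITERIA) - {"exportable_audit_logs", "cloud_region_options"}
gaps = missing_criteria(vendor_a)
# gaps == ["cloud_region_options", "exportable_audit_logs"]
```

A gap list is easier to discuss with security and compliance reviewers than a single score, because it shows exactly which control is absent.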

Questions to Ask Before Buying or Building

Before choosing a platform, ask:

Does it support our compliance model?

Does our data train vendor models?

Where are prompts, logs, and outputs stored?

Can we control access by role?

How does human approval work?

Can we export audit evidence?

How are generated outputs tested?

What happens if an agent makes a bad change?

Do we need a commercial platform, custom internal tooling, or a hybrid model?

A team already investing in back-end development services may benefit from custom AI workflow orchestration around existing APIs, databases, and internal systems.

Concluding Remarks

AI-native development platforms can help product teams move from idea to release faster, but speed only helps when the work is secure, testable, and governed.

For US, UK, German, and EU teams, the winning approach is not blind automation. It is a practical operating model where AI accelerates discovery, delivery, and documentation while humans stay accountable for product quality, risk, and customer trust.

Want to see where AI-native development can safely speed up your roadmap? Start with Mak It Solutions’ services page or request a readiness assessment for your next SaaS, cloud, mobile, or AI product initiative.

Key Takeaways

AI-native development platforms support discovery, prototyping, coding, testing, deployment, monitoring, and governance.

Product teams in the US, UK, Germany, and the EU need region-aware controls for HIPAA, PCI DSS, UK-GDPR, GDPR/DSGVO, BaFin, DORA, and the EU AI Act.

Human-in-the-loop workflows are essential for quality, safety, auditability, and trust.

The safest adoption path starts with low-risk workflows before moving into regulated or customer-impacting systems.

The right platform should integrate with your product stack, cloud environment, and governance model.

FAQs

Q: Can product managers use AI-native development platforms without coding experience?

A: Yes. Product managers can use AI-native platforms to summarize research, draft user stories, generate prototype flows, explore backlog options, and create testable MVP concepts. They do not need to become full-time engineers, but code, architecture, security, and compliance decisions should still involve experienced technical reviewers.

Q: Are AI-native platforms replacing software engineers?

A: They are changing the role more than replacing it. Engineers spend less time on repetitive scaffolding and more time reviewing architecture, validating AI-generated code, improving systems, and solving harder product problems. In regulated environments, engineers become even more important because someone must verify security, reliability, data handling, and auditability.

Q: What products are best suited for AI-native development workflows?

A: AI-native workflows work well for SaaS dashboards, internal tools, admin portals, mobile MVPs, workflow automation, analytics products, customer support tools, and prototype-heavy discovery. They are less suitable as a first experiment for high-risk clinical, financial, safety-critical, or legal decision systems unless strong governance is already in place.

Q: How do AI-native platforms handle sensitive customer or healthcare data?

A: Good platforms support access controls, encryption, audit logs, retention settings, vendor agreements, and restrictions on model training. For US healthcare, teams should review HIPAA obligations and business associate requirements. For UK and EU users, teams should review UK-GDPR, GDPR/DSGVO, data residency, processor terms, and whether personal data is sent to external AI services.

Q: Should enterprises buy an AI-native platform or build internal AI development tools?

A: Enterprises should buy when they need speed, standard integrations, and lower setup effort. They should build when data sensitivity, governance, proprietary workflows, or integration complexity require deeper control. Many mature teams use a hybrid model: commercial tools for general productivity and custom internal agents for sensitive workflows.