AI Agent Identity Management: GCC Security Guide

AI agent identity management gives every AI agent a unique identity, owner, permission set, lifecycle, and audit trail. For GCC businesses in Saudi Arabia, the UAE, and Qatar, it helps secure AI workflows while supporting compliance, accountability, and trust.

As agentic AI moves into fintech, government services, logistics, healthcare, e-commerce, and cloud operations, identity can no longer be treated as a back-office IT detail. If an AI agent can call APIs, update records, analyze customer data, or trigger workflows, it needs the same governance discipline as any other powerful digital identity.

Why AI Agent Identity Management Matters Now

Across Riyadh, Dubai, Abu Dhabi, Doha, and Jeddah, AI agents are moving beyond chatbot-style support. They can now connect with SaaS platforms, cloud services, databases, payment workflows, customer support systems, and business intelligence tools.

That creates a simple security question: who, or what, performed the action?

The risk begins when an AI agent uses a shared employee login, unmanaged API key, or long-lived service account. Once that happens, accountability becomes weak. A regulated bank, for example, may struggle to prove whether a transaction review was completed by an employee, a bot, or an autonomous workflow.

AI agent identity management solves this by assigning every agent:

A unique digital identity

A named human owner

A clear business purpose

Least-privilege permissions

Session and token controls

Logs, reviews, and retirement rules

For GCC leaders, this is not just a technical upgrade. It is the bridge between AI innovation and secure enterprise identity and access management.

What Is AI Agent Identity Management?

AI agent identity management means treating AI agents as governed enterprise identities inside IAM. They should sit alongside employees, contractors, applications, workloads, service accounts, and privileged users.

If your organization is already modernizing platforms through secure web development services or cloud-native applications, agent identity should be part of the same governance model from day one.

AI Agent Identity vs Human Identity

Human identities usually map to real people. AI agent identities map to software that may act continuously, at machine speed, across multiple systems.

That difference matters.

A person may log in, complete a task, and log out. An AI agent may run in the background, consume data, call APIs, generate decisions, or trigger business actions without constant human supervision.

Because of that, AI agents need different controls than human users:

| Area | Human Identity | AI Agent Identity |
|---|---|---|
| Ownership | Individual user | Human business and technical owner |
| Access pattern | Session-based | Continuous or event-driven |
| Speed | Human-paced | Machine-speed |
| Risk | Misuse, phishing, privilege abuse | Token sprawl, runaway actions, hidden automation |
| Control need | MFA, role access, reviews | Least privilege, short-lived credentials, logs, lifecycle controls |

How It Connects to Non-Human Identity Management

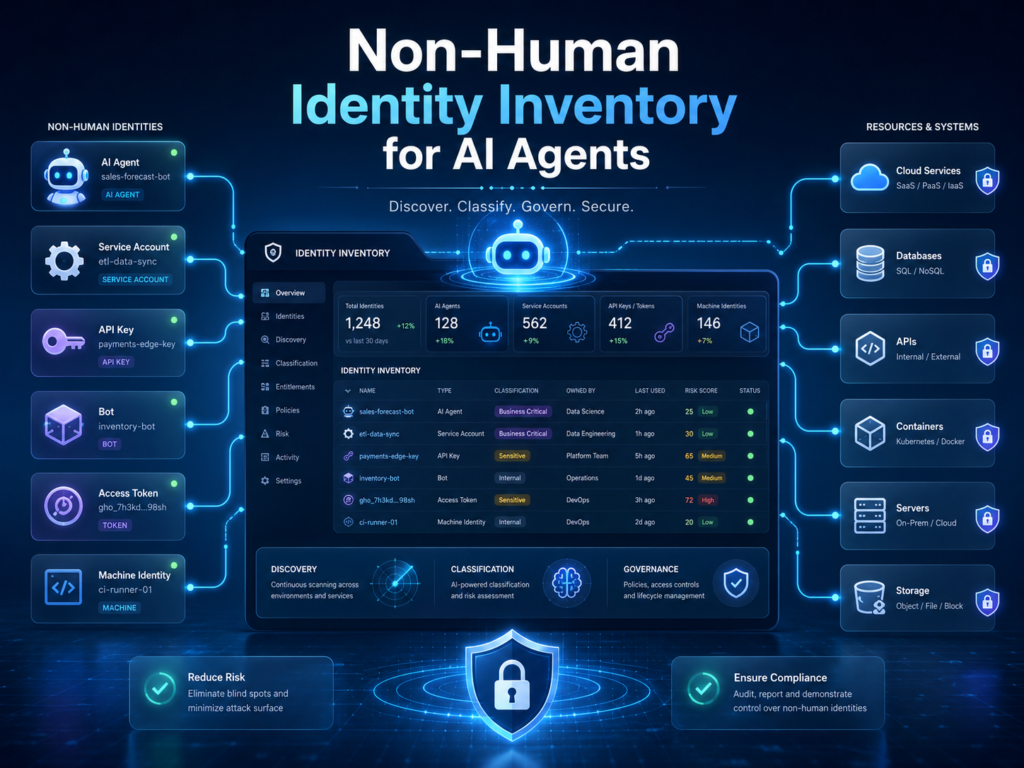

AI agents are part of non-human identity management. This includes service accounts, API keys, OAuth tokens, bots, automation scripts, workloads, and machine identities.

In practice, AI security, machine identity security, privileged access management, and Zero Trust architecture now need to work together. Treating each area separately leaves gaps attackers can exploit.

Why AI Agents Create New Identity Security Risks

Shadow AI Agents and API Token Sprawl

Shadow AI agents appear when teams connect tools quickly without central approval. A Dubai e-commerce team, for example, may connect an AI assistant to CRM, inventory, email marketing, and payment dashboards.

That may improve productivity. But without token governance, one leaked API key can expose customer records, order data, discount controls, or refund workflows.

The risk is not only that an AI agent has access. The bigger issue is that the business may not know:

Which agents exist

Who approved them

What data they can access

Whether their permissions are still needed

How to shut them down during an incident

Shared Credentials Break Accountability

Shared credentials hide who or what performed an action. This is especially dangerous for banks, fintech platforms, healthcare systems, government portals, and cloud teams.

In Saudi Arabia, SAMA’s Cyber Security Framework focuses on structured cybersecurity controls and maturity assessment for regulated financial institutions. That makes traceability, access control, and governance evidence essential for financial-sector environments.

A Riyadh fintech using AI for onboarding should not allow an AI workflow to operate through a generic employee account. Each agent should have its own identity, scoped permissions, and logs that show what it accessed and why.

High-Risk Sectors Need Stronger Controls

AI agent identity failures can create different problems across sectors:

Banks and fintech: fraud review gaps, payment workflow abuse, weak audit trails

Healthcare: exposure of sensitive patient records

Government: damage to public trust and citizen-service integrity

Cloud platforms: unmanaged access across workloads, APIs, and data regions

E-commerce: customer data leakage, unauthorized refunds, or inventory manipulation

For cloud teams, regional infrastructure also matters. AWS lists Middle East regions including Bahrain and the UAE, Google Cloud opened its Doha region, and Azure supports regional data residency planning through Azure geographies.

Compliance-Ready AI Access Control in Saudi Arabia, UAE, and Qatar

Saudi Arabia

In Saudi Arabia, AI agent identity management should support regulated-sector cybersecurity, data governance, and operational accountability.

A practical KSA example: a Riyadh fintech using AI to review onboarding documents should give each AI agent a separate identity, restrict access to approved datasets, log every action, and review permissions before expanding the workflow.

For financial entities, this supports SAMA-style cybersecurity maturity and control evidence. For public-sector and data-heavy organizations, it also helps align AI adoption with stronger governance expectations.

UAE

In the UAE, identity governance must fit digital trust, privacy, and regulated financial environments such as ADGM and DIFC.

UAE Pass is described as the UAE’s secure national digital identity for citizens, residents, and visitors, with services that include digital access and document signing.

Enterprises can apply the same trust principle internally: every digital actor should be identifiable, accountable, and governed.

For a Dubai e-commerce brand, that means an AI agent should not freely access customer profiles, marketing platforms, payment data, and refund tools without policy-based controls.

Qatar

In Qatar, AI identity governance should connect to technology risk, IAM, logging, and operational controls.

QCB technology risk material references effective identity and access management processes for banks, and QCB also requires financial services organizations to maintain necessary technical security controls.

A Doha SME using regional cloud workloads can support business and data governance goals by limiting AI agents to approved systems, approved regions, and approved workflows.

How to Govern Non-Human Identities and AI Agents Together

The safest approach is to govern every non-human identity in one inventory. That includes:

AI agents

Service accounts

API keys

OAuth tokens

Automation bots

Cloud workload identities

AI coding assistants

Scripts and scheduled jobs

Agents embedded in business apps

Teams exploring AI cost control for GCC cloud environments should track identity risk alongside spend. Unused AI agents and forgotten tokens can create both cost waste and security exposure.

Build a Unified Inventory

A useful inventory should answer five questions:

Which AI agents and non-human identities exist?

Who owns each one?

What systems and data can they access?

What permissions do they hold?

When does access expire or require review?

This inventory helps security teams support SOC alerts, compliance reviews, audits, and incident response.
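As a rough illustration, the five questions above can map onto one record per identity. The sketch below is a minimal example, not a product schema: the `NonHumanIdentity` class, its field names, and the sample entries are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional, Tuple


@dataclass(frozen=True)
class NonHumanIdentity:
    """One row in a hypothetical unified inventory."""
    name: str                     # which identity exists
    kind: str                     # e.g. "ai_agent", "service_account", "api_key"
    owner: Optional[str]          # who owns it (None means orphaned)
    systems: Tuple[str, ...]      # which systems and data it can reach
    permissions: Tuple[str, ...]  # which permissions it holds
    review_by: Optional[date]     # when access expires or needs review


def needs_attention(inventory, today):
    """Return names of identities with no owner, no review date, or an overdue review."""
    flagged = []
    for item in inventory:
        if item.owner is None or item.review_by is None or item.review_by < today:
            flagged.append(item.name)
    return flagged


# Sample data, invented for illustration only.
inventory = [
    NonHumanIdentity("onboarding-agent", "ai_agent", "a.rahman",
                     ("kyc-db",), ("read",), date(2025, 3, 31)),
    NonHumanIdentity("legacy-crm-key", "api_key", None,
                     ("crm",), ("read", "write"), None),
]

print(needs_attention(inventory, today=date(2025, 1, 15)))  # ['legacy-crm-key']
```

Even a simple check like this gives SOC and audit teams a starting list of identities that nobody owns or that nobody has reviewed.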

Assign Owners, Permissions, Expiry Dates, and Review Cycles

Every AI agent should have:

A named business owner

A technical owner

An approved purpose

A defined permission set

An expiry date

A review cycle

Logging requirements

Deprovisioning rules

For GCC businesses, this turns AI governance from a policy document into practical evidence.
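One way to make that evidence enforceable is to refuse registration of any agent whose record is incomplete. The following is a minimal sketch under stated assumptions: the field names in `REQUIRED_FIELDS` simply mirror the list above and are hypothetical, not a standard schema.

```python
# Hypothetical field names mirroring the governance attributes listed above.
REQUIRED_FIELDS = (
    "business_owner",
    "technical_owner",
    "purpose",
    "permissions",
    "expires_on",
    "review_cycle_days",
    "log_destination",
    "deprovisioning_rule",
)


def missing_fields(record):
    """Return the required governance fields that are absent or empty in a record."""
    return [field for field in REQUIRED_FIELDS if not record.get(field)]


# Illustrative record with two gaps: no technical owner and no expiry date.
draft = {
    "business_owner": "Head of Onboarding",
    "purpose": "Review onboarding documents",
    "permissions": ["read:onboarding_docs"],
    "review_cycle_days": 90,
    "log_destination": "central-siem",
    "deprovisioning_rule": "disable on project closure",
}

print(missing_fields(draft))  # ['technical_owner', 'expires_on']
```

A registration workflow could block approval until this list is empty, which leaves a record of who supplied each attribute and when.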

Enterprise IAM Architecture for AI Agent Identity Management

Use Zero Trust for AI Agents

Zero Trust for AI agents means never assuming an agent is safe because it sits inside the network.

Verify the agent, session, workload, policy, action, and data request every time. This is especially important when an agent can move between SaaS platforms, cloud services, internal databases, and business workflows.

Apply Least Privilege by Default

AI agents should start with no access by default. Access should be granted only for a specific purpose and revoked automatically when the task ends.

For example, a customer-support AI agent may read ticket history. It should not export full databases, change payment details, approve refunds, or access HR records unless those actions are explicitly approved and controlled.
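The default-deny idea can be sketched in a few lines. This is an illustration only: the `ALLOWED` table, the agent name, and the action and resource labels are assumptions, and a real deployment would enforce this in the IAM or policy engine rather than in application code.

```python
# Hypothetical allow-list: agent name -> set of (action, resource) pairs it may perform.
ALLOWED = {
    "support-agent": {("read", "ticket_history")},
}


def is_allowed(agent, action, resource):
    """Default deny: permit only explicitly granted (action, resource) pairs."""
    return (action, resource) in ALLOWED.get(agent, set())


print(is_allowed("support-agent", "read", "ticket_history"))  # True
print(is_allowed("support-agent", "approve", "refunds"))      # False
print(is_allowed("unknown-agent", "read", "ticket_history"))  # False
```

Because unknown agents and unlisted actions both fall through to `False`, new capabilities have to be added deliberately instead of being inherited by accident.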

For complex builds, Mak It Solutions’ mobile app development services and business intelligence guidance can support secure workflow design.

Strengthen PAM, Logging, and Anomaly Detection

Privileged access management should cover AI agents that can perform sensitive actions. Logs should show:

What the agent did

When it acted

What data it accessed

Which system approved the action

Which human owner is accountable

Whether the action matched expected behavior

Anomaly detection can flag unusual activity, such as an AI agent accessing new systems, exporting large datasets, using stale tokens, or acting outside business hours.
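A simple rule-based version of those checks might look like the sketch below. The `AgentEvent` fields and every threshold (row limit, token age, business hours) are hypothetical values chosen for illustration; production systems typically rely on a SIEM or behavioral baselines instead of fixed rules.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class AgentEvent:
    """A hypothetical structured log entry for one agent action."""
    agent: str
    owner: str                 # accountable human owner
    system: str                # system the agent touched
    action: str
    rows_exported: int
    token_issued_at: datetime
    timestamp: datetime


def anomaly_flags(event, known_systems, max_rows=10_000,
                  max_token_age=timedelta(hours=1), business_hours=(8, 18)):
    """Return rule-based flags for one event; all thresholds are illustrative."""
    flags = []
    if event.system not in known_systems:
        flags.append("new_system")
    if event.rows_exported > max_rows:
        flags.append("large_export")
    if event.timestamp - event.token_issued_at > max_token_age:
        flags.append("stale_token")
    start, end = business_hours
    if not (start <= event.timestamp.hour < end):
        flags.append("outside_business_hours")
    return flags


event = AgentEvent(
    agent="support-agent", owner="s.khalid", system="hr-db", action="export",
    rows_exported=50_000,
    token_issued_at=datetime(2025, 1, 15, 20, 0),
    timestamp=datetime(2025, 1, 15, 23, 0),
)
print(anomaly_flags(event, known_systems={"ticketing"}))
# ['new_system', 'large_export', 'stale_token', 'outside_business_hours']
```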

How GCC Enterprises Should Start Implementing AI Agent Identity Management

Discover All AI Agents and Non-Human Identities

Start with discovery across SaaS, cloud, code repositories, automation tools, developer environments, and internal platforms.

Include AI assistants used by developers, sales teams, finance, marketing, support, HR, and operations. Many risky agents are not created by security teams; they appear when business teams connect tools for speed.

Map Agents to Human Owners and Workflows

Next, map each AI agent to a human owner and workflow.

A Dubai mobile commerce team, for example, may allow an AI agent to update product catalog descriptions. That does not mean the same agent should approve refunds, change pricing rules, or access payment dashboards.

Ownership keeps responsibility clear when the agent behaves unexpectedly.

Apply Access Controls and Compliance Evidence

Apply practical controls first:

Least privilege

Short-lived credentials

Token rotation

PAM for sensitive workflows

Centralized logging

Anomaly detection

Quarterly access reviews

Automated expiry dates

Deprovisioning after project closure

For broader digital maturity, explore Mak It Solutions’ GCC cybersecurity startup guide, AI agent IAM guide, and Arabic web design strategy.

Common AI Agent Identity Management Mistakes

Even mature teams can get agent identity wrong. The most common mistakes are simple, but costly.

Using Employee Accounts for AI Workflows

An AI agent should not run through a personal employee login. If the employee leaves, changes role, or loses access, the agent’s permissions become messy and hard to audit.

Giving Agents Permanent Access

Long-lived credentials are convenient, but they create risk. Short-lived tokens and expiry dates reduce the damage if credentials are leaked or misused.
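To show why expiry limits the damage, here is a minimal sketch of a credential that stops working after a short time-to-live. The `issue_token` and `is_valid` helpers and the 15-minute default are assumptions for illustration; in practice the identity provider or cloud platform issues and validates such tokens.

```python
import secrets
from datetime import datetime, timedelta, timezone


def issue_token(agent, ttl_minutes=15, now=None):
    """Issue a random token that expires after ttl_minutes (hypothetical helper)."""
    now = now or datetime.now(timezone.utc)
    return {
        "agent": agent,
        "value": secrets.token_urlsafe(32),
        "expires_at": now + timedelta(minutes=ttl_minutes),
    }


def is_valid(token, now=None):
    """A token is valid only strictly before its expiry time."""
    now = now or datetime.now(timezone.utc)
    return now < token["expires_at"]


issued = datetime(2025, 1, 15, 9, 0, tzinfo=timezone.utc)
token = issue_token("onboarding-agent", ttl_minutes=15, now=issued)

print(is_valid(token, now=issued + timedelta(minutes=14)))  # True
print(is_valid(token, now=issued + timedelta(minutes=15)))  # False
```

A leaked token of this kind is only useful to an attacker for minutes, whereas a long-lived key stays useful until someone notices and rotates it.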

Skipping Business Ownership

Technical ownership is not enough. A business owner should approve why the agent exists, what it is allowed to do, and whether it still supports a valid workflow.

Treating AI Agents Like Basic Service Accounts

AI agents can reason, plan, call tools, and act across systems. That makes them more dynamic than traditional service accounts. Their governance should be stricter, not lighter.

Concluding Remarks

AI will scale faster than manual security reviews. That is why AI agent identity management needs to become part of enterprise IAM, cloud security, and compliance planning now.

For GCC CISOs, CIOs, compliance teams, and digital transformation leaders, the goal is not to slow AI adoption. The goal is to make AI adoption secure, auditable, and trusted.

The right time to assess AI agent identity management is before incidents, audits, or uncontrolled automation growth.

Mak It Solutions can help your GCC team assess AI agent identity risk, secure non-human identities, and design compliant digital workflows. Book a consultation through the contact page or explore Mak It Solutions’ company expertise to request a custom GCC strategy.

FAQs

Q : How is AI agent identity different from service account governance?

A : Service account governance usually focuses on machine accounts used by applications, scripts, or backend systems. AI agent identity management goes further because agents may reason, act across tools, trigger workflows, and make decisions based on business context. Each AI agent needs a named owner, scoped permissions, review evidence, and activity logs.

Q : Should every AI agent have a human owner?

A : Yes. Every AI agent should have a human owner because accountability cannot sit with software alone. The owner should approve the agent’s purpose, access level, data use, and review cycle. This helps security and compliance teams answer who approved the agent and who must respond if it behaves unexpectedly.

Q : What access permissions should AI agents receive by default?

A : AI agents should receive no access by default. Access should be granted only after business approval, risk review, and technical validation. A customer-support AI agent may read ticket history, but it should not export full databases, change payment details, or approve refunds unless those actions are explicitly controlled.

Q : How often should AI agent identities be reviewed?

A : AI agent identities should be reviewed at least quarterly, and more often for privileged, financial, healthcare, or government workflows. Reviews should check whether the agent is still needed, whether permissions remain appropriate, and whether logs show unusual behavior.

Q : Can AI agent identity management support SAMA, TDRA, and QCB compliance audits?

A : Yes. AI agent identity management can support audits by producing evidence of unique identities, human ownership, access approvals, least privilege, logs, reviews, and deprovisioning. It helps show that AI-enabled workflows are governed through effective identity and access management, not hidden behind shared credentials or unmanaged automation.