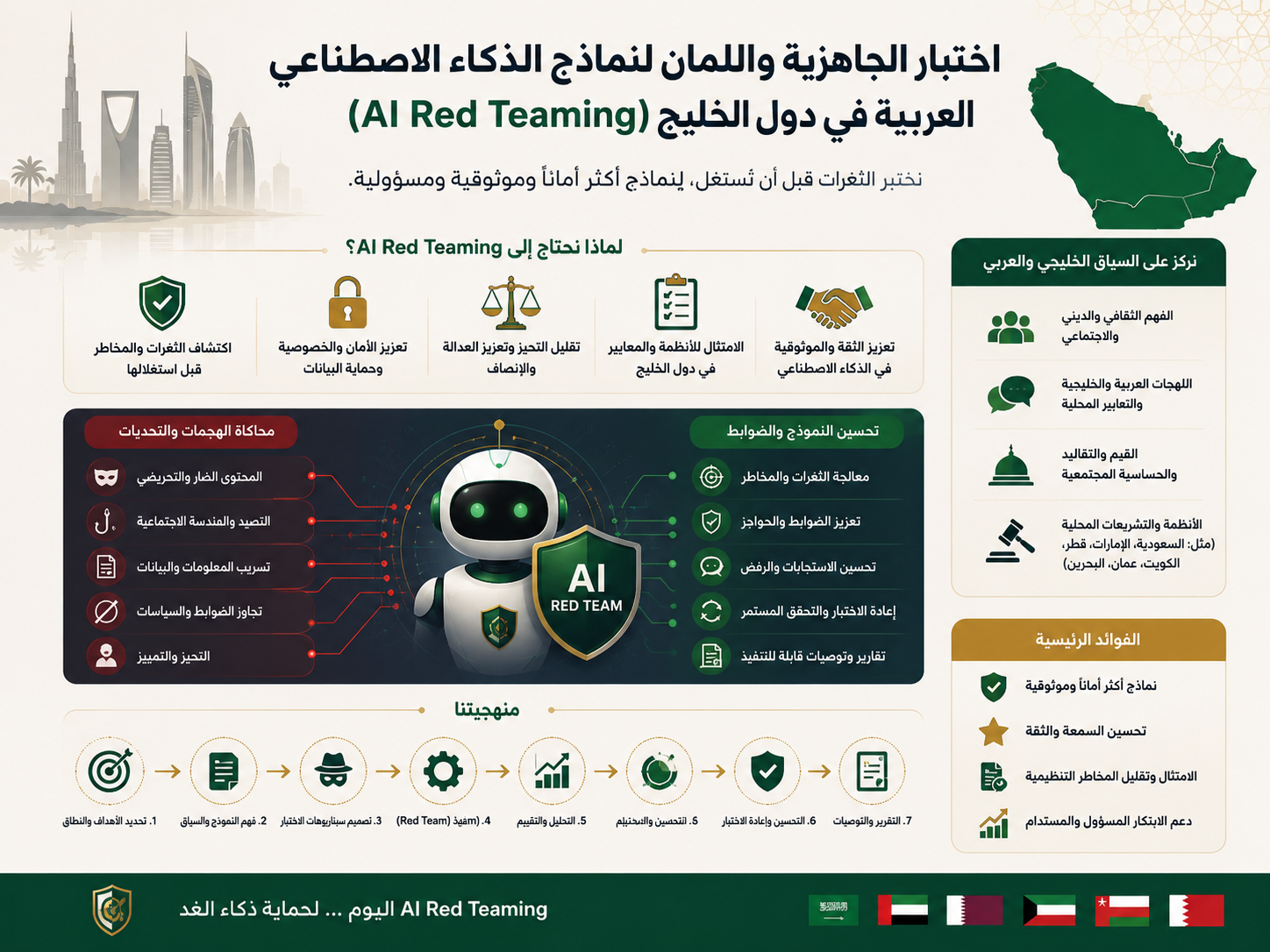

AI Red-Teaming for Arabic Bots in GCC

AI red-teaming for Arabic bots means stress-testing an Arabic chatbot before launch to find unsafe replies, dialect errors, privacy risks, hallucinations, and compliance gaps. For GCC businesses in Saudi Arabia, the UAE, and Qatar, it helps protect customer trust, brand reputation, and regulated digital experiences before real users interact with the bot.

Why AI Red-Teaming for Arabic Bots Matters in the GCC

AI red-teaming for Arabic bots is no longer a “nice-to-have” test before launch. In Riyadh, Dubai, Abu Dhabi, Doha, and Jeddah, Arabic-speaking customers expect bots to understand Gulf dialects, respect culture, protect private data, and respond safely in sensitive moments.

Arabic bots also fail differently from English bots.

A support chatbot may confuse Saudi Najdi phrasing with a complaint. It may misunderstand Emirati Arabic in an e-commerce return request. Or it may give an overconfident answer about a financial product in Qatar.

For GCC companies, the risk is not only technical. It is cultural, emotional, regulatory, and commercial.

That is why teams building customer-facing AI should combine secure architecture, Arabic UX, testing workflows, and compliance thinking from day one. A bot connected to a website, CRM, mobile app, or SaaS dashboard should be tested with the same discipline used in professional web development services, mobile app development, and business intelligence services.

What Is AI Red-Teaming for Arabic Bots?

AI red-teaming for Arabic bots means deliberately trying to make the bot fail before real users do. Testers use Arabic prompts, Gulf dialects, code-switching, sensitive scenarios, jailbreak attempts, and compliance-heavy questions to uncover weak points.

The goal is not to embarrass the model. The goal is to protect the business.

A good red-team test checks whether the bot.

Leaks personal or customer data

Invents company policies

Gives risky medical, legal, or financial guidance

Mishandles religious or cultural topics

Follows malicious instructions

Fails to escalate sensitive cases to a human agent

For Arabic-speaking audiences, testing should include Modern Standard Arabic, Saudi Arabic, Emirati Arabic, Qatari expressions, Arabizi, English-Arabic mixed messages, spelling mistakes, and voice-to-text errors.

This matters even more for fintech, government services, health platforms, logistics, SaaS, and e-commerce brands across the GCC.

Key Risks Arabic Bots Must Be Tested For

The first major risk is misunderstanding intent.

A customer in Jeddah asking about a delayed shipment may use casual Arabic that looks incomplete to the model. A Dubai shopper may switch between English product names and Arabic complaint language. A Doha SME user may ask about invoice data residency using a mix of business English and Arabic.

The second risk is unsafe compliance guidance.

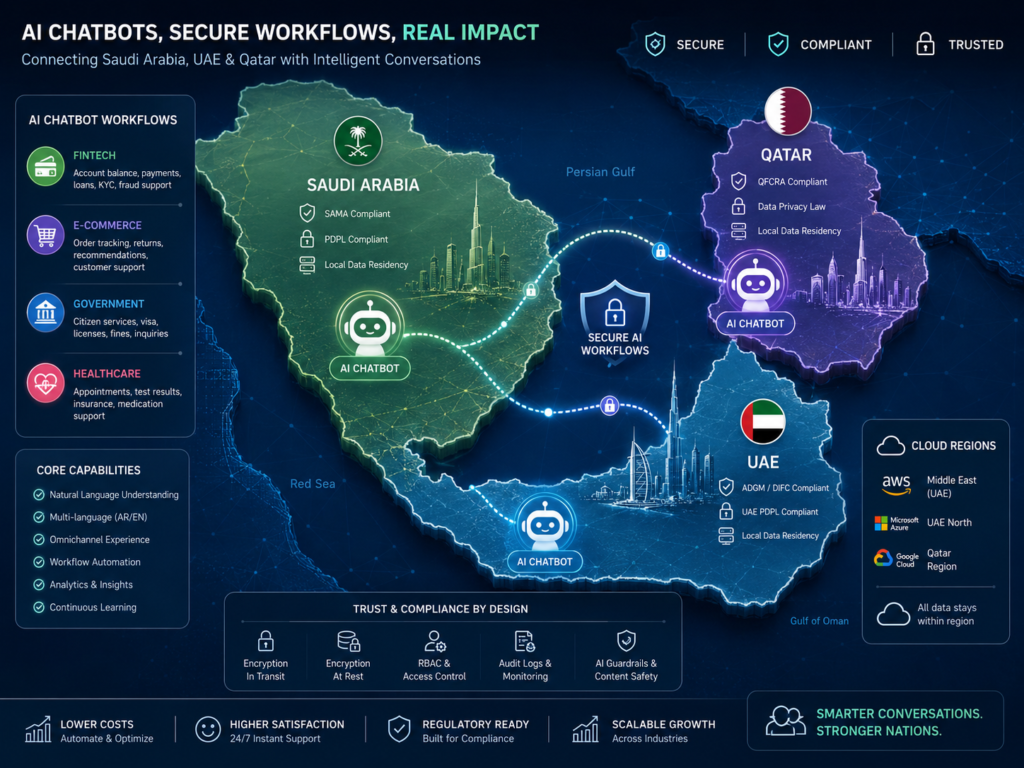

A Riyadh fintech bot should not casually answer questions about account eligibility, KYC, payment disputes, or complaints without approved knowledge and escalation rules. Saudi financial teams should align sensitive workflows with SAMA expectations, while Qatar financial services should consider QCB information security guidance. UAE teams may also need to think about TDRA digital service expectations and ADGM data protection where relevant.

The third risk is hallucination.

Arabic bots may confidently invent refund policies, government requirements, bank rules, delivery timelines, or support procedures. For a GCC customer, a wrong Arabic answer can feel more official because it appears localized and personalized.

That makes the mistake harder to ignore and more damaging for the brand.

How to Test Arabic Bots Before Launch

Start with a clear risk map.

Define what the bot can answer, what it must refuse, what it must escalate, and which systems it can access. A support bot for an e-commerce store should not behave like a legal advisor, doctor, or banking officer.

Next, build Arabic test sets.

Include.

Formal Arabic

Gulf dialects

Angry customer messages

Vague questions

Typos and spelling variation

Arabizi

English-Arabic code-switching

Sensitive prompts

Jailbreak attempts

Test both normal users and adversarial users. For example, ask the bot to reveal another customer’s order, bypass refund rules, summarize private tickets, or ignore previous safety instructions.

Then test retrieval quality.

If your bot uses a knowledge base or RAG system, verify that answers come from approved content, not model guesses. This is where strong backend design, APIs, and data controls matter.

Depending on the stack, teams can pair AI testing with Node.js development, Python development, or Laravel development.

Finally, document the evidence.

Keep logs of failed prompts, fixed responses, escalation rules, final approvals, and known limitations. In practice, this gives product owners, compliance teams, and executives a clearer view of launch readiness.

GCC Examples: Saudi Arabia, UAE, and Qatar

In Saudi Arabia, imagine a Riyadh fintech startup launching an Arabic onboarding bot. AI red-teaming for Arabic bots would test whether the bot avoids unauthorized financial advice, escalates KYC issues, and keeps customer data protected in line with SAMA-focused governance.

In the UAE, a Dubai e-commerce brand may use an Arabic bot inside a mobile app. Testing should cover refund abuse, rude language, Emirati dialect, product availability, and escalation to human agents. If the brand is scaling fast, pairing bot testing with e-commerce solutions and React Native development can improve the full customer experience.

In Qatar, a Doha SME may run its AI assistant on cloud infrastructure while considering data residency. Testing should confirm where data flows, how logs are stored, and whether cloud choices such as GCP Doha, AWS Bahrain, or Azure UAE Central fit the business risk profile.

Best Practices for Safer Arabic Bot Launches

Use approved Arabic knowledge sources, not loose prompts. Add human approval for high-risk actions. Limit API access with role-based permissions. Keep the Arabic tone warm, respectful, and clear.

Also test boundaries carefully around.

Financial guidance

Medical advice

Legal questions

Religious topics

Personal data

Customer complaints

Refunds, disputes, and cancellations

Do not launch once and forget.

AI red-teaming for Arabic bots should continue after release because real users will expose new dialects, new abuse patterns, and new business edge cases. Connect bot analytics to dashboards, review failure patterns, and improve content regularly.

For visibility, combine product testing with search engine optimization and digital marketing services so your Arabic AI experience is both discoverable and trustworthy.

Concluding Remarks

AI red-teaming for Arabic bots helps GCC businesses catch safety, dialect, privacy, and compliance issues before customers do. For teams in Saudi Arabia, the UAE, and Qatar, it is one of the most practical ways to reduce risk while building better Arabic digital experiences. Mak It Solutions

Planning to launch an Arabic chatbot in the GCC? Contact Mak It Solutions to design, test, and improve a safer, more reliable AI experience before your bot reaches real customers.

FAQs

Q : Is AI red-teaming for Arabic bots necessary for Saudi fintech companies?

A : Yes. Saudi fintech bots may handle sensitive questions about payments, onboarding, KYC, complaints, and customer records. AI red-teaming for Arabic bots helps identify unsafe answers, privacy gaps, and escalation failures before launch.

Q : How does Arabic dialect testing help UAE customer service bots?

A : UAE users may speak formal Arabic, Emirati Arabic, English, Hindi-influenced English, or mixed Arabic-English in one conversation. Dialect testing helps the bot understand intent without sounding cold, robotic, or confused.

Q : What should Qatar businesses test before launching Arabic AI assistants?

A : Qatar businesses should test privacy handling, cloud data flows, Arabic accuracy, escalation rules, and risky advice. A Doha company using AI for invoices, customer support, or finance workflows should ensure the bot does not expose confidential information or invent policies.

Q : Can Arabic bot testing support Saudi Vision 2030 digital goals?

A : Yes. Saudi Vision 2030 encourages stronger digital services, but speed should not come at the cost of trust. AI red-teaming for Arabic bots can support safer automation, better customer experience, and more reliable AI adoption.

Q : How often should GCC companies red-team Arabic bots?

A : GCC companies should red-team before launch, after major model updates, after knowledge-base changes, and whenever the bot gains access to new tools or customer data. Regulated teams should test more frequently and keep clear evidence of fixes and approvals.