AI Vulnerability Detection Workflow Guide

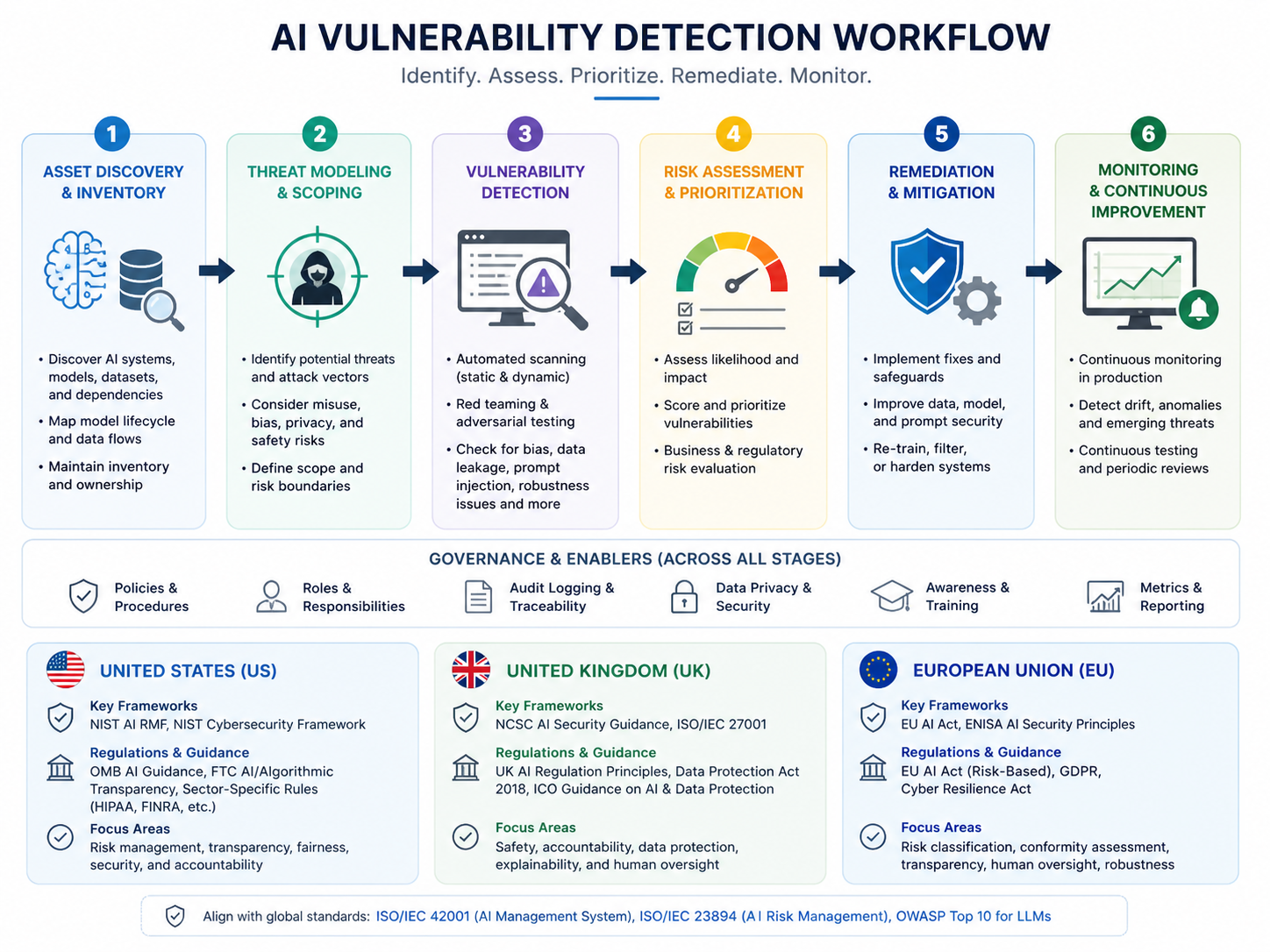

An AI vulnerability detection workflow helps engineering and security teams find, prioritize, fix, and verify software vulnerabilities without drowning developers in noisy alerts. It combines AI-assisted code analysis, secure SDLC practices, DevSecOps automation, ticket ownership, compliance evidence, and human review.

For US, UK, Germany, and EU software teams, the real value is not “faster scanning” alone. The goal is safer software delivery: clear risk context, accountable remediation, and proof that security issues were handled properly.

Modern SaaS, fintech, healthcare, cloud, and public-sector suppliers in cities like New York, London, Berlin, Austin, Manchester, and Munich are under pressure to ship quickly while keeping code secure. That pressure is real. GitHub reported more than 39 million leaked secrets on its platform in 2024, IBM’s 2025 breach research lists the global average data breach cost at USD 4.4 million, and Synopsys’s 2024 OSSRA findings reported that 74% of audited codebases contained high-risk open-source vulnerabilities.

What Is an AI Vulnerability Detection Workflow?

An AI vulnerability detection workflow is a repeatable process for finding, triaging, assigning, fixing, validating, and reporting software security issues across the development lifecycle.

It connects scanners, AI-assisted analysis, developer tools, ticketing systems, CI/CD pipelines, runtime signals, and audit evidence into one practical operating model.

Vulnerabilities rarely sit in one place. They can appear in:

Custom application code

APIs and authentication flows

Open-source dependencies

Containers and cloud settings

Infrastructure-as-code files

Hardcoded secrets

AI-generated pull requests

Third-party SDKs and packages

A proper workflow turns scattered alerts into clear action.

How AI Detects, Scores, and Routes Vulnerabilities

AI can analyze code patterns, dependency metadata, runtime exposure, exploitability, historical fixes, and business context. It then helps score findings by severity, asset importance, compliance impact, and ownership.

For example, a low-severity issue in an unused test package may be less urgent than a medium-severity authentication flaw in a public payment API. AI helps make that distinction faster by connecting signals from SAST, SCA, DAST, secrets scanning, SBOM, and runtime monitoring.
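
To make the scoring idea concrete, here is a minimal Python sketch of context-aware scoring. The weights, field names, and the `contextual_score` function are illustrative assumptions, not a standard formula or any vendor's model. The sketch uses a high-severity finding in an unused test package to show that context can outweigh raw scanner severity.

```python
from dataclasses import dataclass

# Hypothetical base scores and multipliers; tune these to your own risk model.
SEVERITY_BASE = {"low": 2.0, "medium": 5.0, "high": 7.5, "critical": 9.5}


@dataclass
class Finding:
    title: str
    severity: str            # severity as reported by the scanner
    internet_facing: bool    # is the affected asset publicly exposed?
    in_production: bool      # does the affected code run in production?
    handles_payments: bool   # stand-in for business and compliance impact


def contextual_score(finding: Finding) -> float:
    """Adjust scanner severity using asset context, capped at 10."""
    score = SEVERITY_BASE[finding.severity]
    if finding.internet_facing:
        score *= 1.5
    if finding.handles_payments:
        score *= 1.3
    if not finding.in_production:
        score *= 0.3
    return round(min(score, 10.0), 2)


test_pkg = Finding("Known CVE in unused test package", "high", False, False, False)
payment_auth = Finding("Auth flaw in public payment API", "medium", True, True, True)

for f in sorted([test_pkg, payment_auth], key=contextual_score, reverse=True):
    print(f.title, contextual_score(f))
```

With these assumed weights, the medium-severity payment API flaw is listed first even though the scanner rated the test-package issue higher.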

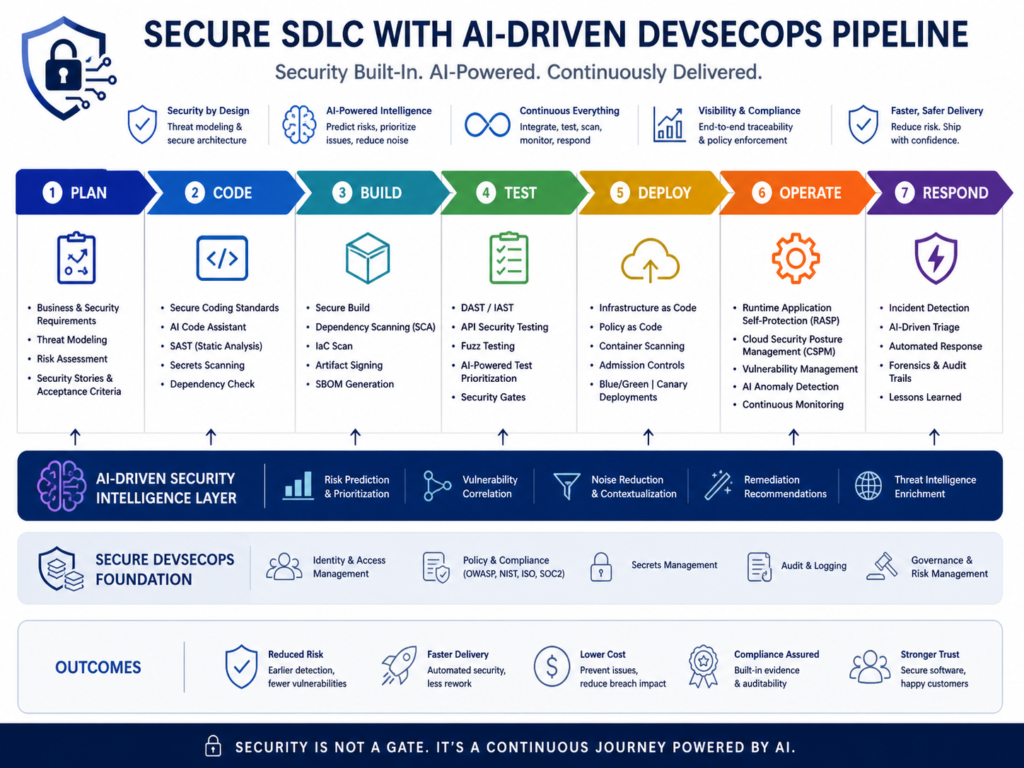

Where AI Fits Inside the Secure SDLC

AI can support the secure software development lifecycle from planning to production. Teams can use it for threat modeling, secure coding guidance, pull request checks, CI/CD gates, runtime monitoring, remediation suggestions, and compliance reporting.

NIST SSDF is a useful reference point here because it gives software producers a structured way to prepare, protect, produce, and respond during secure development. In practice, a SaaS company might use AI to draft threat models, scan code during pull requests, prioritize vulnerabilities in CI/CD, and create evidence for SOC 2 or ISO 27001 reviews.

AI Vulnerability Scanner vs. Full Workflow

An AI vulnerability scanner finds and explains security issues. A full workflow goes further.

It answers questions like:

Who owns this issue?

How urgent is it?

Does it block release?

What fix is recommended?

Who validates closure?

What evidence proves it was handled?

This distinction matters. Buying a scanner without workflow design often creates alert fatigue. Building the workflow first helps teams use tools like Snyk, Checkmarx, Veracode, Cycode, Apiiro, Invicti, OX Security, GitHub, GitLab, Jira, and CI/CD platforms more effectively.

Core Stages of a Secure SDLC with AI

A secure SDLC with AI usually includes requirements review, threat modeling, secure coding, automated scanning, vulnerability triage, remediation, validation, and audit reporting.

AI can speed up these stages, but it should not replace human security judgment. This is especially important for fintech, healthcare, e-commerce, and regulated SaaS platforms where vulnerabilities may affect privacy, uptime, payment data, or patient information.

Planning and Threat Modeling with AI Assistance

AI can help teams identify likely abuse cases, trust boundaries, data flows, and OWASP Top 10 risks before code is written. It can also compare product requirements against security controls such as OWASP ASVS, NIST SSDF, and internal secure coding standards.

For example, a healthcare platform in Boston may use AI to identify where protected health information enters the system. Security and engineering leaders should still validate the output against HIPAA and internal risk expectations.

AI Code Security Scanning in IDEs and Pull Requests

AI code security scanning works best when developers see findings inside their normal workflow. IDE plugins and pull request comments can flag insecure authentication, weak input validation, hardcoded secrets, vulnerable APIs, and risky dependencies before code is merged.
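
As a rough illustration of a pull request check for hardcoded secrets, the sketch below scans only the added lines of a unified diff. The two patterns and the `scan_added_lines` helper are assumptions for illustration; real secrets scanners use far larger rule sets plus entropy and validity checks. The AWS key shown is the example value from AWS documentation, not a live credential.

```python
import re

# Illustrative patterns only; production scanners ship many more rules.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "hardcoded_credential": re.compile(
        r"(password|secret|api_key|token)\s*=\s*[\"'][^\"']{8,}[\"']",
        re.IGNORECASE,
    ),
}


def scan_added_lines(diff_text: str) -> list:
    """Return (diff_line_number, rule_name) for each added line that matches a rule."""
    hits = []
    for number, line in enumerate(diff_text.splitlines(), start=1):
        # Only inspect lines added by the change; skip the '+++' file header.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                hits.append((number, name))
    return hits


SAMPLE_DIFF = "\n".join([
    "+++ b/config.py",
    '+AWS_KEY = "AKIAIOSFODNN7EXAMPLE"',
    '-password = "old-password-123"',
    "+timeout = 30",
    '+db_password = "hunter2-hunter2"',
])

print(scan_added_lines(SAMPLE_DIFF))
```

Limiting the scan to added lines keeps feedback focused on what the developer just changed, which is what makes findings feel relevant inside a pull request.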

Teams building JavaScript APIs, Python services, or mobile backends can connect this scanning layer with Node.js development services, Python development services, or custom software delivery standards.

CI/CD Security Gates for SAST, SCA, DAST, and Secrets

CI/CD security gates automate checks for SAST, SCA, DAST, secrets, containers, infrastructure-as-code, dependency risk, and license risk.

The best gates are risk-based. They block critical, exploitable issues while routing lower-risk findings into backlog triage.

For example, a London fintech may block a release if a payment API fails PCI DSS-related security checks. A low-risk internal dependency issue, however, may move into a sprint backlog with documented acceptance criteria.
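
A risk-based gate like this can be sketched in a few lines of Python. The blocking rule here (critical and exploitable, or high and above in PCI scope) and the `GateFinding` fields are assumptions chosen for illustration; each team would define its own policy.

```python
from dataclasses import dataclass


@dataclass
class GateFinding:
    id: str
    severity: str        # "low", "medium", "high", or "critical"
    exploitable: bool    # is there a plausible exploit path?
    in_pci_scope: bool   # does the finding touch payment-related components?


def gate_decision(findings: list) -> dict:
    """Split findings into release blockers and backlog items."""
    blocking, backlog = [], []
    for f in findings:
        is_blocking = (f.severity == "critical" and f.exploitable) or (
            f.in_pci_scope and f.severity in {"high", "critical"}
        )
        (blocking if is_blocking else backlog).append(f.id)
    return {
        "release_allowed": not blocking,
        "blocking": blocking,
        "backlog": backlog,
    }


result = gate_decision([
    GateFinding("PAY-1", "high", False, True),    # payment API check failed
    GateFinding("DEP-7", "low", False, False),    # internal dependency issue
])
print(result)
```

In a pipeline, a non-empty `blocking` list would typically translate into a failing job, while `backlog` items become tickets with acceptance criteria.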

AI Vulnerability Detection Workflow for DevSecOps Triage

An AI vulnerability detection workflow becomes most useful when it reduces noise. Security teams do not need hundreds of unfiltered alerts. They need clear priorities.

AI can combine severity, exploitability, asset exposure, runtime usage, compliance scope, and business impact. That makes DevSecOps vulnerability management more practical for teams that cannot manually inspect every finding.

AI Vulnerability Triage and Risk Prioritization

AI vulnerability triage ranks findings using context. It can consider whether a vulnerable function is reachable, whether the package runs in production, whether the asset is internet-facing, and whether active exploitation is known.

A San Francisco SaaS company may prioritize an exposed API flaw over several internal UI warnings. A Berlin financial services team may escalate findings tied to BaFin expectations, DORA resilience, or GDPR/DSGVO data processing.
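
One simple way to express this kind of ranking is a lexicographic sort, where context flags are compared before the raw score. The field order in `triage_key` below is an assumed priority, not an established standard, and the identifiers are made up for the example.

```python
from typing import NamedTuple


class TriageItem(NamedTuple):
    id: str
    known_exploited: bool      # is active exploitation known?
    internet_facing: bool      # is the asset reachable from the internet?
    reachable: bool            # is the vulnerable function actually called?
    runs_in_production: bool   # does the package run in production?
    cvss: float                # raw scanner score, used only as a tie-breaker


def triage_key(item: TriageItem) -> tuple:
    # Tuples compare element by element, so earlier fields dominate later ones.
    return (
        item.known_exploited,
        item.internet_facing,
        item.reachable,
        item.runs_in_production,
        item.cvss,
    )


def rank(items: list) -> list:
    return sorted(items, key=triage_key, reverse=True)


queue = rank([
    TriageItem("UI-2", False, False, False, True, 7.0),
    TriageItem("API-1", False, True, True, True, 6.5),
    TriageItem("UI-1", False, False, True, True, 8.0),
])
print([item.id for item in queue])
```

Here the exposed API flaw is ranked ahead of both internal UI warnings despite its lower raw score, because exposure is compared first.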

Reducing False Positives with Business Context

AI reduces false positives when it has useful business and technical context. Strong signals include:

Repository ownership

Architecture diagrams

Cloud environment tags

Production telemetry

SBOM data

Jira components

Runtime exposure

Service catalogs

Still, AI should not silently dismiss findings. A better pattern is: recommend, explain, and log.

Security teams should be able to see why a vulnerability was downgraded, accepted, escalated, or marked as fixed.
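
The "recommend, explain, and log" pattern could look something like the following sketch. The action names mirror the ones listed above; the `record_decision` function, its fields, and the JSON Lines format are assumptions for illustration.

```python
import json
from datetime import datetime, timezone

ALLOWED_ACTIONS = {"downgraded", "accepted", "escalated", "fixed"}


def record_decision(log: list, finding_id: str, action: str,
                    rationale: str, decided_by: str) -> dict:
    """Append one triage decision to a log; refuse decisions without a reason."""
    if action not in ALLOWED_ACTIONS:
        raise ValueError(f"unknown action: {action}")
    if not rationale.strip():
        raise ValueError("a rationale is required for every decision")
    entry = {
        "finding_id": finding_id,
        "action": action,
        "rationale": rationale,
        "decided_by": decided_by,  # a person, or the name of the AI assistant
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }
    # One JSON object per line is easy to ship to a log store later.
    log.append(json.dumps(entry, sort_keys=True))
    return entry


decision_log = []
record_decision(
    decision_log,
    "VULN-1",
    "downgraded",
    "Vulnerable package is only used in a test fixture and never deployed",
    "ai-triage-assistant",
)
print(decision_log[0])
```

Rejecting empty rationales is the key design choice: it prevents a finding from being silently dismissed, whether the decision came from a person or a model.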

Assigning Fixes Through Jira, GitHub, or GitLab

A strong workflow routes each issue to the right developer or team. Ownership can come from CODEOWNERS files, commit history, Jira components, GitHub teams, GitLab groups, or service catalogs.

This makes remediation faster and less political. Instead of security teams sending generic emails, the workflow creates actionable tickets with affected files, reproduction notes, recommended fixes, severity rationale, and due dates.
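
As a rough sketch of ownership-based routing, the code below resolves an owner from CODEOWNERS-style rules and builds a ticket payload. It is deliberately simplified: it uses Python's `fnmatch`, whose `*` also matches `/`, so it does not reproduce the full CODEOWNERS pattern syntax. The team handles, rule list, and ticket fields are hypothetical.

```python
from fnmatch import fnmatch

# Hypothetical rules in (pattern, owner) form. As in CODEOWNERS files,
# later rules take precedence over earlier ones.
RULES = [
    ("*", "@platform-team"),
    ("services/payments/*", "@payments-team"),
    ("*.tf", "@infra-team"),
]


def owner_for(path: str, rules: list = RULES):
    """Return the owner from the last matching rule, or None if nothing matches."""
    owner = None
    for pattern, team in rules:
        if fnmatch(path, pattern):
            owner = team
    return owner


def build_ticket(finding: dict) -> dict:
    """Turn a finding into an actionable ticket payload."""
    return {
        "title": f"[{finding['severity'].upper()}] {finding['title']}",
        "assignee": owner_for(finding["path"]) or "@security-triage",
        "affected_file": finding["path"],
        "severity_rationale": finding["rationale"],
        "recommended_fix": finding["recommended_fix"],
    }


ticket = build_ticket({
    "title": "Missing token expiry check",
    "severity": "high",
    "path": "services/payments/api/auth.py",
    "rationale": "Internet-facing payment API",
    "recommended_fix": "Validate the token expiry claim before accepting it",
})
print(ticket["assignee"], ticket["title"])
```

The resulting dictionary could then be posted to Jira, GitHub, or GitLab through whichever client the team already uses.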

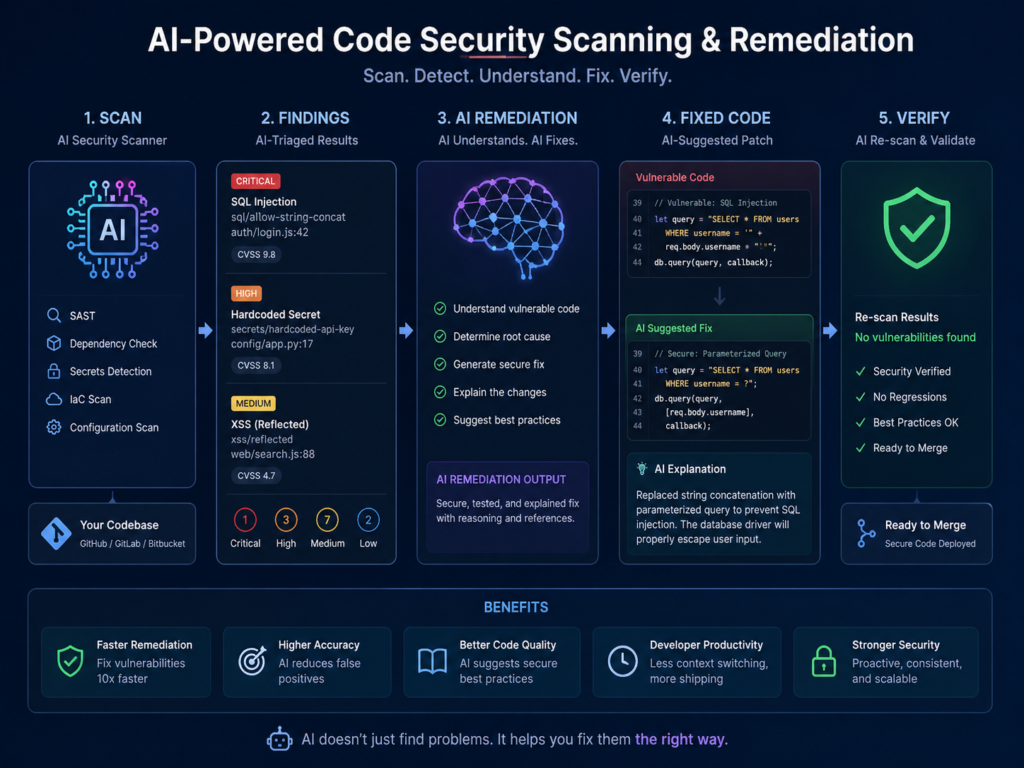

AI Code Security Scanning and Remediation Workflow

AI-generated code can introduce insecure patterns, outdated dependencies, hardcoded secrets, weak authentication, and vulnerable API handling. It should be reviewed and scanned before merge, then checked again in CI/CD.

This matters because AI-generated code often looks clean even when it contains hidden security flaws. Treat it as untrusted input until it passes review, testing, and automated security checks.

Scanning Human-Written and AI-Generated Code

Human-written and AI-generated code should go through the same workflow:

IDE feedback

Pull request review

SAST checks

SCA dependency scanning

Secrets scanning

Unit and integration tests

CI/CD security gates

Post-fix validation

For mobile products, this also means checking platform-specific risks such as insecure storage, weak API authentication, and unsafe third-party SDKs.

AI Secure Code Review and Remediation Suggestions

AI secure code review can explain why a finding matters and suggest a safer fix. It may recommend parameterized queries for injection risk, stronger token validation for API security, or safer dependency versions for known CVEs.

But developers should verify AI remediation. Suggested patches can break business logic, miss edge cases, or rely on outdated libraries. The strongest model is AI speed plus human accountability.
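
The parameterized-query recommendation is easy to verify rather than take on trust. The sketch below uses Python's built-in `sqlite3` module with an in-memory database; the table and function names are made up for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT)")
conn.executemany(
    "INSERT INTO users (email) VALUES (?)",
    [("a@example.com",), ("b@example.com",)],
)


def find_user_unsafe(email: str) -> list:
    # Vulnerable: user input is concatenated into the SQL text.
    query = f"SELECT id, email FROM users WHERE email = '{email}'"
    return conn.execute(query).fetchall()


def find_user_safe(email: str) -> list:
    # Safer: the driver binds the value, so it is never parsed as SQL.
    return conn.execute(
        "SELECT id, email FROM users WHERE email = ?", (email,)
    ).fetchall()


payload = "' OR '1'='1"
print(len(find_user_unsafe(payload)))  # every row leaks
print(find_user_safe(payload))         # no rows match the literal string
```

Replaying the same payload against the patched function is a small example of the human verification step: the fix is accepted only once the exploit stops working and legitimate lookups still succeed.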

Validation, Regression Testing, and Evidence Collection

Validation proves that a fix works and does not break the product. Teams can use regression testing, exploit replay, dependency rescans, DAST verification, and manual review to confirm closure.

Evidence collection is just as important. SOC 2, ISO 27001, PCI DSS, HIPAA, UK-GDPR, GDPR, and DORA programs often need proof that vulnerabilities were identified, assigned, fixed, approved, and reviewed.

A mature AI vulnerability detection workflow stores this evidence automatically.
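
One possible way to make stored evidence tamper-evident is to chain records with hashes, so that editing an old record invalidates everything after it. This is a sketch under that assumption; the record fields are hypothetical, and no framework listed above mandates this particular mechanism.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first record in the chain


def _digest(prev_hash: str, record: dict) -> str:
    body = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_evidence(chain: list, record: dict) -> dict:
    """Add a record whose hash covers both its content and the previous hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    entry = {
        "record": record,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, record),
    }
    chain.append(entry)
    return entry


def verify_chain(chain: list) -> bool:
    """Recompute every hash and confirm the links are unbroken."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


evidence = []
append_evidence(evidence, {"finding": "VULN-1", "event": "assigned", "to": "@payments-team"})
append_evidence(evidence, {"finding": "VULN-1", "event": "fixed", "commit": "abc123"})
append_evidence(evidence, {"finding": "VULN-1", "event": "validated", "by": "security-review"})
print(verify_chain(evidence))
```

An auditor can rerun `verify_chain` over the exported records to check that the identified, assigned, fixed, and reviewed steps were not altered after the fact.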

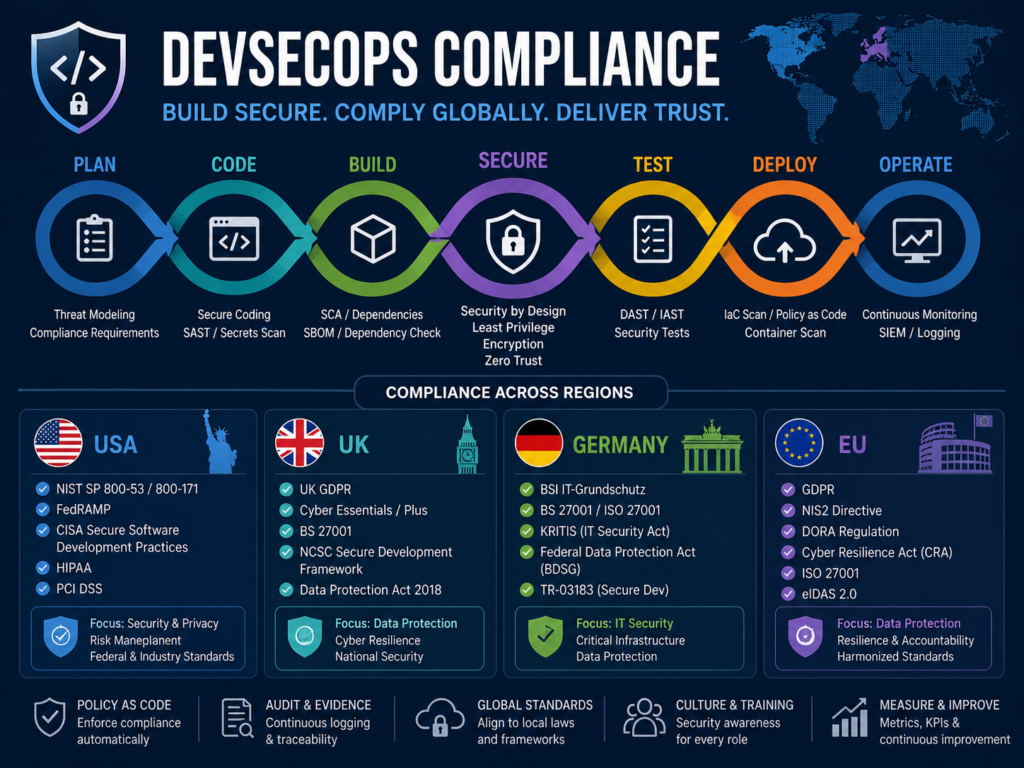

Compliance Considerations for USA, UK, Germany, and EU Teams

US and European teams can use AI in AppSec workflows safely when they control sensitive data, document AI-assisted decisions, validate recommendations, and align workflows with relevant frameworks.

Security leaders should define what code, logs, customer data, secrets, prompts, and vulnerability details can be shared with AI tools. They should also review data residency, retention, model training policies, access controls, and audit trails.
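
A data-sharing policy of this kind can be enforced in code before anything reaches an AI tool. The sketch below assumes a default-deny model; the category names and the allow/deny values are placeholders that each organization would set from its own contracts and risk decisions.

```python
# Hypothetical policy: any category that is not explicitly allowed is withheld.
SHAREABLE_WITH_AI = {
    "source_code": True,          # assumes the vendor contract permits it
    "scanner_findings": True,
    "production_secrets": False,
    "customer_personal_data": False,
    "payment_card_data": False,
}


def can_share(category: str) -> bool:
    """Default-deny lookup: unknown categories are treated as not shareable."""
    return SHAREABLE_WITH_AI.get(category, False)


def split_payload(items: list) -> tuple:
    """Split (category, content) pairs into shareable items and withheld categories."""
    allowed = [(category, content) for category, content in items if can_share(category)]
    withheld = [category for category, _ in items if not can_share(category)]
    return allowed, withheld


allowed, withheld = split_payload([
    ("scanner_findings", "SQL injection in orders endpoint"),
    ("production_secrets", "<redacted>"),
    ("runtime_logs", "<not yet classified>"),
])
print(allowed)
print(withheld)
```

Returning the withheld categories, rather than dropping them silently, gives the audit trail something to record.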

USA

US teams often map AppSec controls to SOC 2, HIPAA, PCI DSS, and NIST SSDF.

A healthcare software vendor in New York may need HIPAA-aligned access control and audit logging. An Austin payments startup may prioritize PCI DSS evidence for secure development and vulnerability management.

NIST SSDF is especially useful because it gives teams a structured secure development baseline.

UK

UK teams should align AI DevSecOps workflows with UK-GDPR, FCA expectations, Open Banking security needs, and NHS supplier security where relevant.

A London fintech or Manchester health tech company should document how AI recommendations are reviewed, how sensitive data is protected, and how vulnerabilities are tracked to closure.

For regulated buyers, this evidence can become part of procurement, due diligence, and vendor security reviews.

Germany and EU

Germany and EU teams must consider GDPR/DSGVO, BaFin expectations, NIS2, DORA, ISO 27001, and data residency.

DORA has applied from 17 January 2025 for many EU financial entities, increasing pressure around ICT risk, third-party providers, incident handling, and operational resilience.

A Munich insurer, Frankfurt bank, Berlin SaaS provider, or Amsterdam cloud platform should design AI vulnerability workflows with clear evidence, human oversight, and careful data transfer controls.

How to Build an AI Vulnerability Detection Workflow

To build an AI vulnerability detection workflow, connect repositories, CI/CD pipelines, scanners, ticketing tools, runtime signals, and compliance reports into one repeatable process.

Start with ownership and data flows before adding advanced automation. The goal is not to buy every tool. The goal is to make security findings visible, prioritized, assigned, fixed, validated, and measurable.

Map Assets, Repositories, APIs, and Owners

Start by mapping repositories, applications, APIs, cloud accounts, databases, third-party services, and owners.

Add context such as:

Production criticality

Data classification

Internet exposure

Compliance scope

Deployment frequency

Business owner

Engineering owner

This foundation helps AI prioritize accurately. Without asset context, even a strong scanner may treat a public authentication service and an internal prototype as equal risk.
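
The asset context listed above maps naturally onto a small inventory record. The following sketch assumes a Python dataclass with one field per context item, plus a hypothetical `context_gaps` check that flags assets missing the fields prioritization depends on.

```python
from dataclasses import dataclass, field


@dataclass
class Asset:
    name: str
    production_criticality: str = ""   # e.g. "low", "medium", "high"
    data_classification: str = ""      # e.g. "public", "internal", "restricted"
    internet_exposed: bool = False
    compliance_scope: list = field(default_factory=list)
    deploys_per_week: int = 0
    business_owner: str = ""
    engineering_owner: str = ""


# Fields that must be filled in before findings can be prioritized or routed.
REQUIRED = (
    "production_criticality",
    "data_classification",
    "business_owner",
    "engineering_owner",
)


def context_gaps(asset: Asset) -> list:
    """List the required context fields that are still empty for this asset."""
    return [name for name in REQUIRED if not getattr(asset, name)]


auth_service = Asset(
    name="public-auth-service",
    production_criticality="high",
    data_classification="restricted",
    internet_exposed=True,
    compliance_scope=["SOC 2", "PCI DSS"],
    deploys_per_week=10,
    business_owner="head-of-payments",
    engineering_owner="@identity-team",
)
prototype = Asset(name="internal-prototype", production_criticality="low")

print(context_gaps(auth_service))
print(context_gaps(prototype))
```

Running a check like this across the inventory shows where ownership or classification is missing before any scanner output is prioritized.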

Connect AI Scanning to IDE, PR, CI/CD, and Runtime Signals

Next, connect scanning to developer environments, pull requests, CI/CD pipelines, package registries, container builds, API tests, and runtime telemetry.

Feed results into GitHub, GitLab, Jira, or your vulnerability management platform.

For teams modernizing security reporting, dashboards can track MTTR, open critical findings, SLA breaches, and compliance evidence.

Prioritize, Remediate, Validate, and Report

Define severity rules, escalation paths, SLA targets, exception workflows, and reporting templates.

Critical exploitable issues should trigger immediate ownership and release decisions. Lower-risk findings should still be tracked, grouped, reviewed, and closed properly.
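
SLA targets and reporting metrics such as MTTR are simple to compute once tickets carry open and close timestamps. The SLA day counts below are assumed values for illustration, not recommended targets.

```python
from datetime import datetime, timedelta

# Hypothetical remediation SLAs in days, per severity.
SLA_DAYS = {"critical": 2, "high": 7, "medium": 30, "low": 90}


def due_date(severity: str, opened_at: datetime) -> datetime:
    return opened_at + timedelta(days=SLA_DAYS[severity])


def is_breached(severity: str, opened_at: datetime, now: datetime,
                closed_at: datetime = None) -> bool:
    """A ticket breaches its SLA if it closed late or is still open past due."""
    end = closed_at if closed_at is not None else now
    return end > due_date(severity, opened_at)


def mttr_days(tickets: list):
    """Mean time to remediate in days over closed (opened, closed) pairs."""
    durations = [
        (closed - opened).total_seconds() / 86400
        for opened, closed in tickets
        if closed is not None
    ]
    return sum(durations) / len(durations) if durations else None


opened = datetime(2025, 1, 1)
now = datetime(2025, 1, 10)
tickets = [
    (opened, datetime(2025, 1, 3)),   # closed after 2 days
    (opened, datetime(2025, 1, 5)),   # closed after 4 days
    (opened, None),                   # still open, excluded from MTTR
]

print(is_breached("critical", opened, now))
print(mttr_days(tickets))
```

Open tickets are excluded from MTTR here but still count toward SLA breaches, so the two metrics should be read together.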

Finally, validate fixes and preserve evidence. That is what turns AppSec from scattered alerts into a reliable secure SDLC.

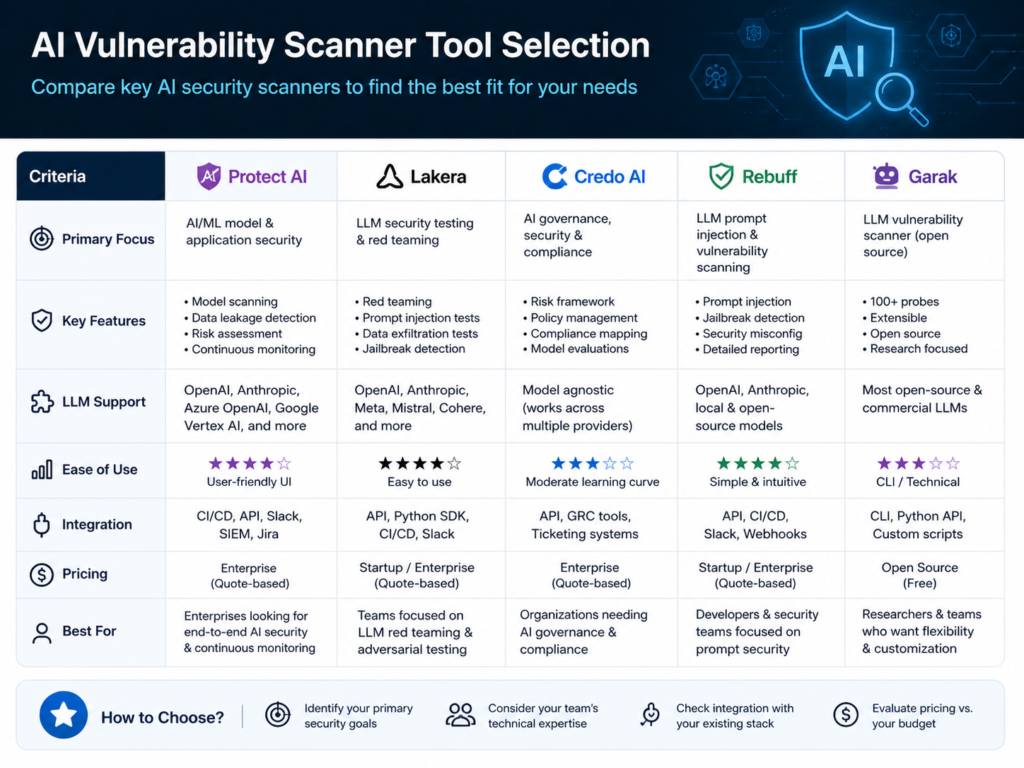

Choosing an AI Vulnerability Scanner and DevSecOps Tools

The best AI vulnerability scanner should support code security scanning, dependency analysis, secrets detection, CI/CD integration, contextual prioritization, remediation guidance, compliance reporting, and developer-friendly workflows.

The right choice depends on your stack, risk profile, team maturity, and regulatory environment.

A regulated enterprise may need strong audit trails and policy controls. A startup may need fast pull request feedback and simple GitHub integration. A multinational platform may need regional data controls for the US, UK, Germany, and EU.

Must-Have Capabilities for AI-Powered DevSecOps

Look for tools that support:

SAST

SCA

DAST

Secrets scanning

SBOM generation

API security testing

Infrastructure-as-code scanning

CI/CD integration

Policy-as-code

Remediation guidance

Evidence exports

Strong tools also support mappings to OWASP Top 10, OWASP ASVS, NIST SSDF, PCI DSS, SOC 2, ISO 27001, GDPR, and UK-GDPR.

Good UX matters too. Developers are more likely to fix issues when findings are clear, contextual, and close to the code.

Questions to Ask Vendors Before Buying

Before choosing a vendor, ask how they handle customer code, prompts, logs, secrets, and vulnerability data.

Key questions include:

Does customer data train the vendor’s models?

Where is data processed?

How long is data retained?

Is EU or UK data residency available?

How are secrets protected?

Can the tool integrate with Jira, GitHub, GitLab, and CI/CD?

Does it support SBOMs and compliance mapping?

Can it prove that AI-generated recommendations were validated?

These answers matter, especially for regulated US, UK, Germany, and EU teams.

When to Use Snyk, Checkmarx, Veracode, Cycode, Apiiro, Invicti, or OX Security

Use Snyk when developer-first dependency and code scanning are central. Consider Checkmarx or Veracode for mature enterprise AppSec programs.

Look at Cycode, Apiiro, or OX Security when software supply chain security, pipeline visibility, and ASPM-style prioritization matter. Use Invicti when DAST and web application scanning are major needs.

Many teams use more than one tool. The workflow, not the logo, determines whether findings turn into secure releases.

Concluding Remarks

An AI vulnerability detection workflow gives security and engineering teams a practical way to move from noisy alerts to measurable risk reduction. It helps teams detect issues earlier, prioritize what matters, assign fixes clearly, validate remediation, and keep evidence ready for audits.

Need help turning scattered AppSec alerts into a practical AI vulnerability detection workflow? Mak It Solutions can help scope repositories, CI/CD gates, dashboards, compliance evidence, and developer workflows for US, UK, Germany, and EU delivery teams. Start with web development services or business intelligence services to build a safer software delivery process.

Key Takeaways

An AI vulnerability detection workflow connects scanning, prioritization, ownership, remediation, validation, and audit evidence.

AI is most valuable when it reduces alert noise using business context, runtime exposure, exploitability, and compliance impact.

AI-generated code should be scanned before merge and again in CI/CD.

US, UK, Germany, and EU teams should align workflows with relevant frameworks such as SOC 2, HIPAA, PCI DSS, NIST SSDF, UK-GDPR, GDPR/DSGVO, BaFin, NIS2, DORA, and ISO 27001.

Tool choice matters, but workflow design matters more.

Developer-friendly integrations with IDEs, pull requests, Jira, GitHub, GitLab, and CI/CD pipelines improve fix rates.

FAQs

Q : Can AI vulnerability detection replace manual secure code review?

A : No. AI vulnerability detection can speed up secure code review, but it should not fully replace human judgment. AI is useful for pattern detection, dependency analysis, triage, and remediation suggestions. Human reviewers are still needed for business logic, abuse cases, architecture decisions, regulatory risk, and final acceptance.

Q : How accurate are AI vulnerability scanners compared with traditional SAST tools?

A : Accuracy depends on the tool, language, context, and integration quality. Traditional SAST tools are strong at rule-based detection, while AI can help interpret risk, reduce noise, and suggest fixes. Teams should measure false positives, fix acceptance, escaped vulnerabilities, and developer feedback.

Q : What data should not be shared with AI security tools?

A : Avoid sharing production secrets, private keys, credentials, customer personal data, protected health information, payment card data, sensitive logs, and proprietary code unless the vendor contract and technical controls explicitly allow it. Review retention, model training, data residency, encryption, access controls, and audit logging before connecting AI tools.

Q : How do AI DevSecOps workflows support SOC 2 or ISO 27001 audits?

A : They create consistent evidence: scan results, ticket ownership, severity decisions, remediation timestamps, approvals, exceptions, and validation records. For SOC 2 and ISO 27001, this helps prove vulnerability management is repeatable, assigned, reviewed, and controlled.

Q : What is the difference between AI code security scanning and AI remediation?

A : AI code security scanning identifies potential vulnerabilities in code, dependencies, APIs, secrets, or configuration. AI remediation suggests how to fix those issues, such as changing code, upgrading packages, tightening validation, or improving authentication. Scanning answers “what is wrong?” while remediation answers “how might we fix it?”