AI-Assisted QA vs Traditional QA for Teams

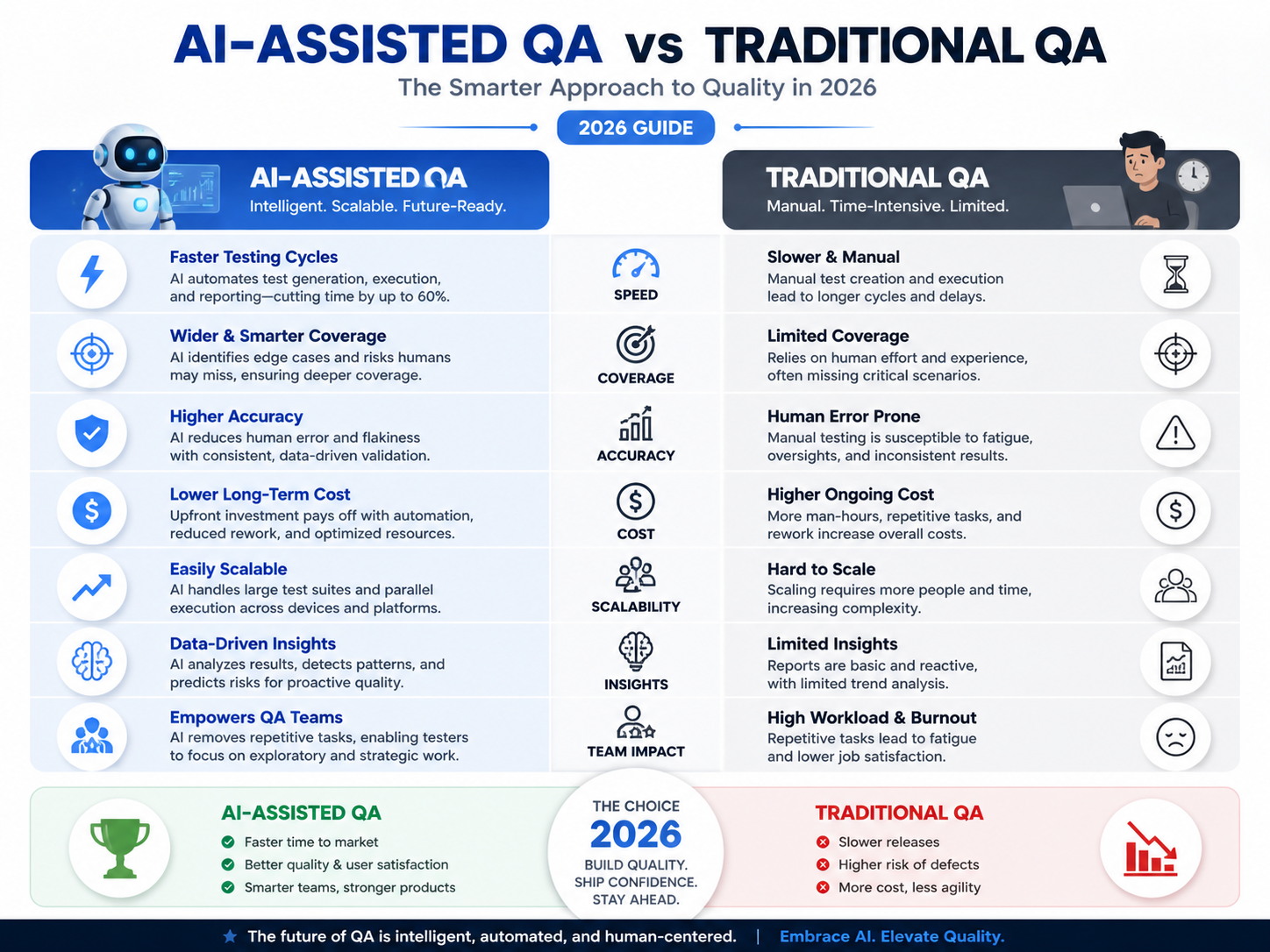

AI-assisted QA vs traditional QA is now a practical decision for software teams that care about speed, quality, compliance, and release confidence. The right choice is not usually “AI or humans.” It is how to combine both without creating new risk.

AI-assisted QA helps automate repetitive testing work, such as test generation, regression testing, flaky test detection, reporting, and risk analysis. Traditional QA still matters because human testers are better at judgment, usability, unclear requirements, edge cases, and business impact. In 2026, the strongest QA teams use a hybrid model: AI for scale, humans for accountability.

For SaaS, fintech, healthtech, and enterprise teams in the USA, UK, Germany, and wider EU, this shift is happening alongside heavy AI investment. Gartner forecasts worldwide AI spending to reach $2.52 trillion in 2026, while overall IT spending is expected to reach $6.31 trillion in 2026.

Why QA Leaders Are Rethinking Testing Workflows

Modern applications change too quickly for old testing cycles. A SaaS team in Austin or San Francisco may deploy several times per week. A bank in London or Frankfurt may need slower, more controlled releases because auditability and compliance matter more.

Traditional QA still creates a reliable quality baseline. But high-volume regression testing, test maintenance, log review, and release-risk scoring often need more automation.

That is where AI-assisted testing fits best.

For broader digital delivery support, Mak It Solutions offers software and IT services across web, mobile, business intelligence, and enterprise development.

What Is Traditional QA?

Traditional QA is a software testing approach built around manual testing, scripted automation, regression cycles, exploratory testing, and human-led defect review. It gives teams a structured way to check whether software works as expected before users experience problems.

Manual Testing, Scripted Automation, and Regression Cycles

Traditional QA teams usually combine manual test cases with automation frameworks such as Selenium, Playwright, or Cypress. Testers document test runs, manage defects, review requirements, and connect automated suites to CI/CD pipelines.

Regression testing is a major part of this model. When developers update a feature, QA checks whether existing functionality still works.

Where Traditional QA Still Works Best

Traditional QA performs well when software requires interpretation. A human tester can notice confusing flows, missing context, awkward UX, accessibility issues, or a business rule that technically “passes” but still feels wrong.

That matters in healthcare workflows, fintech onboarding, ecommerce checkout, mobile apps, and enterprise dashboards.

The Limits of Traditional QA

The downside is speed. Manual testing can slow down releases, and scripted automation can break when interfaces change.

Large regression suites also become expensive to maintain, especially across web apps, APIs, mobile devices, and cloud platforms. Companies modernizing front-end systems may need stronger QA alignment with front-end development services and backend architecture.

What Is AI-Assisted QA in Software Testing?

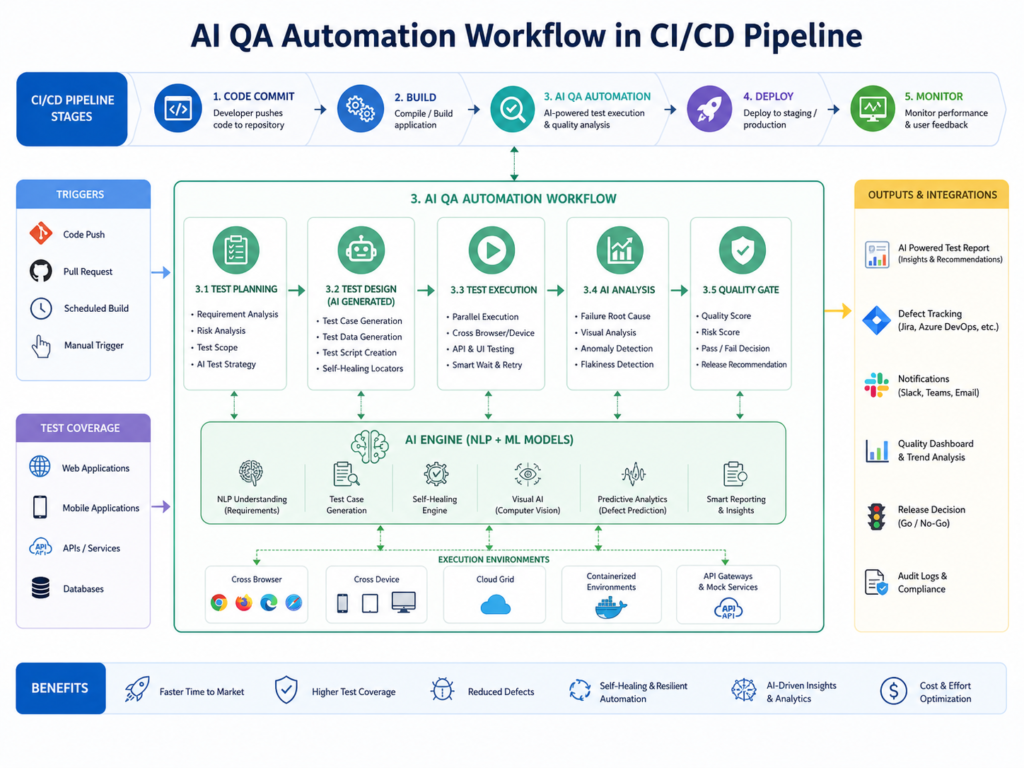

AI-assisted QA uses artificial intelligence to support test creation, execution, maintenance, reporting, and risk analysis. It does not remove QA work. It changes where human effort is spent.

How AI Is Used in QA Today

AI is now used for test case generation, defect clustering, log analysis, visual testing, natural-language test creation, flaky test detection, and release-risk scoring.

In modern teams, AI QA automation may connect with GitHub Actions, Azure DevOps, AWS environments, cloud testing tools, and continuous testing workflows.

AI Test Generation and Test Prioritization

AI can analyze user stories, code changes, bug history, and production incidents to suggest test cases. It can also prioritize tests that are more likely to catch defects.

That helps teams avoid running massive test suites blindly.
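As a rough illustration of the prioritization idea, the sketch below ranks tests by how much they overlap with changed files and how often they failed recently. The `CaseHistory` fields, the 0.7/0.3 weights, and the sample data are assumptions made for this example, not the behavior of any particular tool.

```python
from dataclasses import dataclass


@dataclass
class CaseHistory:
    """Hypothetical summary of one test's history; field names are illustrative."""
    name: str
    covered_files: set     # source files the test is known to exercise
    recent_runs: int       # runs in the look-back window
    recent_failures: int   # failures in the same window


def priority_score(test: CaseHistory, changed_files: set) -> float:
    """Blend change overlap with historical failure rate (weights are arbitrary)."""
    overlap = len(test.covered_files & changed_files) / max(len(test.covered_files), 1)
    failure_rate = test.recent_failures / max(test.recent_runs, 1)
    return 0.7 * overlap + 0.3 * failure_rate


def prioritize(tests: list, changed_files: set) -> list:
    """Return test names ordered from highest to lowest score."""
    ranked = sorted(tests, key=lambda t: priority_score(t, changed_files), reverse=True)
    return [t.name for t in ranked]


tests = [
    CaseHistory("test_login", {"auth.py"}, recent_runs=50, recent_failures=1),
    CaseHistory("test_checkout", {"cart.py", "payment.py"}, recent_runs=50, recent_failures=5),
    CaseHistory("test_search", {"search.py"}, recent_runs=50, recent_failures=0),
]
print(prioritize(tests, changed_files={"payment.py"}))
# ['test_checkout', 'test_login', 'test_search']
```

A real prioritizer would likely draw on richer signals, such as bug history and production incidents, but even a simple score like this makes the ranking easy for a reviewer to explain.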

In business intelligence products, for example, AI can help flag dashboard logic, reporting filters, API changes, and data workflows that need extra validation. Mak It Solutions’ business intelligence services support analytics, reporting, integration, and secure data workflows.

Self-Healing Tests and Maintenance Support

Self-healing tests can reduce maintenance by adapting to small UI changes. If a button label or selector changes slightly, the AI may still identify the intended element instead of failing the test immediately.

Useful? Yes.

Magic? No.

Teams still need review rules, logs, and human approval for important test changes.
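One way to picture self-healing with review built in is a locator that falls back to text similarity when the recorded id disappears, and records every fallback for a human to check. This is a minimal sketch: the page is modeled as a plain list of dicts rather than a real browser DOM, and `find_element`, `healing_log`, and the 0.75 threshold are hypothetical names and values chosen for illustration.

```python
from difflib import SequenceMatcher

healing_log = []  # every healed lookup is recorded here for later human review


def find_element(page, locator, min_similarity=0.75):
    """Return (element, healed) from `page`, a list of dicts standing in for a DOM."""
    # Normal path: the recorded id still exists.
    for element in page:
        if element.get("id") == locator["id"]:
            return element, False

    # Fallback: choose the element whose visible text best matches the recorded text.
    def similarity(element):
        return SequenceMatcher(None, element.get("text", ""), locator["text"]).ratio()

    best = max(page, key=similarity, default=None)
    if best is None or similarity(best) < min_similarity:
        raise LookupError(f"no acceptable match for {locator['id']!r}")

    healing_log.append({
        "expected_id": locator["id"],
        "used_id": best.get("id"),
        "similarity": round(similarity(best), 2),
        "needs_review": True,  # a person confirms the match before the test is updated
    })
    return best, True


page = [
    {"id": "order-submit", "text": "Submit your order"},  # id was renamed in a redesign
    {"id": "cancel", "text": "Cancel"},
]
element, healed = find_element(page, {"id": "submit-btn", "text": "Submit order"})
print(element["id"], healed)  # order-submit True
```

The test keeps running, but the change is never silent: the log entry gives reviewers the evidence they need to accept or reject the healed locator.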

AI-Assisted QA vs Traditional QA

The main difference between AI-assisted QA and traditional QA is scale versus judgment. Traditional QA depends on human-led test design, execution, and review. AI-assisted QA uses automation and machine learning to speed up repetitive work, expand coverage, and highlight risk.

A practical comparison:

| Area | Traditional QA | AI-Assisted QA |
|---|---|---|
| Speed | Slower for large regression cycles | Faster for repeatable test suites |
| Coverage | Limited by team capacity | Broader scenario generation |
| Maintenance | Scripts can be brittle | Self-healing can reduce rework |
| Judgment | Strong human interpretation | Requires human validation |
| Compliance | Strong if documentation is disciplined | Needs audit trails and explainability |
| Best use | Usability, exploratory testing, edge cases | Regression, prioritization, reporting, log analysis |

Where Traditional QA Performs Better

Traditional QA is stronger when trust, accessibility, emotional context, or regulatory interpretation is involved.

A human tester is better suited to asking:

Does this healthcare workflow make sense for a patient?

Would this loan journey confuse a first-time applicant?

Is the dashboard technically correct but misleading?

Does this mobile experience feel natural on a real device?

For mobile-first products, human testing remains critical across real devices, app-store expectations, and regional user behavior. QA should align early with mobile app development services.

Where AI-Assisted QA Creates the Biggest Advantage

AI-assisted QA works best in high-volume, repeatable, data-rich environments. That includes automated regression testing, test prioritization, release-risk scoring, log analysis, and defect pattern detection.

A New York SaaS team can use AI to shorten regression cycles before weekly releases. A Berlin fintech team can use AI to identify high-risk payment flows before audit review. A Manchester healthtech team can use AI to summarize test evidence while keeping clinical workflow validation human-led.

Benefits and Limitations of AI QA Automation

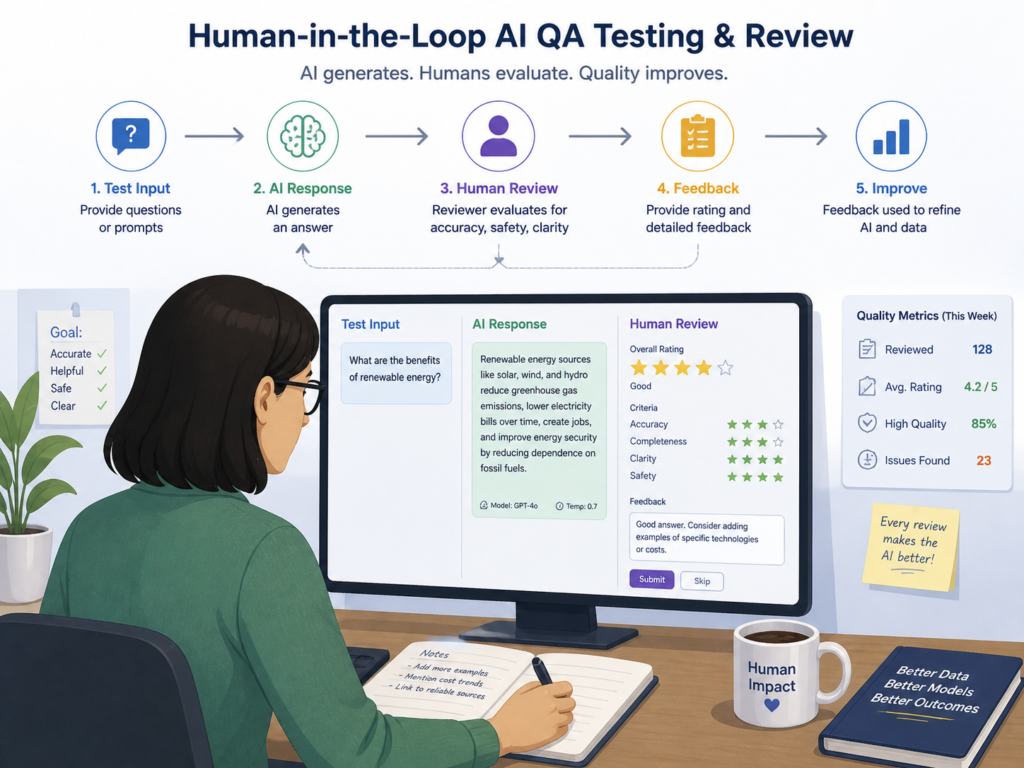

AI QA automation can improve speed and coverage, but it should not fully replace human testers. Teams still need people to validate business logic, usability, compliance risk, and customer experience.

Benefits of AI-Assisted QA

AI-assisted QA can help teams:

Generate test cases faster

Prioritize high-risk areas

Reduce repetitive regression effort

Detect flaky tests earlier

Summarize logs and test evidence

Support continuous testing in CI/CD pipelines

Reduce some maintenance work through self-healing tests

The biggest practical benefit is not just faster testing. It is better use of QA time.

Instead of spending hours fixing brittle scripts, QA engineers can focus on test strategy, risk analysis, data quality, and release confidence.
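Flaky test detection, one of the benefits listed above, can start from a very simple rule: a test that both passes and fails on the same commit is suspect, because the code did not change between runs. The sketch below assumes CI history is available as `(test_name, commit_sha, passed)` tuples; that shape and the sample data are illustrative assumptions.

```python
from collections import defaultdict


def find_flaky_tests(runs):
    """Return names of tests with mixed outcomes on at least one commit.

    `runs` is an iterable of (test_name, commit_sha, passed) tuples.
    """
    outcomes = defaultdict(set)
    for test_name, commit_sha, passed in runs:
        outcomes[(test_name, commit_sha)].add(passed)
    # Same test, same commit, both True and False observed: likely flaky.
    return sorted({name for (name, _), seen in outcomes.items() if seen == {True, False}})


runs = [
    ("test_checkout", "a1b2c3", True),
    ("test_checkout", "a1b2c3", False),  # same commit, different result
    ("test_login", "a1b2c3", True),
    ("test_login", "d4e5f6", False),     # failed on a different commit: not flaky by this rule
]
print(find_flaky_tests(runs))  # ['test_checkout']
```

AI-based tools may go further by clustering failure messages or timing patterns, but a deterministic rule like this is a useful baseline to compare their output against.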

Limitations and Risks

AI can also create problems.

It may generate irrelevant tests, miss edge cases, produce false positives, or suggest changes based on incomplete requirements. If training data, test data, or requirement quality is poor, the output will also be weak.

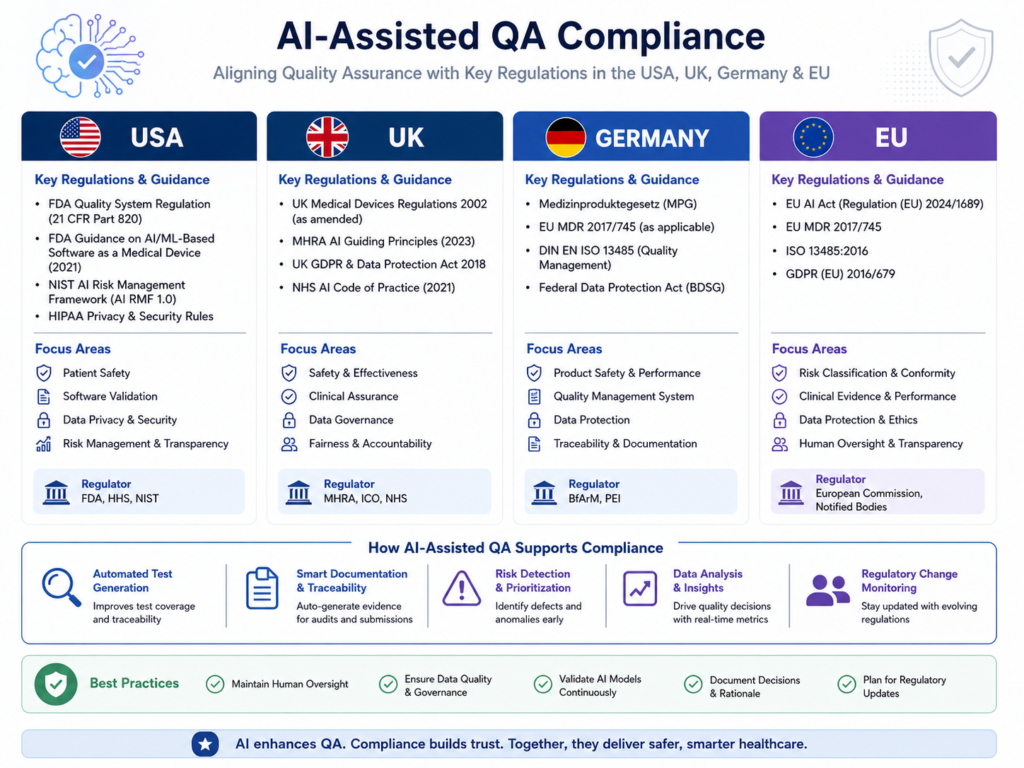

Explainability is another concern. Regulated teams need to understand why a test was prioritized, changed, skipped, or marked as risky.

That matters in SOC 2, ISO 27001, PCI DSS, HIPAA, UK-GDPR, GDPR, BaFin, and DORA-sensitive environments.
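A lightweight way to support that kind of explainability is to record every AI-driven decision with its reason attached. The sketch below is one possible shape for such a record, not a format required by any of the standards named above; `DecisionRecord`, its fields, and the action names are assumptions for illustration.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone
from typing import Optional

# Hypothetical action names mirroring "prioritized, changed, skipped, or marked as risky".
ALLOWED_ACTIONS = {"prioritized", "changed", "skipped", "marked_risky"}


@dataclass
class DecisionRecord:
    test_name: str
    action: str
    reason: str                        # human-readable explanation of the decision
    decided_by: str                    # tool or model that made the recommendation
    reviewed_by: Optional[str] = None  # filled in once a person signs off
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def __post_init__(self):
        if self.action not in ALLOWED_ACTIONS:
            raise ValueError(f"unknown action: {self.action!r}")
        if not self.reason.strip():
            raise ValueError("a decision without a stated reason is not auditable")

    def to_json_line(self) -> str:
        """Serialize as one JSON line, suitable for an append-only log file."""
        return json.dumps(asdict(self), sort_keys=True)


record = DecisionRecord(
    test_name="test_refund_flow",
    action="skipped",
    reason="no covered files changed in this release",
    decided_by="ai-prioritizer",  # placeholder tool name
)
print(record.to_json_line())
```

Rejecting records with no reason is the important design choice here: it forces the tooling to state why something happened at the moment it happens, rather than reconstructing it during an audit.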

Why Human-in-the-Loop QA Still Matters

Human-in-the-loop QA keeps accountability clear.

Humans should review AI-generated test cases, approve changes to critical suites, validate business logic, and challenge suspicious results. AI can recommend. It should not quietly make final quality decisions in high-risk systems.

Mak It Solutions has also published guidance on human-in-the-loop AI workflows, including governance patterns for US, UK, German, and EU organizations.

GEO Considerations for USA, UK, Germany, and EU QA Teams

The balance between AI-assisted QA and traditional QA also looks different by region. A fast-growth SaaS company in the USA may care most about CI/CD speed. A UK fintech may focus more on payment flows and regulatory evidence. A German or EU enterprise may place heavier emphasis on data protection, auditability, and explainability.

USA

In the USA, SaaS teams in New York, Austin, Seattle, and San Francisco often focus on release velocity, customer retention, and platform scalability.

Healthtech teams must consider HIPAA expectations, while payment platforms may need PCI DSS controls. HHS OCR investigates large breaches of protected health information affecting 500 or more individuals, which keeps healthcare data security under strong scrutiny.

UK

In the UK, London fintech teams often test around Open Banking APIs, FCA expectations, payment flows, and fraud controls.

Manchester healthtech or public-sector vendors may also need NHS-style governance and UK-GDPR alignment. AI-assisted QA can help summarize evidence, but teams should avoid sending sensitive production data into AI tools without approved privacy, legal, and security controls.

Germany and EU

Germany and the wider EU place strong emphasis on GDPR/DSGVO, transparency, data residency, and auditability.

The EU AI Act entered into force on 1 August 2024 and follows a phased application timeline, with full applicability generally from 2 August 2026 and some high-risk AI system rules extending later.

For QA teams using AI in regulated software delivery, that means tool purpose, data handling, human oversight, validation controls, and audit logs should be documented from the start.

The Future of QA Testing With AI

AI is unlikely to fully replace QA testers in complex or regulated software environments. It will reshape the role.

Will AI Replace QA Testers?

AI will replace some repetitive QA tasks, especially manual script execution, basic regression support, and routine reporting.

But QA as a discipline is bigger than clicking through test cases. Mature QA requires product understanding, risk judgment, customer empathy, technical awareness, and compliance thinking.

Testers who only execute scripts may feel pressure. QA professionals who understand automation, systems thinking, AI validation, observability, and risk-based testing will become more valuable.

QA Engineer Skills Needed in 2026

QA engineers now need a broader skill set, including:

Automation frameworks

API testing

CI/CD workflows

Test data management

Prompt evaluation

AI output review

Risk-based testing

Observability and logs

Secure software delivery

Compliance documentation

Backend-heavy products may also benefit from QA planning connected to back-end development services and API architecture.

Agentic Testing and Continuous QA

The future is moving toward continuous QA, where test design, execution, monitoring, and release-risk analysis happen throughout development.

Agentic testing may allow AI agents to explore workflows, propose tests, detect anomalies, and recommend follow-up checks.

Still, final ownership should remain with accountable human teams.

How to Adopt AI-Assisted QA Without Increasing Risk

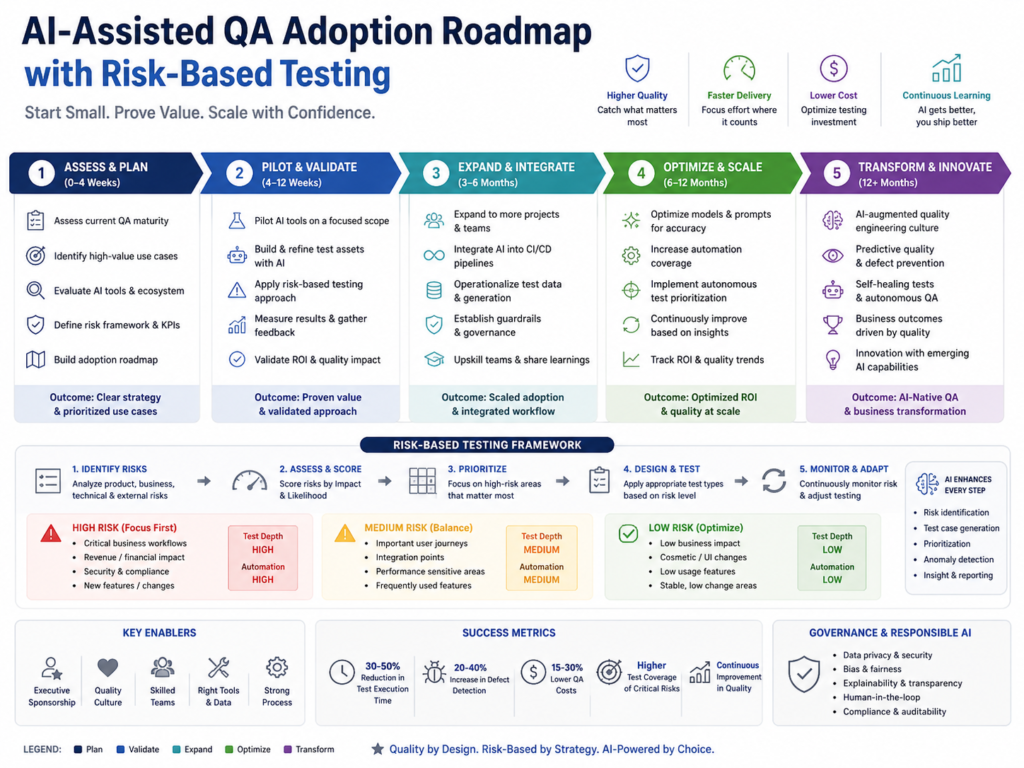

The safest way to adopt AI-assisted QA is to start with low-risk, high-volume tasks, keep human review in place, document decisions, and align automation with compliance requirements.

Do not begin with your riskiest regulated workflows.

Start With Regression, Maintenance, and Prioritization

Start with automated regression testing, flaky test detection, and test maintenance. These areas usually deliver visible value without handing critical judgment to AI.

A sensible first pilot might cover:

Login

Search

Dashboards

Checkout

API regression

Notification flows

Common user journeys

Build Governance Around Data and AI Outputs

Before scaling AI QA automation, define the rules.

Teams should decide:

What data AI tools can access

Who approves AI-generated tests

How outputs are logged

Which workflows require human review

How sensitive test data is protected

Which vendors need security review

For GDPR, UK-GDPR, HIPAA, and PCI DSS environments, avoid exposing sensitive production data unless legal, privacy, and security teams approve the architecture.
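The governance questions above can be turned into an explicit, testable gate. The following is a minimal sketch under stated assumptions: the data classes, workflow names, and the `ProposedChange` and `evaluate` names are hypothetical, and a real policy would come from legal, privacy, and security review rather than from constants in code.

```python
from dataclasses import dataclass

# Hypothetical policy values, for illustration only.
ALLOWED_DATA_CLASSES = {"synthetic", "anonymized"}
HUMAN_REVIEW_WORKFLOWS = {"payments", "clinical", "release_approval"}


@dataclass
class ProposedChange:
    workflow: str          # e.g. "search", "payments"
    data_class: str        # classification of the data the AI tool would read
    approved_by: str = ""  # empty until a named human signs off


def evaluate(change: ProposedChange) -> str:
    """Return 'blocked', 'needs_human_review', or 'allowed'."""
    # Data access is checked first: no approval overrides a disallowed data class.
    if change.data_class not in ALLOWED_DATA_CLASSES:
        return "blocked"
    # Sensitive workflows need a named approver before the change is applied.
    if change.workflow in HUMAN_REVIEW_WORKFLOWS and not change.approved_by:
        return "needs_human_review"
    return "allowed"


print(evaluate(ProposedChange("search", "synthetic")))               # allowed
print(evaluate(ProposedChange("payments", "anonymized")))            # needs_human_review
print(evaluate(ProposedChange("payments", "anonymized", "j.doe")))   # allowed
print(evaluate(ProposedChange("search", "production")))              # blocked
```

Keeping the rules in one small function makes them easy to review, version, and cite in compliance documentation.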

Use an Audit, Pilot, or Roadmap

Use an AI QA audit when your test suite is noisy, slow, or poorly documented.

Use a pilot when one product team has repeatable regression pain.

Use a roadmap when multiple teams need shared standards, tooling, governance, and reporting.

For custom automation or AI workflow integration, Mak It Solutions’ Python development services can support secure backend logic, integrations, and QA tooling.

Wrap It Up

AI-assisted QA vs traditional QA is not a replacement story. It is a quality strategy decision.

AI can help software teams test faster, cover more scenarios, and manage repetitive work with less friction. Traditional QA keeps the human judgment that software quality still depends on.

Planning to compare AI-assisted QA vs traditional QA inside your current delivery process? Mak It Solutions can help you assess test maturity, identify safe AI automation opportunities, and design a practical roadmap for SaaS, fintech, healthtech, or enterprise platforms.

Start with a scoped estimate or consultation through the Mak It Solutions contact page.

Key Takeaways

AI-assisted QA is best for repetitive, high-volume testing.

Traditional QA remains stronger for judgment, usability, and edge cases.

The best QA model in 2026 is hybrid.

US teams often prioritize release velocity.

UK, Germany, and EU teams usually need stronger governance and documentation.

AI QA automation can reduce maintenance effort, but false positives and explainability gaps require human oversight.

Regulated teams should start with low-risk regression testing before applying AI to sensitive workflows.

FAQs

Q: Is AI-assisted QA better than manual testing?

A: AI-assisted QA is better for repetitive, high-volume tasks such as regression testing, test prioritization, log analysis, and maintenance support. Manual testing is better for usability, exploratory testing, emotional context, and unclear requirements. Most mature teams use both.

Q: What QA tasks should not be fully automated with AI?

A: AI should not fully automate final release approval, regulated workflow validation, accessibility judgment, security exception approval, clinical risk decisions, financial risk decisions, or customer-impact assessments. AI can support analysis, but people should review critical outcomes.

Q: Can AI testing tools support GDPR, HIPAA, or SOC 2 compliance?

A: AI testing tools can support compliance by improving documentation, test evidence, audit trails, and risk-based coverage. They do not make a system compliant by themselves. Teams still need data controls, access management, vendor review, retention policies, human oversight, and security documentation.

Q: What skills do QA engineers need for AI testing tools?

A: QA engineers need automation knowledge, API testing, CI/CD awareness, test design, risk-based testing, and strong review skills. They also need to understand AI limitations, data privacy, explainability, and false positives.

Q: Should regulated teams use AI-assisted QA?

A: Yes, but carefully. Regulated teams should start with low-risk regression testing, keep humans in the approval loop, document AI-generated outputs, and avoid exposing sensitive production data without approved safeguards.