AI Code Review Guide for Quality Gates

AI code review helps teams catch bugs, security gaps, policy issues, and maintainability risks before code reaches production. It is especially useful when developers use AI coding assistants, because AI-generated code can look correct while still missing edge cases, secure patterns, or compliance evidence.

The strongest AI code review workflow combines automated checks, secure SDLC guardrails, and human approval for high-risk changes. Done well, it gives engineering teams faster delivery without losing control of quality, security, or audit readiness.

Why AI Code Review Matters Now

AI-assisted development is no longer a side experiment. Many engineering teams now use AI tools to draft functions, tests, APIs, migrations, UI components, and documentation.

That speed is useful. It also creates a new problem: verification debt.

When more code is produced in less time, teams need better ways to check it. Manual review alone can miss duplicated logic, unsafe dependencies, weak access control, missing tests, or code that does not follow the existing architecture.

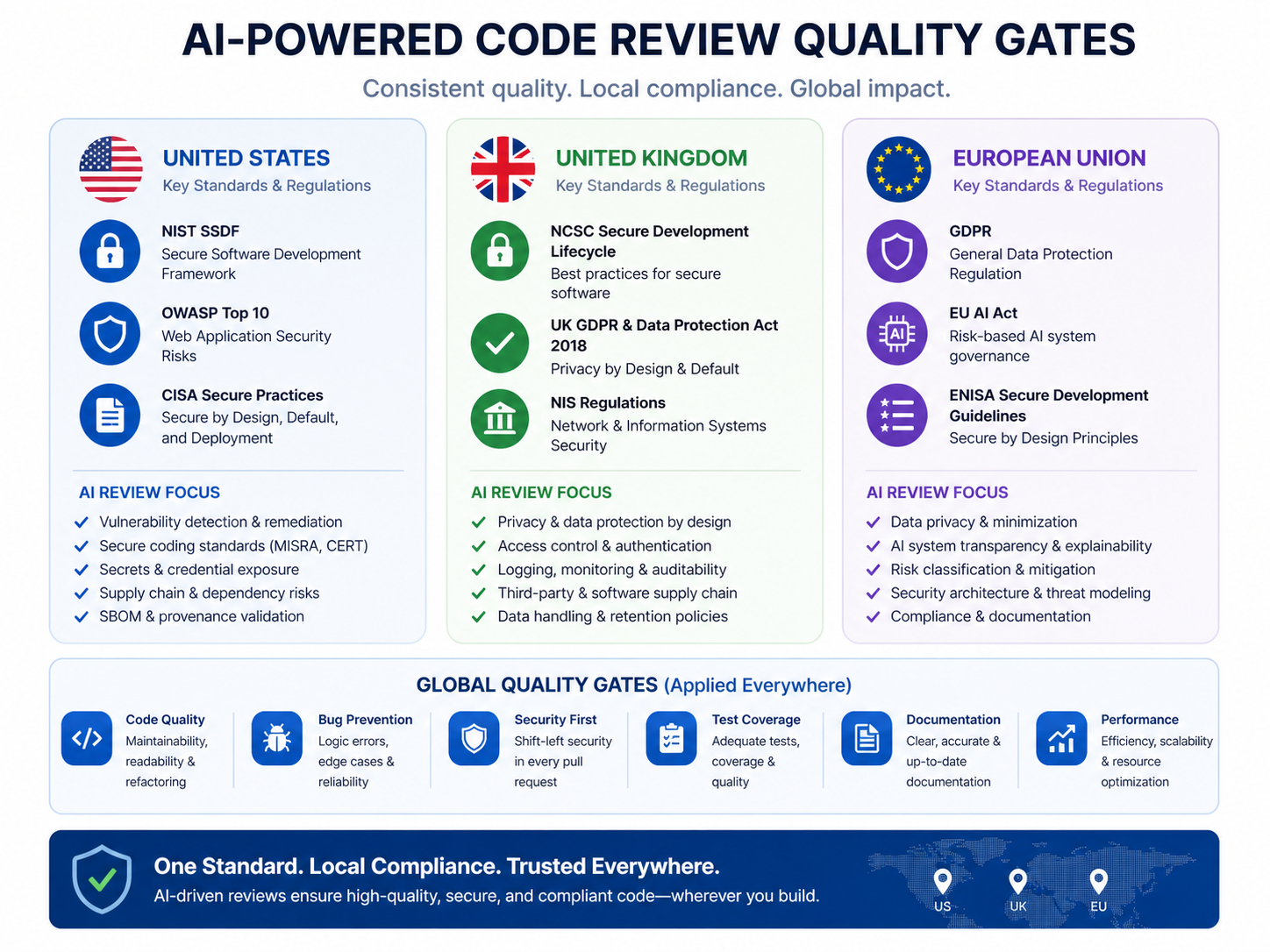

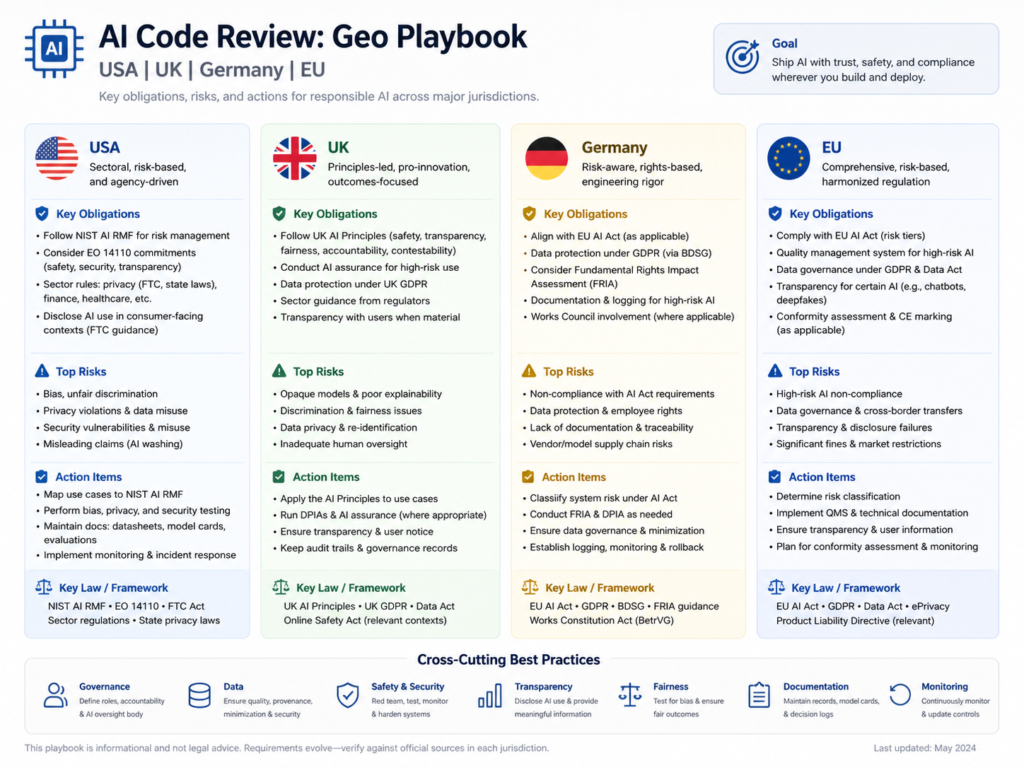

For companies in the US, UK, Germany, and the wider EU, the real question is not only “Should developers use AI?” It is “How do we review AI-assisted code before it reaches production?”

What Is AI Code Review?

AI code review is the process of inspecting human-written and AI-generated code using automated tools, reviewer workflows, and clear quality standards.

It helps teams identify:

Bugs and logic errors

Security vulnerabilities

Unsafe dependencies

Secrets or sensitive data exposure

Code smells and duplicated logic

Missing tests

Policy or compliance gaps

Maintainability issues

Traditional peer review depends mainly on developers reading the code. AI code review adds automated support through static analysis, AI PR comments, SAST, SCA, secrets scanning, and CI/CD checks.

That does not remove the need for senior reviewers. It gives them cleaner pull requests and lets them focus on architecture, risk, business logic, and production impact.

AI Code Review vs Traditional Peer Review

Traditional peer review is still valuable because humans understand product context, customer impact, and long-term architecture better than tools.

AI code review improves the process by catching repeatable issues earlier. For example, automated checks can flag missing tests, risky dependency updates, insecure input handling, or inconsistent formatting before a senior engineer even opens the pull request.

A good workflow uses both.

| Review Type | Best For |
|---|---|
| Automated AI code review | Repeated patterns, test gaps, security hints, maintainability checks |
| Static analysis | Code quality, complexity, duplication, style, deterministic rules |
| SAST/SCA tools | Security flaws, vulnerable dependencies, license issues |
| Human review | Architecture, business logic, privacy impact, production risk |

For backend-heavy teams working in Python or Node.js, this mixed approach can reduce review noise and make standards easier to enforce.

The Biggest Risks of AI-Generated Code Quality

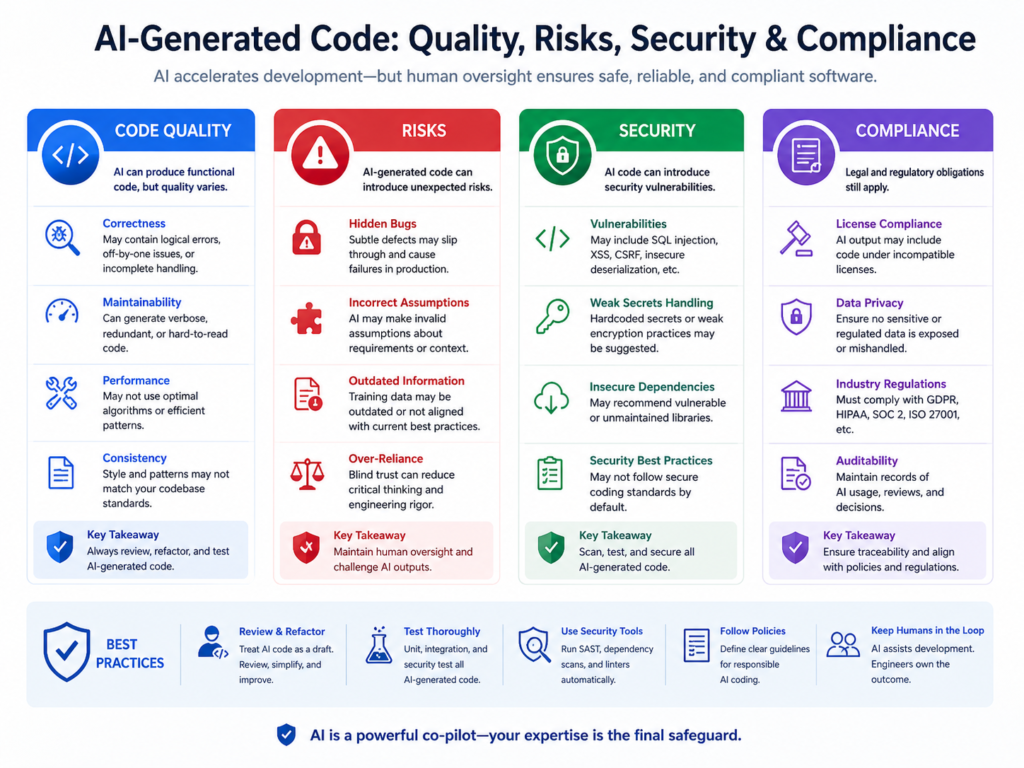

AI-generated code should never be shipped without review. The risk is not that AI is always wrong. The risk is that AI can be confidently incomplete.

Security defects and unsafe dependencies

AI tools may suggest outdated libraries, weak authentication logic, poor input validation, or insecure API patterns. They can also produce code that works in a simple test but fails under real production conditions.

That is why software composition analysis, secrets scanning, and SAST should be part of every serious AI-assisted development workflow.

Technical debt and maintainability problems

AI can generate code that solves the immediate task but does not fit your codebase.

Common issues include:

Duplicated helper functions

Inconsistent error handling

Bloated components

Weak naming

Missing observability

Poor separation of concerns

Tests that cover only the happy path

Over time, these small issues slow delivery. Good AI code review checks whether the change follows existing architecture, not just whether it compiles.
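To make the "happy path" problem concrete, here is a minimal sketch in Python. The function `average_latency_ms` and its tests are hypothetical and only illustrate the pattern: the first test is the kind an assistant tends to generate, while the other two are the edge cases a reviewer should ask for.

```python
def average_latency_ms(samples: list[float]) -> float:
    """Return the mean latency, or 0.0 when there are no samples."""
    if not samples:
        # Without this guard an empty list raises ZeroDivisionError,
        # which a happy-path-only test suite would never reveal.
        return 0.0
    return sum(samples) / len(samples)


def test_happy_path() -> None:
    # The typical generated test: valid, non-empty input.
    assert average_latency_ms([10.0, 20.0, 30.0]) == 20.0


def test_empty_input() -> None:
    # Edge case: no samples collected yet.
    assert average_latency_ms([]) == 0.0


def test_single_sample() -> None:
    # Edge case: the smallest non-empty input.
    assert average_latency_ms([42.0]) == 42.0
```

If a pull request ships only the first test, the review comment is simple: what happens with empty input?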

Compliance and audit exposure

Regulated teams need review evidence. A New York healthtech company may need HIPAA-aligned controls. A London fintech may need UK GDPR and FCA-ready audit trails. A Berlin SaaS company may need GDPR, BaFin, DORA, and data residency evidence.

For product teams building dashboards or audit reporting, business intelligence services can help turn review events, test results, and security findings into management-ready reporting.

Quality Gates for AI Code Review

Quality gates are the automated and manual checks a pull request must pass before it can merge.

For AI-generated code, quality gates should be stricter around high-risk areas such as authentication, authorization, payments, encryption, infrastructure, data deletion, and privacy-sensitive workflows.

Core CI/CD quality gates

A practical AI code review pipeline should include:

Unit tests

Integration tests

Coverage thresholds

Linting

Static code analysis

SAST

SCA

Secrets scanning

Container scanning

Infrastructure-as-code scanning

License checks

Human approval for high-risk changes

The goal is not to slow developers down. The goal is to make safe delivery the default path.
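One way to picture these gates is as a single merge decision. The sketch below is an illustration, not a real CI integration: the check names in `REQUIRED_CHECKS` and the `evaluate_gates` function are assumptions, and a real pipeline would read results from its CI system. It covers only a subset of the list above.

```python
from dataclasses import dataclass

# Hypothetical check names mirroring part of the list above.
REQUIRED_CHECKS = (
    "unit_tests",
    "integration_tests",
    "coverage",
    "lint",
    "static_analysis",
    "sast",
    "sca",
    "secrets_scan",
)


@dataclass(frozen=True)
class GateDecision:
    can_merge: bool
    reasons: tuple[str, ...]


def evaluate_gates(
    check_results: dict[str, bool],
    high_risk: bool,
    human_approved: bool,
) -> GateDecision:
    """Decide whether a pull request may merge.

    A check missing from `check_results` counts as a failure, so a scan
    that never ran cannot silently pass the gate.
    """
    reasons = []
    for name in REQUIRED_CHECKS:
        if name not in check_results:
            reasons.append(f"{name}: did not run")
        elif not check_results[name]:
            reasons.append(f"{name}: failed")
    if high_risk and not human_approved:
        reasons.append("human approval required for high-risk change")
    return GateDecision(can_merge=not reasons, reasons=tuple(reasons))


# Usage: every automated check is green, but the change is high-risk
# and nobody has approved it yet, so the merge is blocked.
all_green = {name: True for name in REQUIRED_CHECKS}
decision = evaluate_gates(all_green, high_risk=True, human_approved=False)
print(decision.can_merge)  # False
```

Treating a missing result as a failure is a deliberate choice here: it keeps "the scanner was skipped" from looking the same as "the scanner found nothing".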

Pull request quality gates for AI-assisted development

Every AI-assisted PR should include a short reviewer note covering:

What was AI-generated

What was manually changed

What tests were run

What risk tier applies

Which files or modules need extra attention
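The note above can also be captured as structured data, so a bot or CI step can reject incomplete notes. This is a rough sketch only: the `ReviewerNote` class, its field names, and the three tier names are assumptions chosen for illustration.

```python
from dataclasses import dataclass, field

RISK_TIERS = ("low", "medium", "high")  # assumed tier names


@dataclass
class ReviewerNote:
    ai_generated: str      # what was AI-generated
    manually_changed: str  # what was manually changed ("none" is a valid answer)
    tests_run: list[str]   # what tests were run
    risk_tier: str         # which risk tier applies
    attention_paths: list[str] = field(default_factory=list)  # files or modules needing extra attention

    def problems(self) -> list[str]:
        """Return reasons the note is incomplete; an empty list means it is fine."""
        found = []
        if not self.ai_generated.strip():
            found.append("describe what was AI-generated")
        if not self.manually_changed.strip():
            found.append("describe what was manually changed")
        if not self.tests_run:
            found.append("list the tests that were run")
        if self.risk_tier not in RISK_TIERS:
            found.append(f"risk tier must be one of {RISK_TIERS}")
        if self.risk_tier == "high" and not self.attention_paths:
            found.append("high-risk notes must name files or modules needing extra attention")
        return found
```

A PR template with the same five fields gets most of the benefit; the structured version simply makes the note checkable.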

For example, a San Francisco SaaS team may allow AI-assisted UI copy changes with normal review. But changes to auth, tenant isolation, payments, or encryption should require senior engineering or AppSec approval.
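A rule like this can be approximated from the changed file paths. The sketch below assumes a repository where sensitive code lives under directories named `auth`, `tenancy`, `payments`, or `crypto`; those names and the `required_approval` function are hypothetical and would need to match your own layout.

```python
from pathlib import PurePosixPath

# Hypothetical directory names for high-risk areas.
HIGH_RISK_DIRS = frozenset({"auth", "tenancy", "payments", "crypto"})


def required_approval(changed_files: list[str]) -> str:
    """Return "senior-or-appsec" if any file sits under a high-risk directory."""
    for path in changed_files:
        # Look at directory components only, not the file name itself.
        directories = PurePosixPath(path).parts[:-1]
        if HIGH_RISK_DIRS.intersection(directories):
            return "senior-or-appsec"
    return "normal"


# Usage: a UI copy change alone needs normal review, but touching a
# payments module in the same PR raises the bar for the whole PR.
print(required_approval(["web/copy/home.json"]))  # normal
print(required_approval(["web/copy/home.json", "services/payments/refund.py"]))  # senior-or-appsec
```

Path matching is only a first filter. A change can affect authorization without touching an `auth` directory, which is one reason the reviewer note still asks for an explicit risk tier.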

Enterprise DevOps quality gates

Enterprise teams should enforce:

Protected branches

Required approvals

Signed commits where appropriate

Mandatory CI/CD checks

Security scan completion

Release evidence collection

Role-based access controls

Audit logs

This matters for SOC 2, ISO 27001, PCI DSS, HIPAA, GDPR, UK GDPR, and internal risk committees.
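For release evidence, it helps to decide early what a single record looks like. The following is a minimal sketch of one possible shape; the `ReviewEvidence` schema and its field names are assumptions, not a format required by any of the frameworks above.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ReviewEvidence:
    """One audit record per merged pull request (hypothetical schema)."""

    pull_request: str           # PR identifier as shown by the Git host
    commit_sha: str             # the exact commit that was reviewed
    reviewers: tuple[str, ...]  # named approvers
    checks: dict[str, str]      # check name -> "passed" or "failed"
    approved_at: str            # ISO 8601 timestamp of the final approval


def to_audit_json(evidence: ReviewEvidence) -> str:
    """Serialize with sorted keys so records diff cleanly over time."""
    return json.dumps(asdict(evidence), sort_keys=True, indent=2)


def failed_checks(evidence: ReviewEvidence) -> list[str]:
    """Names of checks that did not pass, for the audit summary."""
    return sorted(
        name for name, status in evidence.checks.items() if status != "passed"
    )
```

Storing the commit SHA alongside the approval matters: it ties the evidence to the code that was actually reviewed, not to whatever was pushed afterward.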

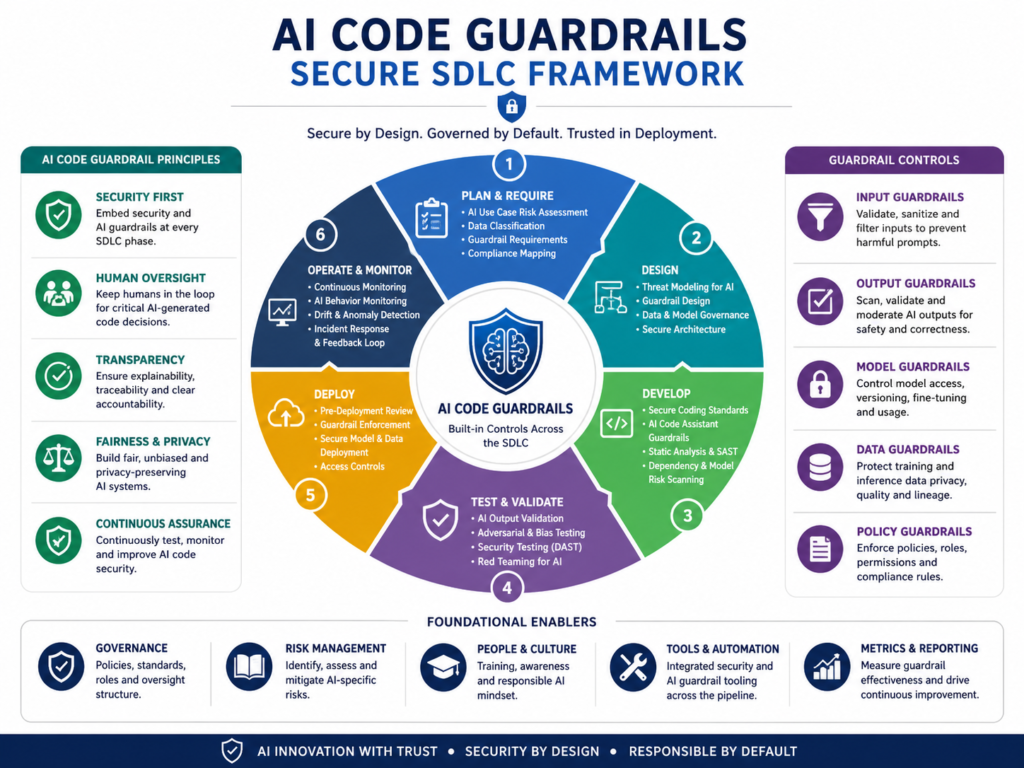

AI Code Guardrails for Secure SDLC

AI code guardrails define what developers can generate, which tools they can use, how code must be reviewed, and what evidence must be captured before release.

A vague “use AI responsibly” policy is not enough. Guardrails need to be specific, enforceable, and developer-friendly.

Developer and reviewer policies

A practical AI coding policy should define:

Approved AI tools

Prohibited data inputs

Code ownership rules

Review standards

Risk tiers

Escalation paths

Data retention expectations

Vendor approval requirements

Developers should not paste secrets, customer data, regulated health data, payment data, or proprietary source code into unapproved tools.
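A lightweight local check can catch the most obvious cases before text leaves a developer's machine. The sketch below matches only two widely documented credential shapes (AWS access key IDs and PEM private key headers). The `find_secret_like_strings` helper is hypothetical and is not a substitute for a dedicated secrets scanner, which covers many more formats.

```python
import re

# Two well-known credential shapes; real scanners cover far more.
SECRET_PATTERNS = {
    # AWS access key IDs: "AKIA" followed by 16 uppercase letters or digits.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # PEM headers such as "-----BEGIN RSA PRIVATE KEY-----".
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def find_secret_like_strings(text: str) -> list[str]:
    """Return the names of the patterns that match anywhere in `text`."""
    return [
        name for name, pattern in SECRET_PATTERNS.items() if pattern.search(text)
    ]
```

A check like this cannot detect customer data, health data, or proprietary logic, so it supports the policy rather than replacing it.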

Secure SDLC controls

Secure SDLC controls should map AI-assisted changes to known frameworks and internal policies.

For application security, OWASP Top 10 and OWASP ASVS are useful foundations. For EU and financial-sector organizations, NIS2 and DORA may also influence resilience, vendor risk, incident reporting, and operational controls.

In Germany, the wider EU, and neighboring Switzerland, teams in Munich, Frankfurt, Amsterdam, Paris, Dublin, and Zurich should also consider GDPR (DSGVO in German), cloud region selection, vendor auditability, and data residency requirements.

Human-in-the-loop review

Human-in-the-loop review is essential when code affects identity, privacy, finance, healthcare, or safety.

A London NHS supplier, Manchester fintech, or Berlin insurance platform should require named reviewers, documented approvals, and traceable CI/CD evidence for high-risk pull requests.

Mak It Solutions also covers related AI governance thinking in its article on human-in-the-loop AI workflows.

Best AI Code Review Tools and Platforms

The best AI code review tools combine deterministic checks, AI-assisted explanations, security scanning, and enforceable quality gates inside pull requests and CI/CD pipelines.

The right choice depends on your stack, risk level, compliance needs, and developer workflow.

What to compare before choosing a tool

Compare tools based on:

Accuracy

False-positive handling

Explainability

Repository permissions

CI/CD integration

Audit logs

Policy controls

Language support

Developer experience

Security depth

A good tool should help developers act faster. It should not bury them under vague comments.

Common tool categories

| Tool Category | Use Case |
|---|---|
| Static analysis | Maintainability, complexity, code smells, duplication |
| AI PR review | Context-aware comments, explanations, reviewer assistance |
| SAST | Security flaws in custom code |
| SCA | Vulnerable dependencies and license risks |
| Secrets scanning | Exposed keys, tokens, and credentials |
| CI/CD policy tools | Merge rules, approvals, release gates |

Examples often used by engineering teams include SonarQube, CodeRabbit, Checkmarx, Snyk, JetBrains Qodana, and GitHub-native workflows.

Teams building React, TypeScript, Laravel, Python, Node.js, Flutter, or cloud-native systems should connect review tools directly to PRs and CI/CD.

GEO Playbook

AI code review requirements vary by region because compliance expectations, buyer concerns, cloud hosting needs, and audit standards vary.

A single global workflow can work, but the evidence pack may differ by market.

USA

US SaaS teams in Austin, Seattle, New York, Boston, and San Francisco often focus on fast delivery plus audit evidence.

Common concerns include:

SOC 2

HIPAA

PCI DSS

FedRAMP, where relevant

Customer security reviews

Vendor risk questionnaires

For healthcare, access control and logging need extra care. For payment-related systems, PCI DSS evidence matters.

UK

UK teams in London, Manchester, and Cambridge should align AI code review with privacy, financial services, and public-sector expectations where relevant.

Common concerns include:

UK GDPR

FCA expectations

Open Banking security

NHS digital assurance

Privacy impact evidence

Pull request history, reviewer approvals, test results, and security scan records can help support audit and buyer confidence.

Germany and EU

Teams in Berlin, Munich, Hamburg, Frankfurt, Amsterdam, Paris, Dublin, and Zurich often need stronger documentation around data processing, hosting, vendor access, and employee-facing tooling.

Common concerns include:

GDPR (known in Germany as DSGVO)

BaFin expectations

DORA

NIS2

EU AI Act principles

Data residency

Works council considerations, where applicable

For cloud deployments, review AWS, Microsoft Azure, and Google Cloud regions against privacy, security, and customer requirements.

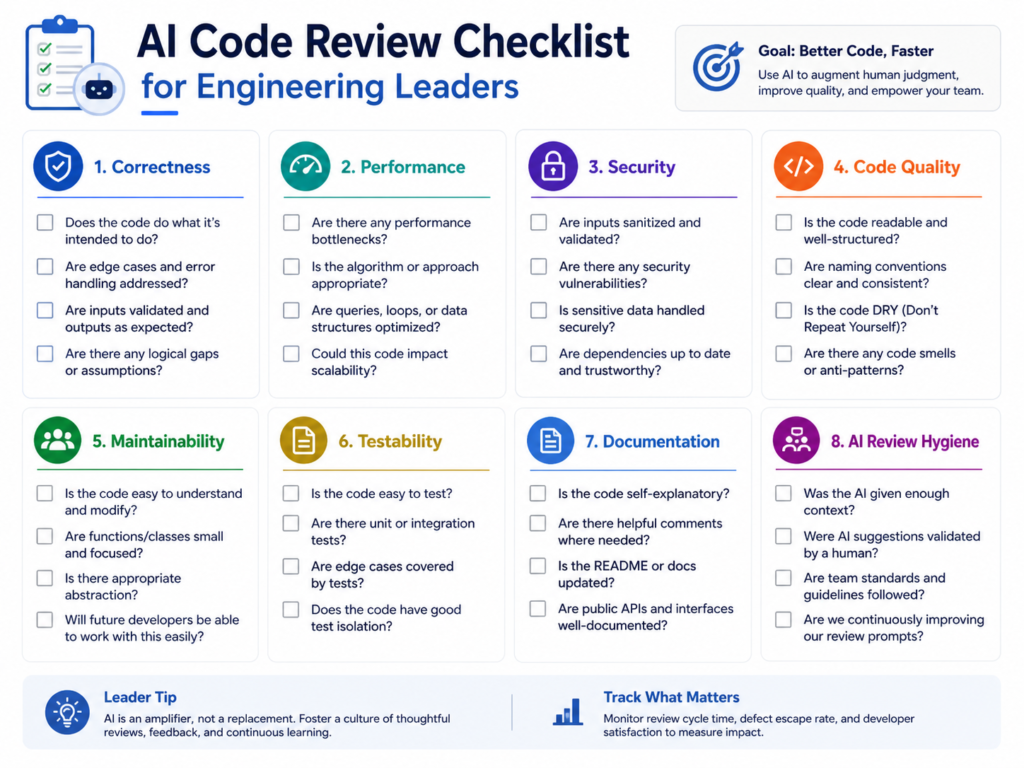

AI Code Review Checklist for Engineering Leaders

An AI code review checklist helps leaders standardize safe delivery without blocking useful AI adoption.

Governance checklist

Define approved AI coding tools

Set clear data handling rules

Assign reviewer ownership

Create risk tiers

Document escalation rules

Train developers on safe usage

Review vendor terms and access controls

Technical checklist

Require automated tests

Set coverage thresholds

Run static code analysis

Run SAST and SCA

Enable secrets scanning

Review dependencies and licenses

Protect main branches

Require human approval for high-risk changes

Store CI/CD evidence with the PR or release record

Buyer and compliance checklist

Confirm audit log availability

Check reporting features

Review role-based access controls

Validate language support

Confirm CI/CD integration

Review hosting and data retention settings

Make sure developers will actually use the tool

A tool that developers ignore will not improve AI-generated code quality. Adoption matters as much as vendor selection.

Final Thoughts

AI code review gives engineering teams a practical way to use AI-assisted development without letting quality, security, or compliance slip.

The best approach is simple: define guardrails, connect review tools to CI/CD, enforce quality gates, and keep human approval for high-risk changes. That is how AI code review becomes a reliable engineering control instead of another loose process.

Planning to introduce AI-assisted development without losing control of quality, security, or compliance? Mak It Solutions can help you design a practical AI code review workflow, connect it to CI/CD, and build the right evidence trail for US, UK, German, and EU buyers.

Start with a scoped consultation through the Mak It Solutions contact page and request an AI code review readiness assessment.

Key Takeaways

AI code review is now essential because AI-assisted development increases code volume and verification pressure.

Strong quality gates should include automated tests, static analysis, SAST, SCA, secrets scanning, dependency checks, and human approval for high-risk changes.

Regulated teams need review evidence for frameworks such as SOC 2, HIPAA, PCI DSS, GDPR, UK GDPR, ISO 27001, DORA, and NIS2.

Tools such as SonarQube, CodeRabbit, Checkmarx, Snyk, JetBrains Qodana, and GitHub-native workflows work best when they are integrated into pull requests and CI/CD.

Vendor choice matters, but governance and developer adoption matter more.

FAQs

Q: Can AI code review replace human code reviewers?

A: No. AI code review can reduce manual effort, catch repeatable issues, and explain common risks, but it should not replace human reviewers. Senior engineers still need to validate architecture, business logic, privacy impact, security tradeoffs, and production risk.

Q: How do AI code review tools reduce technical debt?

A: AI code review tools reduce technical debt by flagging duplicated logic, inconsistent patterns, weak naming, missing tests, and maintainability problems before merge. They work best when connected to quality gates, so repeated problems must be fixed before code enters the main branch.

Q: What should be included in an AI-generated code review checklist?

A: An AI-generated code review checklist should include test results, coverage, static code analysis, SAST, SCA, secrets scanning, dependency review, license checks, data handling review, and human approval for high-risk areas.

Q: Are AI code review tools safe for regulated industries?

A: AI code review tools can be safe for regulated industries when deployed with approved vendors, access controls, data protection rules, audit logs, and human oversight. Healthcare, fintech, banking, and public-sector teams should review vendor data processing terms, hosting regions, retention settings, and security certifications before using AI tools on sensitive repositories.

Q: How can teams prove AI-generated code was reviewed for compliance?

A: Teams can prove review by keeping pull request history, CI/CD logs, test results, SAST/SCA reports, reviewer approvals, risk-tier labels, and release evidence. This evidence should show who reviewed the code, which checks ran, what failed, what was fixed, and when approval happened.