AI-Generated Code Security: Enterprise Guide

AI-generated code security is the discipline of reviewing, testing, scanning, and governing AI-written code before it reaches production. For enterprise teams in the US, UK, Germany, and wider EU, the safest rule is simple: treat every AI output as untrusted until humans, automated tools, and CI/CD security gates validate it.

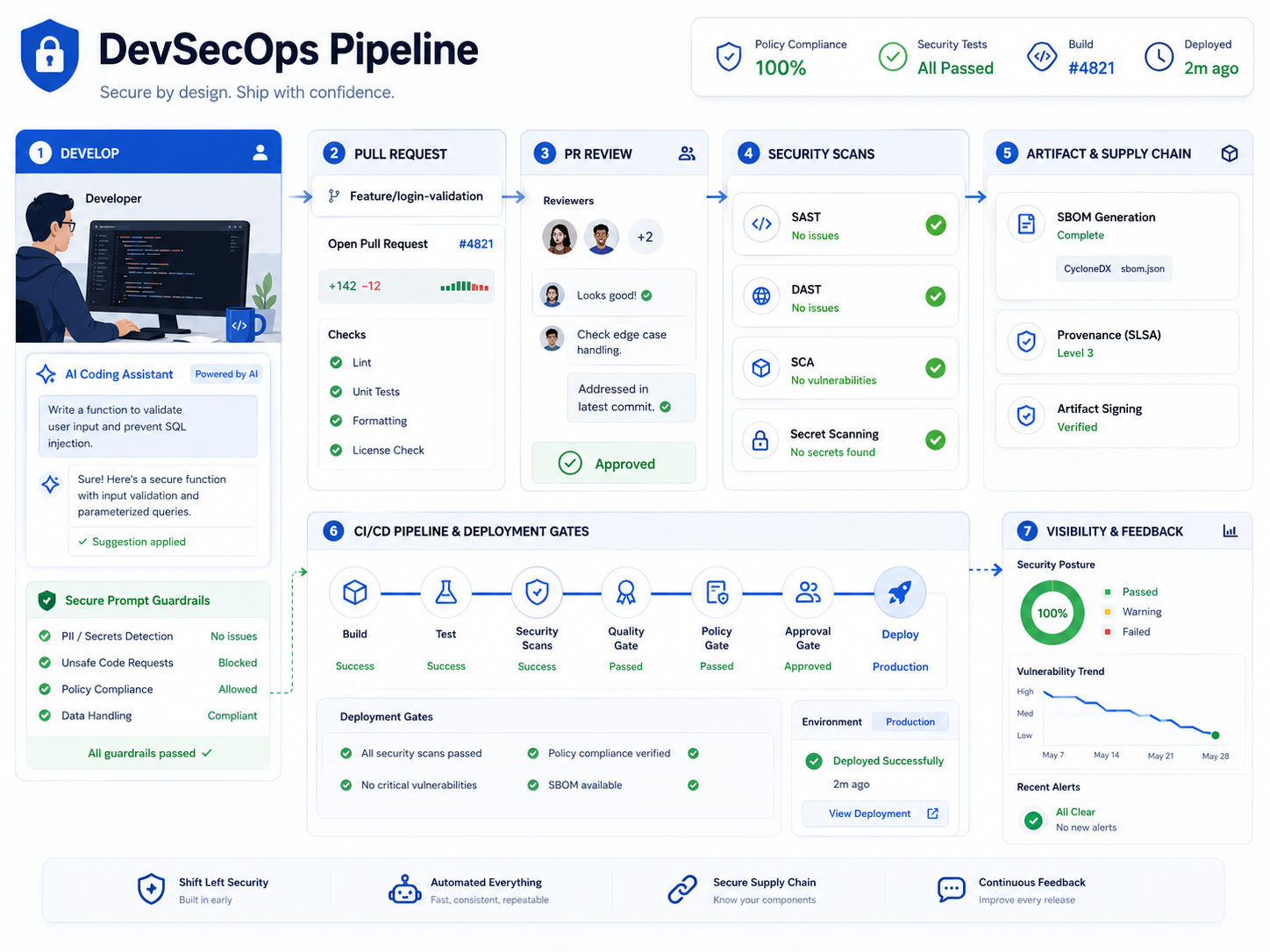

That does not mean blocking AI coding assistants. It means using them inside a controlled workflow, with secure prompts, approved tools, peer review, SAST, DAST, SCA, secret scanning, SBOMs, and audit-ready evidence.

The adoption curve is already too large to ignore. Stack Overflow’s 2024 Developer Survey reported that 76% of respondents were using or planning to use AI tools in development, up from 70% the previous year. Active AI-tool usage also rose to about 62%, compared with 44% the year before.

What Is AI-Generated Code Security?

AI-generated code security means protecting software from risks introduced when tools like GitHub Copilot, Cursor, Claude Code, Gemini Code Assist, or other LLM coding assistants generate code, tests, configs, scripts, or infrastructure files.

Think of AI-written code like fast third-party code or junior-developer code: useful, often productive, but never automatically safe.

AI-Assisted Development vs. Traditional Coding Risk

Traditional coding risk usually comes from rushed releases, weak reviews, poor dependency management, or human mistakes. AI-assisted development adds another layer: the code may look clean, but still contain outdated logic, weak validation, broken authorization, or a package nobody should be using.

For example, a New York SaaS team might ask AI to scaffold a payment workflow. A London fintech team might use it to generate Open Banking API logic. A Berlin healthtech team might ask for a patient-facing portal component. In every case, the output still needs secure design review, threat modeling, compliance checks, and production-grade testing.

Where LLM Code Generation Security Fits in Secure SDLC

LLM code generation security belongs inside the Secure SDLC, not beside it. The safest teams connect AI-assisted coding to requirements, architecture review, branch protection, pull requests, test coverage, dependency review, and deployment gates.

Mak It Solutions’ work across backend development services, React development services, and Node.js development services can support this practical “secure by workflow” approach.

Biggest AI-Generated Code Security Risks

The biggest AI-generated code security risks include insecure logic, vulnerable dependencies, leaked secrets, license uncertainty, prompt injection, and overreliance on unverified output.

These risks become more serious when developers paste credentials, customer records, regulated data, proprietary architecture, or confidential business logic into unapproved AI tools.

Prompt Injection, Insecure Output, and Hallucinated Logic

Prompt injection can manipulate an AI assistant through hidden instructions in comments, README files, tickets, documentation, or dependency content. OWASP lists prompt injection, insecure output handling, and supply chain vulnerabilities among the major risks for LLM applications.

Hallucinated logic can be just as risky. An AI tool may invent a function, misunderstand a library, skip authorization checks, or generate error handling that leaks too much information.
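The snippet below is a small, hypothetical illustration of the authorization and error-handling gaps reviewers should look for. It contrasts a plausible-looking lookup that returns any record by ID with a reviewed version that checks ownership and returns a generic error. The `Invoice` type, the in-memory store, and both function names are invented for this sketch.

```python
from dataclasses import dataclass


@dataclass
class Invoice:
    invoice_id: int
    owner_id: int
    amount_cents: int


# Hypothetical in-memory store standing in for a real database.
INVOICES = {
    1: Invoice(invoice_id=1, owner_id=100, amount_cents=5000),
    2: Invoice(invoice_id=2, owner_id=200, amount_cents=7500),
}


class NotFound(Exception):
    """Raised with a generic message so callers learn nothing about other users' data."""


def get_invoice_unsafe(invoice_id: int) -> Invoice:
    # Typical unreviewed output: no ownership check, and the error message
    # leaks internal details such as the table name.
    invoice = INVOICES.get(invoice_id)
    if invoice is None:
        raise KeyError(f"invoice {invoice_id} missing from table 'invoices'")
    return invoice


def get_invoice(invoice_id: int, current_user_id: int) -> Invoice:
    # Reviewed version: enforce object-level authorization and treat
    # "not yours" exactly like "does not exist".
    invoice = INVOICES.get(invoice_id)
    if invoice is None or invoice.owner_id != current_user_id:
        raise NotFound("invoice not found")
    return invoice
```

With this data, user 100 can read invoice 1, but `get_invoice(2, 100)` raises `NotFound`, while the unsafe version hands invoice 2 to anyone who asks.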

Vulnerable Dependencies, Secrets, and Supply Chain Exposure

AI coding assistants often suggest packages that are outdated, unnecessary, poorly maintained, or risky. A San Francisco SaaS team might generate a Node.js API in minutes, but if that output includes a vulnerable package or hardcoded token, speed turns into liability.

GitHub reported that developers used secret scanning to detect more than 39 million secret leaks in 2024. That scale shows why secret scanning, dependency review, and software supply chain security should be standard for AI-assisted work.
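To show the basic idea behind secret scanning, here is a minimal sketch that checks only the lines a unified diff adds. The rule names and the generic-assignment pattern are illustrative assumptions; the AWS access key ID prefix (`AKIA`), the GitHub classic token prefix (`ghp_`), and PEM private key headers are well-known formats, but managed scanners such as GitHub secret scanning use far larger, provider-verified rule sets.

```python
import re
from typing import Iterator, NamedTuple

# Illustrative rules only; not a substitute for a managed secret scanner.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github_pat": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "private_key": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    "generic_assignment": re.compile(
        r"(?i)\b(?:api_key|secret|password|token)\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}


class Finding(NamedTuple):
    line_no: int  # 1-based line number within the diff text
    rule: str


def scan_added_lines(diff_text: str) -> Iterator[Finding]:
    """Yield a finding for each rule that matches a line the diff adds."""
    for line_no, line in enumerate(diff_text.splitlines(), start=1):
        # Only '+' lines are new code; '+++' is the file header.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                yield Finding(line_no, rule)
```

Scanning only added lines keeps pull request feedback focused on what the AI-assisted change introduced; full-history scans still matter for secrets committed earlier.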

Teams building automation, dashboards, or analytics workflows can also align security with Python development services and business intelligence services.

Compliance, Licensing, and Data Leakage Risks

Compliance risk appears when teams cannot explain how AI-generated code was reviewed, tested, or approved. Licensing risk appears when generated output closely reproduces open-source code whose license obligations are unclear.

For GDPR, UK-GDPR, HIPAA, PCI DSS, SOC 2, ISO 27001, and ISO/IEC 42001 environments, keep sensitive and regulated data out of prompts, retain review evidence, document tool settings, and map AI coding controls to internal policy.

This article is not legal advice. Regulated teams should confirm obligations with legal, compliance, and security stakeholders before rollout.

Secure Prompt-to-Code Pipeline Best Practices

A secure prompt-to-code pipeline limits sensitive context, standardizes prompts, requires human review, scans generated code, checks dependencies, and blocks risky changes before merge.

The goal is not to slow developers down. The goal is to make unsafe AI-written code difficult to ship.

Start with Secure Prompts and Approved Context

Define what developers can and cannot share with AI tools. Approved prompts should never include:

Secrets, API keys, passwords, and private keys

Customer data, PHI, payment data, or production records

Confidential architecture and unreleased business logic

Proprietary algorithms or sensitive contracts

Real incident data unless it has been sanitized

A Berlin healthtech team, for example, should not paste patient data into an AI coding assistant. It can use sanitized schemas, mock data, and approved design patterns instead.
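Policy comes first, but a lightweight pre-filter can catch obvious slips before context leaves the developer's machine. The sketch below assumes a hypothetical `sanitize_prompt` hook run before text is sent to an assistant; it replaces email addresses and Luhn-valid card numbers with mock placeholders. It is deliberately narrow and does not detect PHI, names, or free-text identifiers.

```python
from __future__ import annotations

import re

EMAIL = re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b")
# Runs of 13 to 19 digits, optionally separated by single spaces or hyphens.
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")


def luhn_valid(digits: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def sanitize_prompt(text: str) -> str:
    """Replace emails and Luhn-valid card numbers with mock placeholders."""
    text = EMAIL.sub("<EMAIL>", text)

    def _card(match: re.Match) -> str:
        digits = re.sub(r"[ -]", "", match.group())
        # The Luhn check cuts false positives on order IDs and phone numbers.
        return "<CARD>" if luhn_valid(digits) else match.group()

    return CARD_CANDIDATE.sub(_card, text)
```

For example, `sanitize_prompt("Contact jane.doe@example.com, card 4111 1111 1111 1111")` yields `"Contact <EMAIL>, card <CARD>"`, using the standard Visa test number.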

Add Human Review Before Merge

Human review connects business intent, security judgment, and architecture context. Reviewers should ask:

Does the code enforce authorization correctly?

Is input validated and output encoded safely?

Are secrets, tokens, or keys exposed?

Did the AI introduce unnecessary dependencies?

Does the logic match the actual product requirement?

For mobile app development services, this is especially important because generated client-side code may expose API keys, trust the device too much, or weaken authentication flows.

Build CI/CD Gates for AI-Generated Code

CI/CD gates should run SAST, DAST, SCA, secret scanning, IaC testing, test coverage, and policy checks before merge or deployment. Mak It Solutions’ guide on AI CI/CD automation for safer releases is a strong companion resource for this workflow.

Clear blocking rules help developers move faster with fewer debates:

| Control Area | Recommended Gate |
|---|---|
| Vulnerabilities | No critical issues before merge |
| Secrets | No exposed credentials or private keys |
| Dependencies | No risky packages without review |
| Licenses | No unapproved licenses |
| Tests | No failing required tests |
| Infrastructure | No unsafe IaC changes without approval |
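The blocking rules in the table can be expressed as a small policy function, which keeps the decision consistent across repositories. This is a sketch under the assumption that earlier CI steps have already rolled scanner output into a summary object; the `ScanSummary` fields are hypothetical, and real tools report results in their own formats.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ScanSummary:
    """Hypothetical roll-up of CI results; real field names depend on your tools."""
    critical_vulns: int = 0
    exposed_secrets: int = 0
    unreviewed_risky_packages: int = 0
    unapproved_licenses: list[str] = field(default_factory=list)
    failing_required_tests: int = 0
    unapproved_iac_changes: int = 0


def evaluate_gates(s: ScanSummary) -> list[str]:
    """Apply the table's blocking rules; an empty list means the gate passes."""
    reasons: list[str] = []
    if s.critical_vulns:
        reasons.append(f"vulnerabilities: {s.critical_vulns} critical finding(s)")
    if s.exposed_secrets:
        reasons.append(f"secrets: {s.exposed_secrets} exposed credential(s)")
    if s.unreviewed_risky_packages:
        reasons.append(f"dependencies: {s.unreviewed_risky_packages} risky package(s) not reviewed")
    if s.unapproved_licenses:
        reasons.append("licenses: " + ", ".join(sorted(s.unapproved_licenses)))
    if s.failing_required_tests:
        reasons.append(f"tests: {s.failing_required_tests} required test(s) failing")
    if s.unapproved_iac_changes:
        reasons.append(f"infrastructure: {s.unapproved_iac_changes} IaC change(s) awaiting approval")
    return reasons
```

A CI job could print the returned reasons and exit non-zero whenever the list is non-empty, so developers see exactly which rule blocked the merge.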

Tools and Testing for AI Code Security

The best AI code security stack combines human review, SAST, DAST, SCA, secret scanning, SBOM generation, CI/CD policy gates, and developer education.

No single tool solves AI-generated code security. Teams need layered validation.

SAST, DAST, SCA, Secret Scanning, and IaC Testing

SAST checks source code. DAST tests running applications. SCA reviews open-source dependencies. Secret scanning detects exposed credentials. IaC testing checks cloud templates and infrastructure changes.

Together, these controls catch different failure modes before they become production incidents.

Common options include GitLab security features, Snyk, Veracode, SonarSource, Black Duck, GitHub Advanced Security, and cloud-native tooling across AWS, Azure, and Google Cloud.
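IaC testing is easiest to picture with a single rule. The sketch below flags ingress rules that open SSH or RDP to the whole internet. The rule dictionaries loosely mirror the `from_port`, `to_port`, `protocol`, `cidr_blocks`, and `ipv6_cidr_blocks` arguments of Terraform's `aws_security_group` ingress blocks; it assumes the rules have already been extracted (for example from plan output), and that extraction step is not shown. Dedicated IaC scanners cover many more misconfigurations.

```python
from __future__ import annotations

ADMIN_PORTS = {22, 3389}  # SSH and RDP; extend for your environment
WORLD = {"0.0.0.0/0", "::/0"}


def exposes_admin_port(rule: dict) -> bool:
    """True if an ingress rule opens an admin port to any address."""
    cidrs = set(rule.get("cidr_blocks", [])) | set(rule.get("ipv6_cidr_blocks", []))
    if not cidrs & WORLD:
        return False
    if rule.get("protocol") == "-1":
        return True  # in AWS security groups, "-1" means all protocols and ports
    ports = range(rule["from_port"], rule["to_port"] + 1)
    return any(port in ports for port in ADMIN_PORTS)


def risky_ingress(rules: list[dict]) -> list[dict]:
    """Return the rules that should block the change until someone approves it."""
    return [rule for rule in rules if exposes_admin_port(rule)]
```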

SBOMs and Dependency Review for AI-Written Code

SBOMs help teams understand what components ship with an application. They are especially useful when AI generates package files, Dockerfiles, Terraform modules, CI scripts, or deployment manifests.
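Once an SBOM exists, it can drive automated checks. This sketch reads a CycloneDX JSON SBOM, where components carry a `licenses` array of `{"license": {"id": ...}}`, `{"license": {"name": ...}}`, or `{"expression": ...}` entries, and reports anything outside an allowlist. The allowlist itself is an example, not a recommendation, and compound SPDX expressions are simply flagged for manual review rather than parsed.

```python
from __future__ import annotations

import json

ALLOWED_LICENSES = {"MIT", "Apache-2.0", "BSD-3-Clause", "ISC"}  # example allowlist


def component_licenses(component: dict) -> list[str]:
    """Collect SPDX IDs, license names, or expressions declared on a component."""
    found = []
    for entry in component.get("licenses", []):
        if "expression" in entry:
            found.append(entry["expression"])  # e.g. "MIT OR Apache-2.0"
        else:
            lic = entry.get("license", {})
            found.append(lic.get("id") or lic.get("name") or "UNKNOWN")
    return found


def license_violations(sbom_json: str) -> list[tuple[str, str]]:
    """Return (name@version, license) pairs that are not on the allowlist.

    Components that declare no license at all are reported as "NONE".
    """
    sbom = json.loads(sbom_json)
    violations = []
    for comp in sbom.get("components", []):
        ref = f"{comp.get('name')}@{comp.get('version', '?')}"
        for lic in component_licenses(comp) or ["NONE"]:
            if lic not in ALLOWED_LICENSES:
                violations.append((ref, lic))
    return violations
```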

Dependency review should check package age, maintainer activity, license, known vulnerabilities, transitive dependencies, and whether the package is even necessary.
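The review criteria above can be turned into a first-pass triage function that tells a human reviewer where to look. The `PackageInfo` fields and every threshold (90 days, two years, 50 transitive packages) are hypothetical and should be tuned to your own policy; the metadata would come from your package registry and SCA tooling.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass
class PackageInfo:
    # Hypothetical metadata gathered from a registry and an SCA tool.
    name: str
    first_published: date
    last_release: date
    license: str | None
    known_vulns: int
    transitive_count: int
    imported_in_code: bool


def review_concerns(pkg: PackageInfo, today: date, allowed_licenses: set[str]) -> list[str]:
    """Return human-readable concerns; an empty list means no flags were raised."""
    concerns = []
    if (today - pkg.first_published).days < 90:
        concerns.append("very new package: check for typosquatting or a hallucinated name")
    if (today - pkg.last_release).days > 730:
        concerns.append("no release in over two years")
    if pkg.license not in allowed_licenses:
        concerns.append(f"license not approved: {pkg.license}")
    if pkg.known_vulns:
        concerns.append(f"{pkg.known_vulns} known vulnerability(ies)")
    if pkg.transitive_count > 50:
        concerns.append(f"large transitive tree ({pkg.transitive_count} packages)")
    if not pkg.imported_in_code:
        concerns.append("declared but never imported: may be unnecessary")
    return concerns
```

The "very new package" check matters for AI-assisted work in particular, because an assistant may suggest a package name that does not exist yet and could later be registered by an attacker.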

Secure AI Coding Assistants and Enterprise Controls

AI coding assistants differ in data handling, admin governance, IDE integration, context controls, and audit features. Before adoption, compare retention settings, SSO, logging, policy enforcement, audit trails, IP protections, and vendor security posture.

Mak It Solutions’ guide on AI-native development platforms can help teams compare productivity benefits with governance requirements.

Governance and Compliance for Enterprise AI Coding

Enterprise AI coding governance should define who can use AI tools, what data can be shared, how generated code is reviewed, and which evidence is stored for audits.

This turns AI coding from informal experimentation into a controlled engineering practice.
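Audit evidence is more useful when it is structured and tamper-evident. The sketch below builds a JSON record per merged change and stores a SHA-256 digest of each artifact, such as a scan report or SBOM. The record schema, field names, and the idea of one record per pull request are assumptions for illustration; map them to what your auditors and internal policy actually require.

```python
from __future__ import annotations

import hashlib
import json
from datetime import datetime, timezone


def build_evidence_record(pr_id: str, ai_tool: str, reviewers: list[str],
                          artifacts: dict[str, bytes]) -> str:
    """Return a JSON evidence record for one merged change (hypothetical schema)."""
    record = {
        "pr": pr_id,
        "ai_tool": ai_tool,
        "reviewers": sorted(reviewers),
        "recorded_at": datetime.now(timezone.utc).isoformat(),
        # Digest each artifact so a later change to the stored file is detectable.
        "artifact_sha256": {
            name: hashlib.sha256(body).hexdigest()
            for name, body in sorted(artifacts.items())
        },
    }
    return json.dumps(record, indent=2, sort_keys=True)
```

The digests only prove integrity if the records themselves live somewhere developers cannot silently rewrite, such as append-only or access-controlled storage.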

AI Coding Assistant Acceptable Use Policy

An acceptable use policy should define:

Approved AI coding tools

Prohibited data in prompts

Approved prompt patterns

Pull request disclosure rules

Required security scans

Review and approval workflows

Logging and evidence retention

Exception handling

Vendor review requirements

Developer training expectations

Disclosure in pull requests is useful because reviewers know to check for hallucinated logic, copied-looking code, risky package changes, and weak security patterns.
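Disclosure rules are easier to enforce when the pull request template has a fixed shape. This sketch assumes a hypothetical template with an `AI-assisted: yes|no` line and, when the answer is yes, an `AI tool: <name>` line; a CI step could run it against the PR description and check the named tool against the approved list.

```python
from __future__ import annotations

import re

# Hypothetical template lines; adapt the wording to your own PR template.
DISCLOSURE = re.compile(r"^AI-assisted:[ \t]*(yes|no)[ \t]*$", re.IGNORECASE | re.MULTILINE)
TOOL = re.compile(r"^AI tool:[ \t]*(\S.*?)[ \t]*$", re.IGNORECASE | re.MULTILINE)


def check_disclosure(pr_body: str, approved_tools: set[str]) -> list[str]:
    """Return problems with the PR's AI disclosure; an empty list means compliant."""
    pr_body = pr_body.replace("\r\n", "\n")  # PR bodies often use CRLF line endings
    answer = DISCLOSURE.search(pr_body)
    if not answer:
        return ["missing 'AI-assisted: yes|no' line"]
    if answer.group(1).lower() == "no":
        return []
    tool = TOOL.search(pr_body)
    if not tool:
        return ["AI-assisted PR must name the tool ('AI tool: ...')"]
    if tool.group(1).lower() not in {t.lower() for t in approved_tools}:
        return [f"tool not on approved list: {tool.group(1)}"]
    return []
```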

US Controls

US teams in Austin, Seattle, New York, and San Francisco often prioritize SOC 2 evidence, HIPAA safeguards, PCI DSS scope reduction, vendor risk, and cloud governance.

The HIPAA Security Rule requires administrative, physical, and technical safeguards to protect electronic protected health information. PCI DSS provides baseline technical and operational requirements for protecting payment account data.

UK, Germany, and EU Controls

UK teams should align AI coding workflows with UK-GDPR, ICO guidance, FCA expectations for fintech, NHS data handling where relevant, and the UK AI Cyber Security Code of Practice. The UK code sets baseline cyber security principles for AI systems and the organizations that develop or deploy them.

German and EU teams should consider DSGVO/GDPR, BaFin expectations for financial services, BSI guidance, ENISA practices, NIS2, DORA, data residency, and EU AI Act timelines. The EU AI Act entered into force on 1 August 2024 and applies in phases: prohibited AI practices and AI literacy obligations from 2 February 2025, general-purpose AI (GPAI) model obligations from 2 August 2025, most remaining provisions from 2 August 2026, and an extended transition until 2 August 2027 for some high-risk systems.

NIST’s Generative AI Profile can also help enterprises structure risk management for generative AI across governance, mapping, measurement, and management activities.

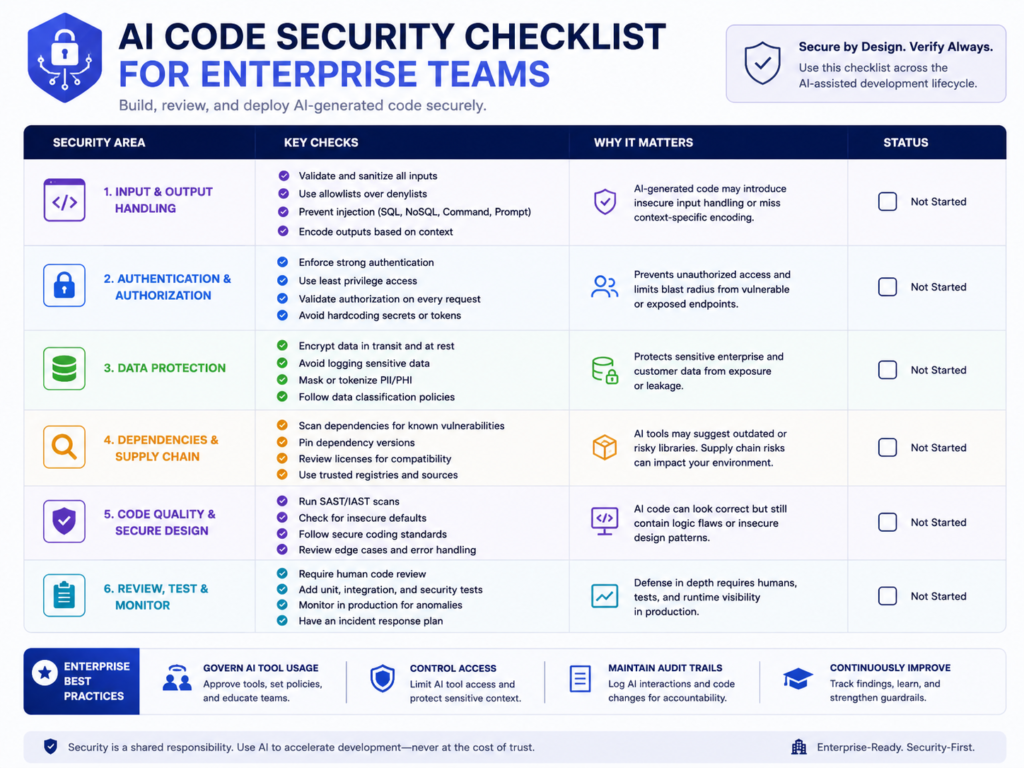

10-Point AI-Generated Code Security Checklist

Use this checklist before scaling AI coding assistants across an engineering team.

Approve AI coding assistants before use.

Ban secrets, credentials, customer data, PHI, and payment data in prompts.

Use sanitized examples and approved context.

Require AI disclosure in pull requests.

Run human review before merge.

Enforce SAST, DAST, SCA, IaC testing, and secret scanning.

Generate and review SBOMs.

Block risky dependencies and licenses.

Store logs and evidence for audits.

Train developers on secure prompt-to-code workflows.

When to Use a DevSecOps Partner

Use a security platform or DevSecOps partner when AI coding is moving faster than your governance, testing, or compliance evidence.

This is especially important for SaaS, healthcare, fintech, cloud, and mobile products where regulated users, customer data, or payment flows are involved.

Mak It Solutions can help connect AI coding workflows with Laravel development services, cloud architecture, secure APIs, analytics, and CI/CD governance.

To Sum Up

AI-generated code security is not about saying no to faster development. It is about giving developers safe tools, clear boundaries, automated checks, and expert support so speed does not become avoidable risk.

Planning to adopt AI coding assistants across your engineering team? Mak It Solutions can help you design a secure prompt-to-code workflow, map controls to compliance needs, and build CI/CD gates that support faster releases.

Request a scoped estimate through the Mak It Solutions contact page and start with a practical AI code security readiness review.

Key Takeaways

AI-generated code security requires policy, review, automation, and evidence.

AI output should be treated as untrusted until validated.

The highest-risk areas are prompt injection, insecure output, vulnerable dependencies, secrets, licensing, and data leakage.

CI/CD gates should include SAST, DAST, SCA, secret scanning, IaC testing, and SBOM review.

US, UK, Germany, and EU teams need region-specific governance for compliance and audit readiness.

FAQs

Q : Can AI-generated code pass a security audit?

A : Yes. AI-generated code can pass a security audit when it is reviewed, tested, scanned, and documented like any other production code. Auditors will care about evidence: pull request review, vulnerability scans, dependency checks, SBOMs, access controls, test results, and policy compliance.

Q : Should developers disclose AI-generated code?

A : Yes, many enterprise teams should require AI-assisted code disclosure in pull requests, especially for regulated systems. Disclosure improves review quality and supports audit trails for SOC 2, ISO 27001, GDPR, UK-GDPR, HIPAA, and PCI DSS environments.

Q : Is GitHub Copilot safe for enterprise software teams?

A : GitHub Copilot and similar assistants can be safe when configured with the right admin controls, data settings, review rules, and security gates. The bigger issue is not one tool alone; it is the workflow around the tool.

Q : What data should never be shared with an AI coding assistant?

A : Developers should never share secrets, API keys, private keys, passwords, customer records, payment data, PHI, confidential contracts, proprietary algorithms, unreleased architecture, or regulated production data with unapproved AI tools.

Q : How often should AI-generated code be reviewed or rescanned?

A : AI-generated code should be reviewed before every merge and rescanned whenever dependencies, models, libraries, build scripts, prompts, or infrastructure files change. CI/CD should run scans automatically on pull requests and before deployment.
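One way to automate "rescan whenever something changes" is to map changed file paths to the scans they should trigger. The pattern table below is a hypothetical starting point, not a standard; changes to models or prompts usually do not show up as file paths, so those still need a process trigger of their own.

```python
from __future__ import annotations

from fnmatch import fnmatchcase
from pathlib import PurePosixPath

# Hypothetical mapping from file-name patterns to the scans they trigger.
RULES: list[tuple[str, set[str]]] = [
    ("package.json", {"sca", "sbom"}),
    ("package-lock.json", {"sca", "sbom"}),
    ("requirements*.txt", {"sca", "sbom"}),
    ("pyproject.toml", {"sca", "sbom"}),
    ("go.mod", {"sca", "sbom"}),
    ("Dockerfile", {"iac", "sca", "sbom"}),
    ("*.tf", {"iac"}),
    ("*.py", {"sast"}),
    ("*.ts", {"sast"}),
    ("*.js", {"sast"}),
]


def scans_for(changed_paths: list[str]) -> set[str]:
    """Return the scans a change set should trigger; secret scanning always runs."""
    scans = {"secrets"}
    for path in changed_paths:
        p = PurePosixPath(path)
        if p.parts[:2] == (".github", "workflows"):
            scans |= {"iac", "sast"}  # CI definitions are code and infrastructure too
        for pattern, triggered in RULES:
            if fnmatchcase(p.name, pattern):  # case-sensitive on every OS
                scans |= triggered
    return scans
```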