AI Pair Programming ROI: CTO Measurement Guide

AI Pair Programming ROI: CTO Measurement Guide

AI Pair Programming ROI: CTO Measurement Guide

AI pair programming ROI is now a board-level question, not just a developer productivity experiment. As GitHub Copilot, Cursor, Claude Code, and enterprise AI coding tools become part of daily engineering workflows, CTOs need a clear way to prove whether these tools are saving time, improving delivery, or quietly adding review and governance overhead.

AI pair programming ROI measures whether AI-assisted development creates more business value than it costs. The best model looks beyond “faster coding” and includes license fees, developer capacity, DORA metrics, code quality, security controls, and compliance risk.

Stack Overflow’s 2025 Developer Survey found that 84% of respondents were using or planning to use AI tools in their development process, up from 76% in 2024. It also reported that 51% of professional developers use AI tools daily. GitHub/MIT research found that developers using Copilot completed a controlled coding task 55.8% faster than the control group.

Useful numbers, yes. But speed alone is not ROI.

Real ROI comes from measuring the full engineering system: people, process, quality, security, compliance, and delivery outcomes.

What Is AI Pair Programming ROI?

AI pair programming ROI is the measurable return a software team gets from AI-assisted coding after accounting for license fees, enablement, governance, security controls, and review effort.

It should include.

Developer productivity

Delivery speed

Code quality

Developer experience

Security and compliance risk

Total adoption cost

In simple terms, AI pair programming ROI answers one question: did AI-assisted software development create more business value than it cost?

AI Pair Programming vs. AI Coding Assistants

AI pair programming is the workflow. A developer collaborates with an AI tool during planning, coding, testing, debugging, documentation, and refactoring.

AI coding assistants are the tools that support that workflow. Examples include GitHub Copilot, Cursor, Claude Code, and model-enabled IDE plugins.

The difference matters because ROI should not be measured only by accepted code suggestions. A New York fintech team may use AI for unit tests and documentation, while a Berlin SaaS team may use it for legacy refactoring under GDPR and DSGVO expectations.

Why CTOs Need ROI Proof Now

CTOs need ROI proof because AI coding spend has moved from individual experimentation to enterprise procurement.

Finance teams want cost justification. CISOs want security review. Legal teams want IP and data-handling controls. Engineering leaders want to know whether the tools actually improve delivery or simply create more code to review.

For Mak It Solutions clients building SaaS, custom platforms, and enterprise apps, the practical approach is simple: define a baseline, run a controlled pilot, measure outcomes, then scale only where the evidence is strong.

Relevant internal resources include web development services and business intelligence services.

How to Calculate AI Pair Programming ROI

To calculate AI pair programming ROI, compare measurable value gains against total adoption costs over a defined period.

A practical formula is.

ROI = (AI-assisted value created − AI adoption cost) ÷ AI adoption cost × 100

Start with a 60- to 90-day pilot. Measure baseline throughput before adoption, then compare similar teams, repositories, or work types after rollout.

AI Coding Assistant ROI Formula for Software Teams

Use this model.

Value created = recovered developer hours + faster delivery value + reduced rework + avoided defects + lower documentation effort

Then subtract.

Total cost = licenses + onboarding + governance + security review + tool administration + extra code review

A San Francisco SaaS team might calculate recovered engineering capacity by measuring pull request cycle time and sprint completion. A London healthtech company may weigh compliance review more heavily because NHS governance and UK-GDPR controls affect tool selection.

Cost Inputs CTOs Should Include

Do not stop at license cost. That gives an incomplete picture.

Common cost inputs include.

Monthly or annual licenses

IDE and repository integration

Developer onboarding

Internal usage policy creation

Legal and procurement review

Vendor risk management

Security testing

Tool administration

Extra senior review time

Audit evidence for regulated teams

Hidden cost matters. If senior engineers spend more time correcting low-quality AI output, the apparent productivity gain can disappear.

Value Outputs That Actually Matter

Value outputs should connect to business outcomes, not vanity metrics.

Useful examples include.

Shorter lead time for changes

Faster test creation

Lower documentation backlog

Fewer reopened tickets

Reduced onboarding time

More stable sprint completion

Better code review preparation

Less repetitive engineering work

AI coding tools are most valuable when they remove real engineering friction. They are less valuable when they simply generate more code into an already slow delivery pipeline.

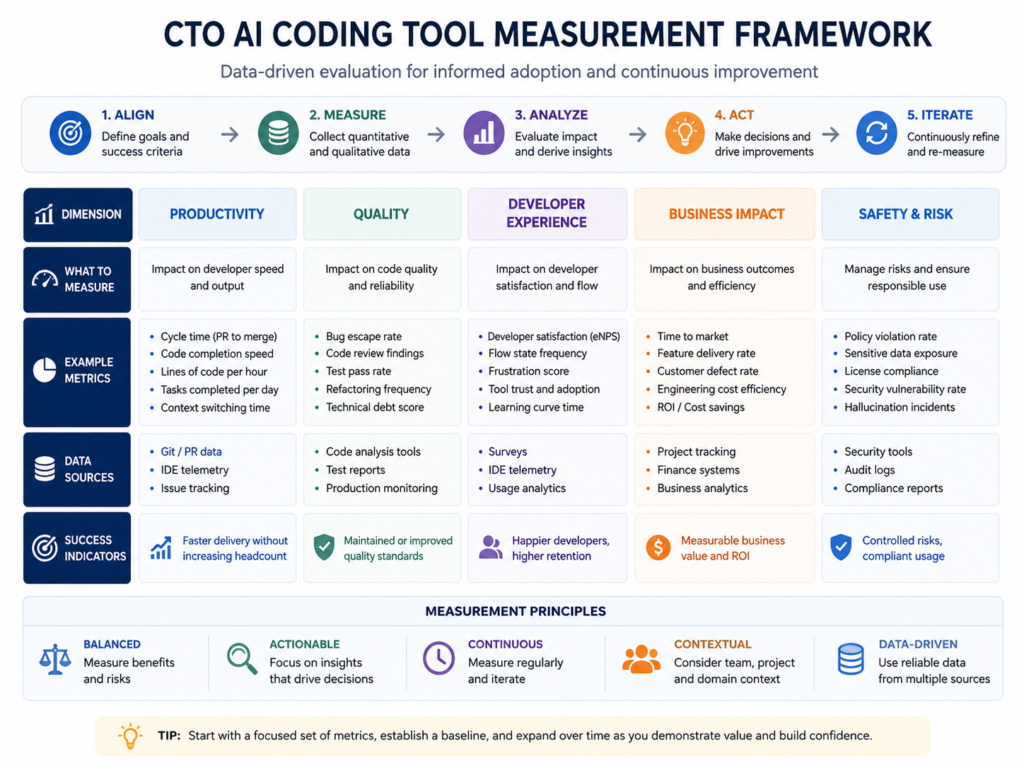

Developer Productivity AI Metrics That Prove Impact

Developer productivity AI metrics should prove whether teams ship better software faster without harming quality, security, or morale.

The strongest CTO dashboard combines DORA, SPACE, DevEx, and AI-specific usage data.

| Metric Area | What to Measure | Why It Matters |

|---|---|---|

| DORA | Deployment frequency, lead time, change failure rate, recovery time | Shows delivery performance |

| SPACE | Satisfaction, performance, activity, collaboration, efficiency | Balances output with team health |

| DevEx | Wait time, context switching, unclear requirements, cognitive load | Shows developer friction |

| AI Usage | Adoption, prompt patterns, generated tests, review burden | Shows whether AI is helping workflows |

DORA Metrics for AI-Assisted Software Development

DORA metrics help connect AI coding work to delivery performance. Track deployment frequency, lead time for changes, change failure rate, and time to restore service.

Google Cloud describes deployment frequency and lead time as velocity indicators, while change failure rate and time to restore service measure stability.

AI-assisted development is valuable when lead time improves without increasing incidents, rollback rates, or production defects.

SPACE and DevEx Metrics for Developer Experience

SPACE metrics look at satisfaction, performance, activity, communication, and efficiency. DevEx focuses on friction: wait time, context switching, unclear requirements, and cognitive load.

This matters because AI can make developers feel faster while creating downstream review burden.

A Manchester engineering team may see happier developers after AI adoption, but still needs to verify whether production quality improved.

AI Code Assistant Productivity Metrics

Track AI-specific metrics, but keep them in context.

Useful signals include.

Activation rate

Weekly active users

Suggestion acceptance rate

Prompt patterns

Generated test coverage

Pull request size

Code review comments

Reopened tickets

Time spent in review

Time spent in flow

Avoid using acceptance rate alone. Accepted code can still be insecure, hard to maintain, or misaligned with architecture.

For teams using React, Node.js, Laravel, Flutter, or data platforms, Mak It Solutions’ React development, Node.js development, and Flutter development pages are useful next-step resources.

Measuring GitHub Copilot ROI and AI Coding Tool Value

Engineering leaders can measure GitHub Copilot ROI by comparing AI-enabled teams against a clear baseline across speed, quality, cost, and developer experience.

The important part is measuring the workflow, not just the tool.

GitHub Copilot ROI.

GitHub Copilot ROI should be measured through.

Developer adoption

Task completion time

Pull request throughput

Test coverage

Code review time

Defect trends

Deployment stability

Developer satisfaction

The 55.8% faster completion result from the GitHub/MIT controlled study is useful evidence, but each enterprise should still run its own pilot. ROI varies by language, codebase maturity, team seniority, architecture, and governance requirements.

Comparing Cursor, Claude Code, GitHub Copilot, and Enterprise AI Coding Tools

Compare AI coding tools on workflow fit, not hype.

Key evaluation areas include.

IDE experience

Repository context

Model quality

Admin controls

Enterprise SSO

Auditability

Data retention settings

IP protections

Secure coding support

Vendor risk posture

Fit with cloud and DevOps stack

Cursor may appeal to AI-native product teams. Claude Code may support complex reasoning workflows. GitHub Copilot often fits Microsoft and GitHub-heavy engineering environments.

The best choice depends on your codebase, compliance requirements, and engineering operating model.

When AI Coding Tools ROI Does Not Show Up

AI coding tool ROI may not appear when the real bottleneck is outside coding.

Common blockers include.

Ambiguous product backlog

Slow security review

Flaky CI/CD

Manual QA delays

Long release approvals

Poor documentation

Architecture debt

Too many review handoffs

In that case, AI is not always failing. The measurement model is incomplete.

Fix the delivery system before scaling licenses across every team.

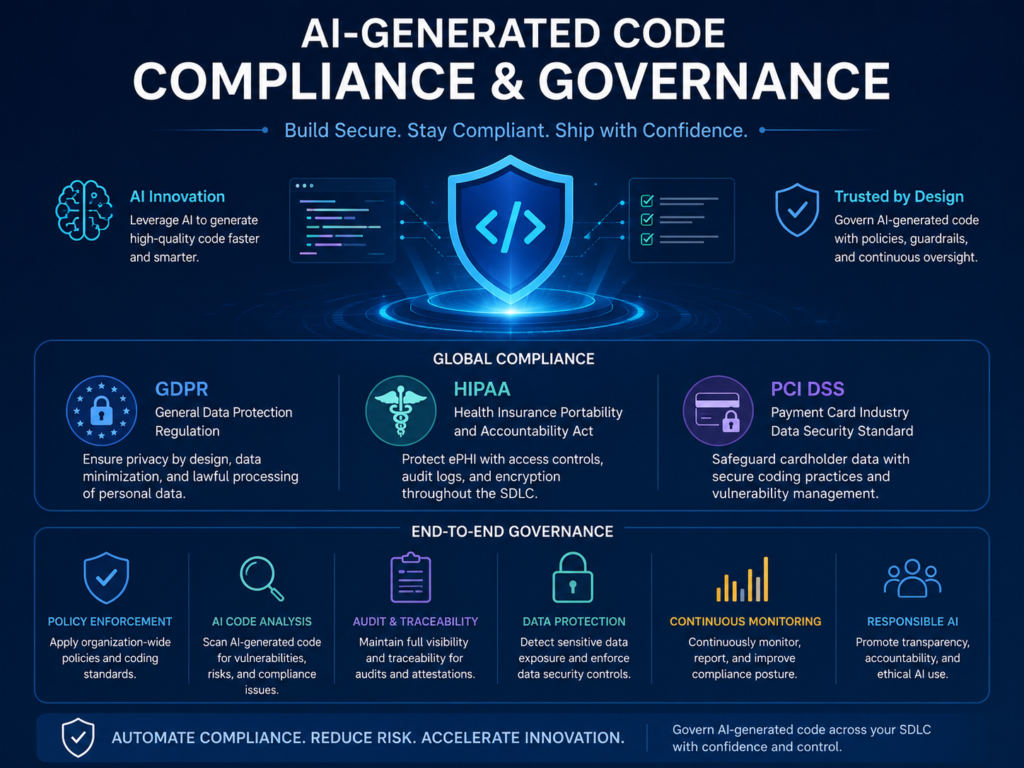

Quality, Security, and Compliance Impact

AI-generated code quality must be measured through review outcomes, defect trends, vulnerability scans, and maintainability signals.

A simple policy works well:

AI may draft, but humans own the code.

AI-Generated Code Quality Metrics

Track quality with the same seriousness you apply to human-written code.

Useful metrics include.

Code review comments

Defect density

Static analysis findings

Duplicate logic

Test pass rate

Maintainability index

Production incidents

Escaped defects

Reopened tickets

AI-generated code should follow the same engineering standards as the rest of the codebase. No exceptions.

Security Risks CTOs Should Watch

Security teams should assess whether prompts, repository context, secrets, customer data, or proprietary logic can leave controlled environments.

Key risks include.

IP exposure

Open-source license risk

Vulnerable code patterns

Unsafe dependencies

Secret leakage

Over-permissioned plugins

Poor logging and auditability

Use SAST, SCA, dependency scanning, secrets detection, and human code review.

For secure software programs, Mak It Solutions’ AI vulnerability detection workflow guide is a relevant internal read.

Compliance Considerations for Regulated Teams

Compliance depends on industry and region.

GDPR governs personal data protection in the EU, while UK organizations follow UK-GDPR and ICO expectations. The European Commission describes EU data protection rules as a framework for protecting personal data and privacy rights.

US healthcare teams must consider HIPAA when protected health information may be involved. HHS explains that the HIPAA Privacy Rule protects medical records and other individually identifiable health information.

Payment companies should align with PCI DSS, which sets technical and operational requirements for protecting payment account data.

For EU teams, the AI Act also matters. The European Commission states that the AI Act entered into force on August 1, 2024, and is designed to support responsible AI development and deployment in the EU.

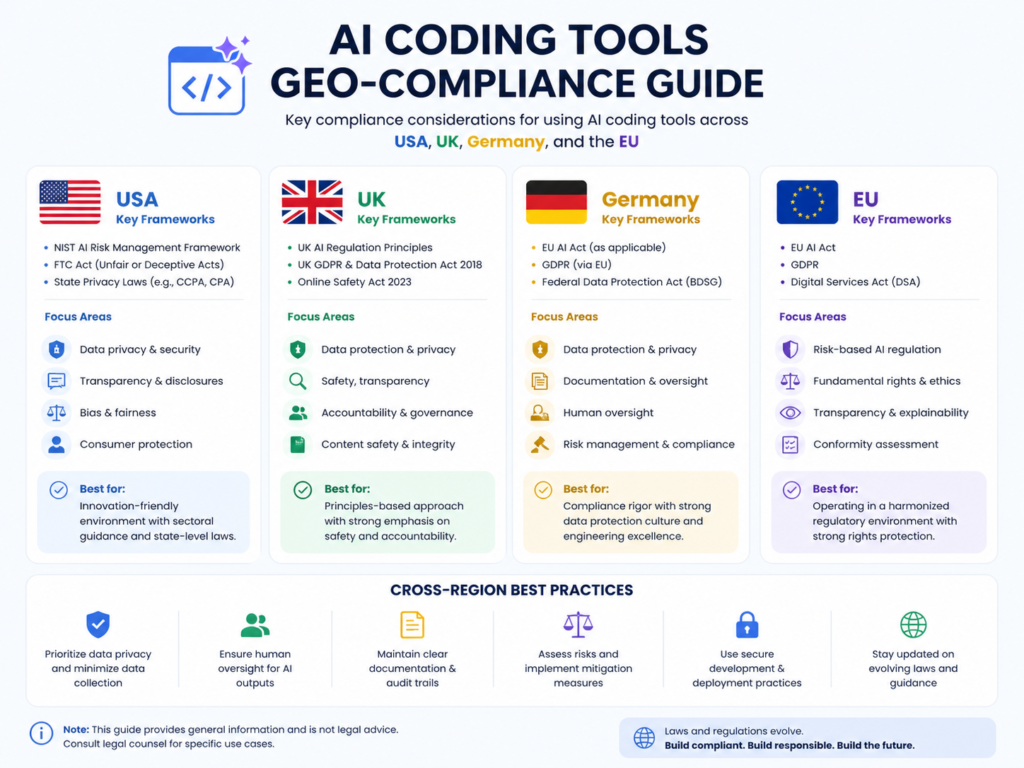

GEO Considerations for USA, UK, Germany, and EU Teams

GEO requirements change how AI pair programming ROI is approved, measured, and scaled.

The same tool may pass quickly in Austin but require extra controls in London, Frankfurt, or Munich.

USA.

US teams in New York, Seattle, Austin, or San Francisco often focus on SOC 2, HIPAA, PCI DSS, FINRA, SEC, vendor risk, and cloud security.

A healthcare SaaS company should restrict AI use around PHI unless contracts, access controls, data boundaries, and logging are clear.

UK.

UK companies in London, Manchester, and Cambridge should assess UK-GDPR, FCA expectations for financial services, NHS governance for healthcare systems, and procurement risk.

AI coding tools should be documented in vendor risk registers and software development policies.

Germany and EU

Germany and EU teams in Berlin, Munich, Frankfurt, Amsterdam, Paris, Dublin, and Stockholm should consider DSGVO/GDPR, BaFin oversight, EU AI Act obligations, NIS2 cybersecurity expectations, data residency, and works council approvals.

For regulated EU teams, AWS, Azure, or GCP region strategy may influence which AI tools are acceptable.

Building the Business Case for AI Coding Tools

A strong business case links AI coding tools to measurable engineering outcomes.

Not hype. Not demos. Evidence.

When to Scale AI Pair Programming

Scale when pilot teams show.

Faster delivery

Stable or improved quality

Positive developer experience

Acceptable compliance risk

Lower rework

Clear adoption patterns

No increase in security findings

Avoid blanket rollout if benefits only appear in isolated coding tasks.

How to Present ROI to Finance, Security, and Executives

Different stakeholders care about different outcomes.

Finance wants cost savings and delivery acceleration.

Security wants controls, audit logs, data boundaries, and secure SDLC evidence.

Executives want faster roadmap delivery without reputational or compliance risk.

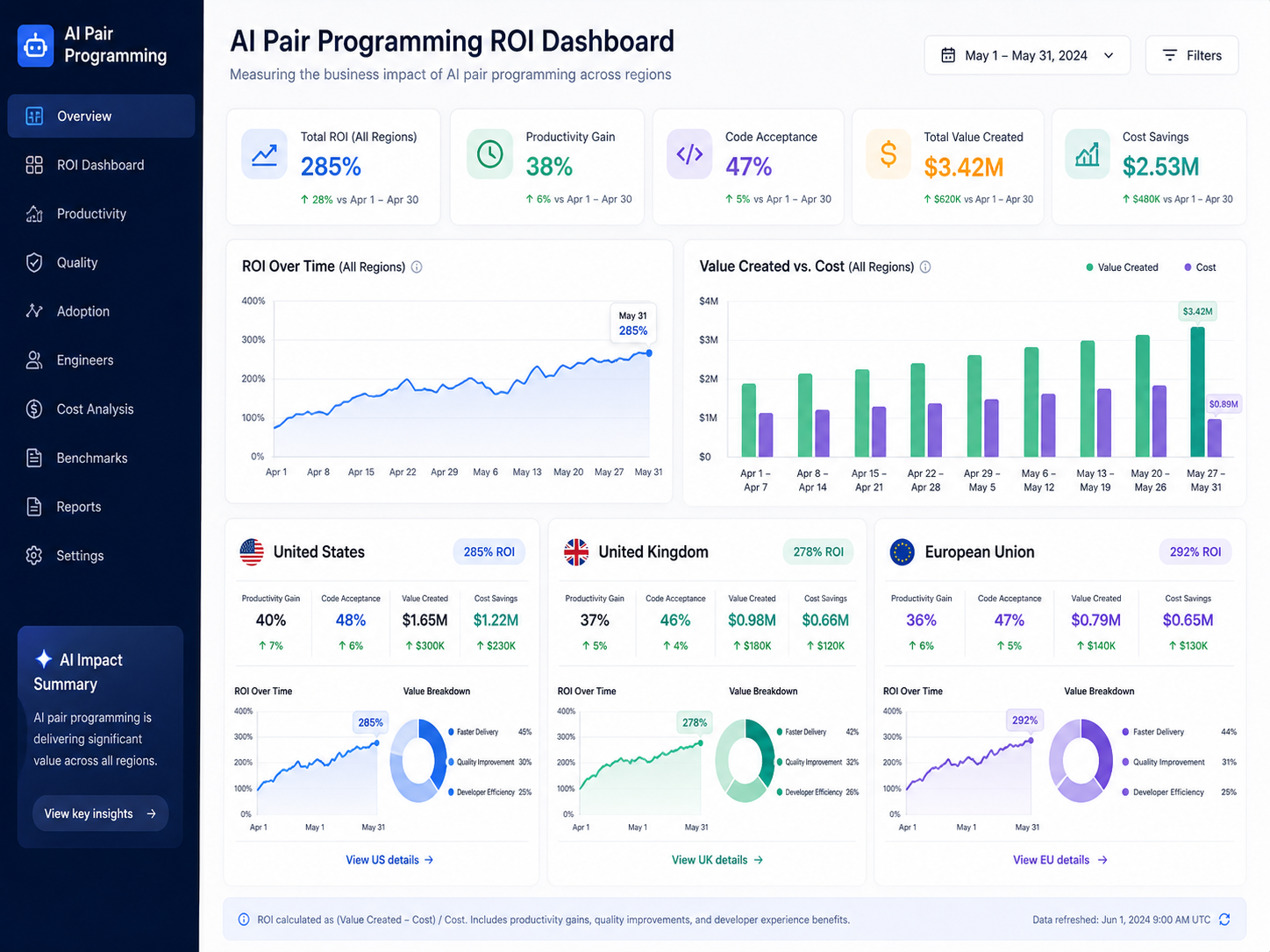

A practical dashboard should show.

Total cost

Adoption rate

Delivery improvement

Quality signals

Security findings

Compliance readiness

Forecasted capacity gain

That gives everyone the same operating view.

Key Takeaways

AI pair programming ROI should include productivity, delivery speed, quality, compliance, and security not just accepted code suggestions.

DORA, SPACE, DevEx, and AI-specific usage metrics give CTOs a balanced measurement framework.

GitHub Copilot, Cursor, Claude Code, and enterprise AI coding tools should be compared by workflow fit, governance, and risk controls.

US, UK, Germany, and EU teams need region-specific compliance review before broad rollout.

ROI is strongest when AI removes real engineering bottlenecks, not when it simply generates more code.

A pilot-first approach gives finance, security, and executives the evidence they need.

Want to know whether AI pair programming ROI is realistic for your engineering team?

Book a scoped AI coding ROI assessment with Mak It Solutions to benchmark delivery metrics, identify compliance risks, and build a practical rollout plan for your SaaS, cloud, web, or mobile app team.

Start with web development services, business intelligence services, or a custom productivity benchmark for your engineering team.( Click Here’s )

FAQs

Q : What is a good ROI benchmark for AI coding assistants?

A : A good benchmark is any measurable gain that survives quality and security review. For many teams, that may mean a 10–30% improvement in cycle time, documentation speed, test creation, or developer capacity after costs are included. Do not compare only raw code output.

Q : How long does it take to measure AI coding tool productivity?

A : Most teams need 60 to 90 days to measure AI coding tool productivity responsibly. The first few weeks show adoption and training patterns, but reliable ROI needs enough completed work to compare pull requests, sprint flow, defects, review time, and delivery outcomes.

Q : Which developer productivity metrics should not be used alone?

A : Lines of code, suggestion acceptance rate, commits, and story points should not be used alone. They can reward activity instead of value. Pair them with DORA, SPACE, DevEx, code quality, escaped defects, and deployment stability.

Q : Can AI pair programming improve code quality as well as speed?

A : Yes, AI pair programming can improve code quality when used for tests, refactoring, documentation, code review preparation, and edge-case discovery. It can also reduce quality when developers accept output without understanding it.

Q : How should regulated companies govern AI-generated code?

A : Regulated companies should define allowed use cases, restricted data types, approved tools, prompt handling rules, logging requirements, and code review standards. They should also involve legal, security, privacy, and compliance teams early.