Confidential Computing for Sensitive Cloud Workloads

Confidential computing is a cloud security approach that uses hardware-based trusted execution environments (TEEs) to keep code and data encrypted and isolated while they are being processed, not just at rest or in transit. For sensitive cloud workloads in highly regulated sectors, it adds a new zero-trust layer that protects memory and runtime from insiders, compromised infrastructure and cross-border risk.

Introduction

AI, advanced analytics and multi-cloud architectures mean more sensitive data is processed in public clouds across the United States, United Kingdom, Germany and the wider European Union than ever before. At the same time, regulations like GDPR/DSGVO, UK-GDPR, HIPAA and PCI DSS keep tightening expectations around confidentiality, integrity and sovereignty of that data.

Traditional cloud security has focused on encrypting data at rest and in transit, backed by strong identity and network controls. But data is also exposed while it is in use, when applications decrypt it in memory for processing, training models or running analytics. Around 45% of data breaches now involve cloud environments, and the average cost of a cloud breach is over $5 million.

Confidential computing aims to close this gap. In this guide, you’ll see what confidential computing is, how TEEs, enclaves and confidential virtual machines work, which workloads benefit most, how it supports GDPR/HIPAA/PCI DSS, and how to design a practical pilot across AWS, Azure, Google Cloud and European providers.

What Is Confidential Computing in Cloud Security?

Confidential computing in cloud security means running code and data inside hardware-based trusted execution environments (TEEs) where they stay encrypted and isolated in memory during processing. In other words, it brings “data-in-use encryption” and runtime isolation into your cloud security stack, alongside encryption at rest and in transit.

Simple Definition of Confidential Computing and Data-in-Use Protection

In simple terms, when someone asks “what is confidential computing in cloud security?”, the answer is: it’s a way to protect data while applications are using it, not only when it’s stored or moving across networks. Confidential computing uses secure enclaves and TEEs so your most sensitive data stays encrypted in memory and is only visible in plaintext inside the protected CPU boundary, hidden even from cloud operators, hypervisors or privileged insiders.

That’s why you’ll often see it described as “confidential computing for sensitive data” or “hardware-based isolation in cloud environments”: it protects data in use, which used to be the weakest link.

How Confidential Computing Fits Zero-Trust Cloud Security Architectures

Zero-trust cloud security architecture assumes that networks, identities, devices and even infrastructure layers can be compromised. Confidential computing extends that mindset into memory and runtime by treating the host OS, hypervisor and cloud admin plane as untrusted.

In practice, TEEs provide a hardware root of trust and cryptographic attestation so workloads can prove they’re running in genuine secure enclaves before keys are released. This complements your identity, network micro-segmentation, API security and storage encryption controls, turning “zero trust” from a network principle into an end-to-end execution model.

As adoption of zero trust grows (over 60% of organisations report having at least partially implemented a zero-trust strategy), confidential computing is becoming the natural next layer for the most critical workloads.

Confidential Computing vs Traditional Cloud Encryption

Traditional cloud encryption focuses on:

At rest: disk, object storage and database encryption

In transit: TLS for APIs, VPNs and service-to-service traffic

Those controls are essential, but they don’t protect against:

Malicious or compromised cloud admins

Vulnerable or exploited hypervisors

Memory-scraping malware or side-channel attacks

Rogue insiders with privileged OS access

Confidential computing tackles this gap by isolating sensitive workloads in TEEs where the CPU enforces boundaries and memory is encrypted. Even if the host OS, hypervisor or management plane is compromised, attackers cannot directly read plaintext data or code from memory. The result is a more robust way to secure sensitive cloud workloads and reduce blast radius when other controls fail.

How Confidential Computing Works

At its core, confidential computing relies on CPU-level isolation, in the form of trusted execution environments, to run code and data inside enclaves or confidential virtual machines that are cryptographically attested. TEEs prove to your apps and key management systems that they’re genuine before keys are released.
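The attest-then-release flow described above can be sketched in a few lines of Python. This is a simulation under stated assumptions, not any vendor's API: `make_evidence`, `verify_evidence` and `release_key` are hypothetical names, and an HMAC with a shared key stands in for the hardware-signed reports and vendor verification services a real TEE would rely on.

```python
import hashlib
import hmac
import secrets

# Assumption: a shared HMAC key stands in for the hardware vendor's signing key.
HARDWARE_KEY = secrets.token_bytes(32)
# Assumption: the key service knows the measurement of the approved workload.
EXPECTED_MEASUREMENT = hashlib.sha256(b"approved-workload-image-v1").hexdigest()


def make_evidence(workload_image: bytes, nonce: bytes, signing_key: bytes) -> dict:
    """Simulate a TEE producing signed evidence about the code it loaded."""
    measurement = hashlib.sha256(workload_image).hexdigest()
    payload = measurement.encode() + nonce
    signature = hmac.new(signing_key, payload, hashlib.sha256).hexdigest()
    return {"measurement": measurement, "nonce": nonce, "signature": signature}


def verify_evidence(evidence: dict, nonce: bytes) -> bool:
    """Check the signature, the freshness nonce and the expected measurement."""
    payload = evidence["measurement"].encode() + evidence["nonce"]
    expected_sig = hmac.new(HARDWARE_KEY, payload, hashlib.sha256).hexdigest()
    return (
        hmac.compare_digest(expected_sig, evidence["signature"])
        and hmac.compare_digest(evidence["nonce"], nonce)
        and hmac.compare_digest(evidence["measurement"], EXPECTED_MEASUREMENT)
    )


def release_key(evidence: dict, nonce: bytes, data_key: bytes) -> bytes:
    """Hand out the data key only when attestation succeeds."""
    if not verify_evidence(evidence, nonce):
        raise PermissionError("attestation failed: key not released")
    return data_key
```

The key service generates a fresh nonce per request so that old evidence cannot be replayed, and it refuses to release the key if the measurement, signature or nonce does not match.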

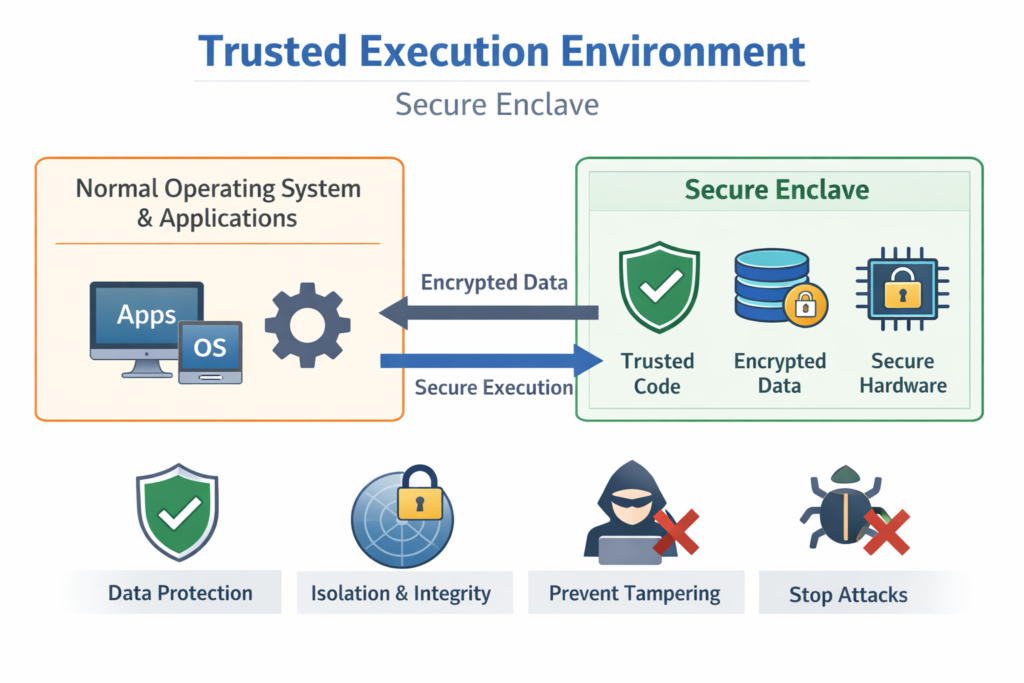

Trusted Execution Environment in the Cloud (TEEs and Hardware Isolation)

A trusted execution environment is a protected part of a processor that ensures code and data loaded inside it are:

Confidential (memory is encrypted and isolated)

Integrity-protected (tampering is detectable)

Measurable and attestable (remote parties can verify what’s running)

Cloud TEEs build on hardware capabilities like Intel SGX, AMD SEV-SNP or Arm TrustZone, combined with vendor-specific attestation services. These secure enclaves and TEEs underpin a common cloud pattern: your sensitive microservices or analytics jobs run inside enclaves, and keys are only released to them once attestation checks pass.
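To illustrate what "measurable" means, here is a small Python sketch of a hash-chain measurement, similar in spirit to how measured boot extends a register with each loaded component. The function names and the three example components are hypothetical, and real TEEs define their own measurement formats.

```python
import hashlib


def extend(current: bytes, component: bytes) -> bytes:
    """Fold one component into the running measurement."""
    component_digest = hashlib.sha384(component).digest()
    return hashlib.sha384(current + component_digest).digest()


def measure(components: list[bytes]) -> str:
    """Return a hex measurement covering all components, in load order."""
    value = bytes(48)  # start from an all-zero value the size of a SHA-384 digest
    for component in components:
        value = extend(value, component)
    return value.hex()


# Assumption: three illustrative components; any change or reordering
# produces a different measurement.
reference = measure([b"firmware", b"kernel", b"app"])
```

A verifier compares the reported measurement with a reference value computed from the approved build, so a changed or reordered component is detectable.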

Confidential Virtual Machines from Major Cloud Providers

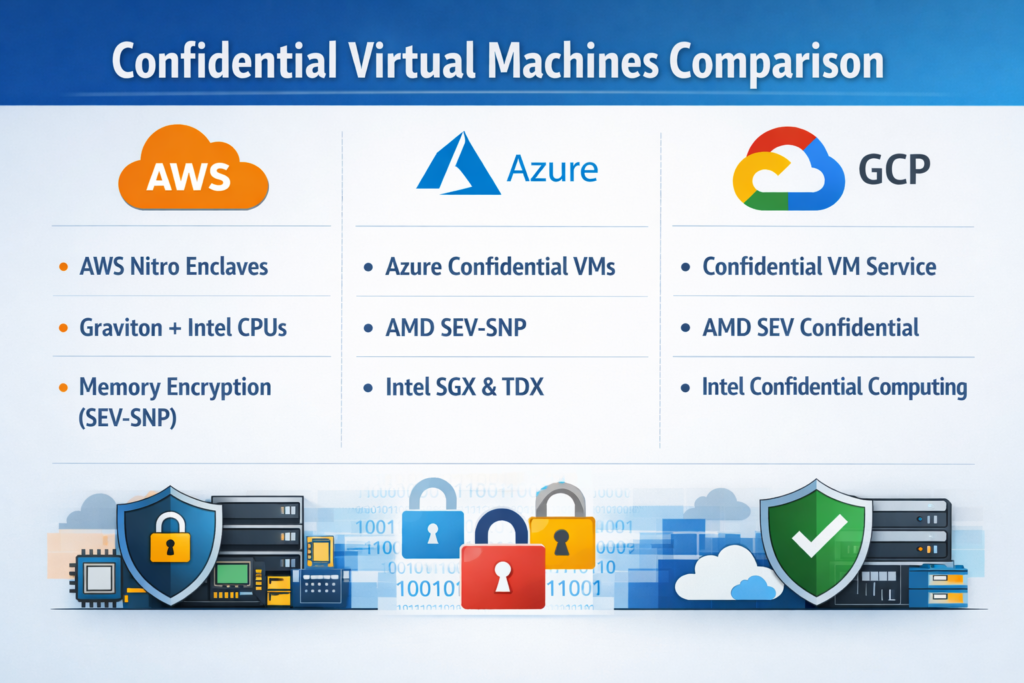

Major clouds now expose TEEs through “confidential virtual machines” and related enclave services:

Microsoft Azure offers Azure Confidential VMs, which encrypt guest memory and isolate it from the host and other tenants.

Amazon Web Services (AWS) provides AWS Nitro Enclaves, isolated compute environments attached to EC2 instances for handling highly sensitive data and keys.

Google Cloud offers Confidential VMs and Confidential GKE Nodes, which use memory encryption and hardware attestation.

These confidential virtual machines let you lift-and-shift existing apps with minimal changes, then progressively introduce more fine-grained enclave patterns where needed. For architects comparing confidential computing solutions (AWS / Azure / GCP), this is often the first decision point: confidential VMs vs application-level enclaves.

Secure Enclaves for AI, Analytics and Multi-Party Computation

Beyond infrastructure hardening, enclaves enable secure multi-party analytics in the cloud. Multiple organisations can contribute encrypted datasets into a joint computation without exposing raw data to each other or the platform operator. The TEEs enforce isolation and policies so only aggregated insights or model updates leave.
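As a rough illustration of the "only aggregates leave" policy, the sketch below shows the kind of function that might run inside an enclave. It is plain Python with no real encryption or attestation, and both `joint_average` and the `MIN_COHORT` threshold are assumptions made for this example.

```python
from statistics import mean

# Assumption: an illustrative minimum number of records before any
# aggregate may leave the enclave.
MIN_COHORT = 5


def joint_average(contributions: dict[str, list[float]]) -> dict:
    """Combine values from several parties and release only an aggregate."""
    if len(contributions) < 2:
        raise ValueError("need at least two contributing parties")
    all_values = [v for values in contributions.values() for v in values]
    if len(all_values) < MIN_COHORT:
        raise ValueError("cohort too small to release an aggregate")
    # Raw per-party values never appear in the returned result.
    return {
        "parties": len(contributions),
        "count": len(all_values),
        "mean": round(mean(all_values), 2),
    }
```

In a real deployment the inputs would arrive encrypted and be decrypted only inside the attested enclave; the point here is simply that the output contains counts and an average, never the raw records.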

For AI workloads, this unlocks secure AI model training on sensitive data, for example training on US healthcare data, UK banking transactions or German industrial telemetry without those datasets ever being visible in plaintext outside the enclave. In practice, you’ll see patterns like:

Secure AI model training on sensitive US data with confidential computing

Federated analytics across hospitals or banks in different regions

Encrypted feature engineering pipelines for regulated citizen data

Which Sensitive Cloud Workloads Benefit Most from Confidential Computing?

The highest-value candidates are regulated data workloads: healthcare, financial services, public sector and AI/analytics pipelines processing sensitive citizen or industrial data. These are the workloads where regulators, boards and customers are most concerned about “who can really see this data?”

Regulated Financial and Healthcare Workloads (HIPAA, PCI DSS, NHS, BaFin)

In healthcare, confidential computing for HIPAA-compliant workloads can protect EHR/PHI systems, claims analytics and clinical research algorithms handling electronic protected health information (ePHI). HIPAA’s Security Rule requires robust administrative, physical and technical safeguards for ePHI; TEEs help strengthen the technical layer by ensuring data stays protected even during processing.

In financial services, confidential computing for UK financial services cloud workloads can harden Open Banking APIs, real-time fraud analytics and risk models against insider threats and cross-tenant leakage. In Germany and the EU, supervisors like BaFin increasingly expect banks to demonstrate strong controls over outsourced processing and cloud operations. (Mak it Solutions)

Payment processors bound by PCI DSS can also use data-in-use encryption to go beyond traditional cardholder data protections, as PCI DSS v4.0 tightens expectations around encryption, monitoring and continuous security.

AI, Analytics and Secure Citizen Data Processing in the US, UK and EU

For governments and SaaS providers processing citizen data in New York, London or Berlin, confidential computing enables secure analytics on sensitive citizen data in cloud environments. Data controllers can prove that personal data is only processed inside attested TEEs, with strict access control and auditable key paths.

This is especially valuable as generative AI usage rises: recent research shows data policy violations involving AI tools more than doubled year-on-year, with over half involving regulated personal or financial data. Confidential computing, combined with strong DLP controls, helps contain that risk by strictly limiting where decrypted prompts and training data can be processed.

Industrial IP, OT and Industry 4.0 Workloads in Germany and the EU

In German Industry 4.0 workloads, manufacturers increasingly stream operational technology (OT) telemetry into cloud platforms to power digital twins, predictive maintenance and advanced quality control. Protecting that industrial IP is a strategic priority, especially for export-heavy sectors.

Confidential computing can help protect industrial IP in German cloud environments by processing sensor data, CAD files and simulation outputs only inside attested enclaves hosted in regions like Frankfurt. Similar patterns apply to automotive and aerospace workloads elsewhere in the EU, where digital twins and AI inspection systems become core competitive assets rather than “just another workload”.

Compliance, Risk and GEO Requirements for Confidential Computing

Confidential computing helps demonstrate to regulators that sensitive data stays protected even in memory and during processing, supporting GDPR/DSGVO, UK-GDPR, HIPAA, PCI DSS and SOC 2 expectations. It doesn’t replace these frameworks, but it can be a powerful way to reduce residual risk and strengthen your story to auditors.

How Confidential Computing Supports GDPR/DSGVO, UK-GDPR and EU Data Residency

GDPR/DSGVO and UK-GDPR require that personal data be processed lawfully, fairly and securely, with appropriate technical and organisational measures across storage, transmission and processing.

Confidential computing supports those principles by:

Ensuring personal data is protected from unauthorised access during processing, not only at rest

Providing attestation evidence that processing happens in specific regions and TEEs

Enabling controllers to demonstrate robust data-in-use security for high-risk processing and cross-border transfers

For EU data residency, combining TEEs with strict region pinning, such as Frankfurt or Dublin, helps show that decrypted personal data never leaves agreed jurisdictions, even when managed by global cloud providers.
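A region-pinning rule of this kind can be expressed as a small policy check. The sketch below is illustrative only: `AttestationClaims`, the region labels and `may_process_personal_data` are hypothetical, and it assumes the region claim is supplied by a trusted source such as provider metadata or an attestation broker rather than by the workload itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AttestationClaims:
    workload: str
    region: str         # assumption: set by a trusted broker, not the workload
    tee_verified: bool  # assumption: result of a prior attestation check


# Assumption: placeholder labels, not real provider region identifiers.
ALLOWED_REGIONS = frozenset({"frankfurt", "dublin"})


def may_process_personal_data(
    claims: AttestationClaims,
    allowed_regions: frozenset = ALLOWED_REGIONS,
) -> bool:
    """Allow processing only in an attested TEE inside an agreed region."""
    return claims.tee_verified and claims.region in allowed_regions
```

A key broker could call a check like this before releasing decryption keys, so personal data is only decrypted where both conditions hold.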

Meeting HIPAA, PCI DSS and SOC 2 Expectations with Data-in-Use Encryption

For US healthcare, HIPAA’s Security Rule calls for safeguards that protect the confidentiality, integrity and availability of ePHI, including encryption where appropriate. (HHS.gov) Data-in-use encryption and TEEs give covered entities a stronger story when explaining how they protect PHI in cloud-hosted clinical systems and analytics pipelines.

PCI DSS v4.0 raises the bar on payment data security, with greater emphasis on continuous risk management, encryption and monitoring. Confidential computing can reduce scope for certain components by isolating cryptographic operations, cardholder data processing and tokenisation inside TEEs.

For SOC 2 reports, TEEs demonstrate advanced controls in the Security and Confidentiality categories of the Trust Services Criteria, helping cloud and SaaS providers differentiate on how they protect customer data in use.

Proving Controls to Auditors and Regulators in the US, UK and Germany

Attestation, hardware roots of trust and key management integrations give you concrete artefacts to show auditors in the United States, the UK and Germany. Typical evidence includes:

Attestation reports proving workloads ran in specific TEEs and regions

Key release policies that require successful attestation before decryption

Logs tying administrative actions to security controls over confidential workloads

Supervisors such as NHS commissioners or financial regulators expect not just “we encrypt” but how you prevent privileged misuse. Transparent confidential computing architectures can turn those discussions from theoretical to demonstrable. (Mak it Solutions)

Designing a Confidential Computing Architecture for Multi-Cloud

A robust confidential computing architecture balances TEEs, key management and attestation across multiple cloud providers and regions without hard lock-in. Many Mak It Solutions clients start from a multi-cloud cost or resilience strategy (as explored in our recent multi-cloud cost optimisation guide) and then layer confidential computing on top. (Mak it Solutions)

Comparing Azure, AWS, Google Cloud and European Providers

When you compare confidential computing solutions (AWS / Azure / GCP), focus on:

VM-level vs enclave-level isolation

Attestation APIs and ecosystem support

Integration with KMS/HSM and key brokers

Regional availability (US, UK, EU regions)

Alongside the big three, providers like IBM Cloud, Red Hat OpenShift-based platforms and the European provider OVHcloud increasingly position confidential computing as part of their sovereignty and data residency story.

Your architecture should assume you may need to move a regulated workload over time, say from a US East region to Dublin for EU-based processing, or from a US-hosted enclave to a UK NHS-aligned region, without rewriting the entire stack.

Key Design Patterns

Common design patterns include:

Centralised attestation broker: a service that validates TEE measurements from different clouds and issues short-lived tokens your apps can trust

Integrated KMS/HSM: keys live in cloud-native KMS or dedicated HSMs; they are only released to workloads that present valid attestation evidence

Workload tiering: classifying “secure sensitive cloud workloads” that must always run in TEEs vs general workloads that can stay on standard VMs

DevSecOps integration: CI/CD pipelines that automatically verify attestation policies and region placement for confidential workloads
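The centralised attestation broker pattern can be sketched as a service that issues short-lived signed tokens once evidence has been verified. The Python below is a minimal illustration, not a production design: `BROKER_KEY`, `TOKEN_TTL_SECONDS`, `issue_token` and `validate_token` are hypothetical, the evidence check is reduced to a boolean, and a real broker would more likely use asymmetric signatures and a standard token format.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

BROKER_KEY = b"demo-only-broker-key"  # assumption: demo secret, never hard-code
TOKEN_TTL_SECONDS = 300               # assumption: five-minute token lifetime


def issue_token(workload: str, cloud: str, evidence_verified: bool,
                now: Optional[float] = None) -> str:
    """Issue a short-lived token, but only for verified attestation evidence."""
    if not evidence_verified:
        raise PermissionError("evidence not verified: no token issued")
    now = time.time() if now is None else now
    claims = {"workload": workload, "cloud": cloud, "exp": now + TOKEN_TTL_SECONDS}
    body = json.dumps(claims, sort_keys=True).encode()
    sig = hmac.new(BROKER_KEY, body, hashlib.sha256).digest()
    return (base64.urlsafe_b64encode(body).decode() + "."
            + base64.urlsafe_b64encode(sig).decode())


def validate_token(token: str, now: Optional[float] = None) -> Optional[dict]:
    """Return the claims if the token is authentic and unexpired, else None."""
    now = time.time() if now is None else now
    try:
        body_b64, sig_b64 = token.split(".")
        body = base64.urlsafe_b64decode(body_b64)
        sig = base64.urlsafe_b64decode(sig_b64)
    except ValueError:
        return None  # malformed token
    expected = hmac.new(BROKER_KEY, body, hashlib.sha256).digest()
    if not hmac.compare_digest(sig, expected):
        return None  # signature does not match the body
    claims = json.loads(body)
    return claims if claims["exp"] > now else None
```

Because applications trust the broker's token rather than each cloud's native evidence format, the same key-release logic can be reused as workloads move between providers.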

Mak It Solutions often couples these patterns with data-platform and business-intelligence layers (see our Business Intelligence Services) so encrypted analytics and dashboards become standard, not special-case projects. (Mak it Solutions)

Regional Deployment Options

Latency, sovereignty and regulator expectations all drive region choices:

US regions (e.g., East/West) for HIPAA and PCI DSS workloads close to American users and hospitals

UK regions (near London) for NHS and UK-GDPR sensitive workloads

Frankfurt for German banking and industry 4.0, where regulators emphasise DSGVO and local oversight

Dublin as a popular EU hub balancing latency, talent and regulatory clarity

Confidential computing must align with your wider data residency and localisation strategy, not replace it. If you’re tackling GCC or country-specific localisation as well, Mak It Solutions’ work on data localisation and sovereign cloud architectures can help you build a coherent global picture. (Mak it Solutions)

How to Start Piloting Confidential Computing for High-Risk Workloads

The most effective path is not “encrypt everything in TEEs” but a focused pilot on one or two high-risk workloads where you can measure both risk reduction and business value.

Selecting High-Risk Workloads and Quick-Win Use Cases

Start by short-listing workloads that are:

Highly regulated: in HIPAA, PCI DSS, GDPR/DSGVO, UK-GDPR or SOC 2 scope

Business-critical: material financial or operational impact if compromised

Under audit pressure: recurring findings or tough regulator questions

Examples: an AI-powered claims analytics pipeline using US health data, an Open Banking transaction-scoring engine for UK banks, or an industrial telemetry analytics platform for a German manufacturer. Using a small checklist like this makes it easier to explain why confidential computing is being piloted here first.
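The checklist above can be turned into a simple scoring helper for a first shortlist. This is a sketch only: the `Workload` class, the equal weighting of the three criteria and the `min_score` default are assumptions for illustration, not a recommended scoring model.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    highly_regulated: bool
    business_critical: bool
    under_audit_pressure: bool

    def score(self) -> int:
        """Count how many of the three checklist criteria apply."""
        return sum([self.highly_regulated, self.business_critical,
                    self.under_audit_pressure])


def shortlist(workloads: list[Workload], min_score: int = 2) -> list[str]:
    """Return names of candidate workloads, highest score first."""
    picked = [w for w in workloads if w.score() >= min_score]
    return [w.name for w in sorted(picked, key=lambda w: w.score(), reverse=True)]
```

Writing the criteria down this way also gives you a record of why a given workload was chosen for the pilot.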

Building the Business Case and Stakeholder Alignment

Your business case should blend:

Risk reduction: less exposure to insider threats and advanced attackers

Compliance leverage: stronger story for auditors, fewer repeat findings

Innovation unlocks: being able to run AI/analytics on data you previously kept off-limits

For CISOs and DPOs, emphasise reduced residual risk and better control evidence. For data and product teams, focus on securely unlocking new datasets or data-sharing models. Mak It Solutions often helps clients frame this as “turning previously-dark data into value, without giving up control”.

Pilot Plan, Common Pitfalls and Next Steps

A simple pilot plan might look like:

Design: choose workload, region, cloud provider and TEE pattern (confidential VMs vs enclaves).

Build: integrate attestation, KMS/HSM, logging and basic monitoring.

Test: validate performance overhead, resilience and failover patterns.

Evidence: generate attestation logs and control mappings ready for auditors.

Scale: decide how to extend patterns to additional workloads and regions.
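For the Test step, performance overhead can be quantified by comparing the same benchmark on a standard VM and on the confidential variant. The helper below is a hedged sketch: `overhead_percent` is a hypothetical name, and using the median of repeated latency samples is one reasonable choice among several.

```python
from statistics import median


def overhead_percent(baseline_ms: list[float], confidential_ms: list[float]) -> float:
    """Relative overhead of the confidential run versus the baseline, in percent."""
    base = median(baseline_ms)
    conf = median(confidential_ms)
    if base <= 0:
        raise ValueError("baseline latency must be positive")
    # overhead = (confidential - baseline) / baseline * 100
    return round((conf - base) / base * 100, 1)
```

Feed it latency samples collected from your own pilot, and record the result alongside the attestation evidence so the overhead and the risk reduction can be reviewed together.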

Common pitfalls include over-estimating performance penalties, under-investing in attestation UX, and accidentally introducing new lock-in through proprietary control planes. Community work from the Confidential Computing Consortium aims to standardise concepts and APIs so portable patterns become easier over time. (Confidential Computing Consortium)

If you’d like expert guidance designing a pilot or mapping confidential computing to your current web, mobile or SaaS landscape, Mak It Solutions can help you connect cloud architecture, security and product teams from early design through to a production-ready rollout. (Mak it Solutions)

Key Takeaways

Confidential computing brings data-in-use protection into your cloud stack, hardening sensitive workloads against insiders and infrastructure compromise.

TEEs, enclaves and confidential virtual machines from major clouds let you protect workloads across AWS, Azure, GCP and European providers without fully rewriting applications.

Regulated workloads in healthcare, financial services, public sector and German/EU industry 4.0 gain the biggest risk and compliance benefits.

GDPR/DSGVO, UK-GDPR, HIPAA, PCI DSS and SOC 2 don’t mandate confidential computing yet, but TEEs can significantly strengthen your technical control evidence.

Multi-cloud architectures need a consistent approach to attestation, key management and region pinning (US, UK, Frankfurt, Dublin and beyond).

A focused, metrics-driven pilot on one or two high-risk workloads is the best way to prove value and build internal momentum.

If you’re evaluating confidential computing for your sensitive cloud workloads, you don’t have to design the architecture and pilot alone. Mak It Solutions already helps organisations in the US, UK, Germany and across Europe balance security, compliance and performance in complex multi-cloud estates.

Share one or two high-risk workloads with our team, and we’ll help you sketch a practical confidential computing pilot, mapping TEEs, regions, compliance requirements and business outcomes. The next step is simple: book a short consultation and we’ll co-create a roadmap you can take to your CISO, DPO and engineering leads with confidence.

FAQs

Q : Does confidential computing replace or complement my existing cloud encryption controls?

A : Confidential computing complements, not replaces, your current encryption at rest and in transit. Disk/database encryption and TLS are still essential for protecting stored and in-flight data. Confidential computing adds a new layer by encrypting and isolating data in use within TEEs, so even if the OS, hypervisor or admin plane is compromised, attackers can’t read plaintext data in memory. The most mature organisations combine all three layers with strong identity, network and logging controls.

Q : Is confidential computing required to be GDPR or HIPAA compliant, or just “nice to have”?

A : Today, GDPR/DSGVO, UK-GDPR and HIPAA do not explicitly require confidential computing; they require appropriate technical and organisational measures, including encryption and access controls where risk warrants it. For high-risk workloads, confidential computing can be a powerful way to demonstrate state-of-the-art protection and satisfy regulators that you have gone beyond minimum expectations, especially where insider threat or cross-border processing is a concern.

Q : What performance impact should I expect when moving to confidential virtual machines or enclaves?

A : Performance overhead varies by provider, workload and TEE technology, but many real-world workloads see single-digit percentage overheads, sometimes lower for IO-bound use cases. CPU-intensive cryptography or AI training may see higher costs, which is why pilots should include clear performance baselines and tuning. In most cases, organisations accept modest overhead in exchange for substantial risk reduction and compliance benefits, especially for narrow, high-value workloads.

Q : How do confidential computing solutions differ between AWS, Azure and Google Cloud in real projects?

A : AWS, Azure and Google Cloud all offer confidential virtual machines, but they differ in underlying hardware, attestation flows, supported OS images and integration with KMS/HSM. In real projects, teams typically choose based on existing cloud footprint, regulatory region support, ecosystem tooling and how cleanly TEEs integrate with their DevSecOps stack. Multi-cloud programmes often standardise on portable attestation and key-broker patterns so workloads can move between clouds with minimal rework.

Q : Can I run AI model training and inferencing on encrypted data using confidential computing in multi-cloud environments?

A : Yes, this is one of the strongest use cases. Confidential computing lets you run AI model training and inferencing inside TEEs where data remains encrypted in memory and isolated from infrastructure operators. Combined with techniques like secure multi-party computation or federated learning, you can train models on US, UK and EU citizen data across multiple clouds without exposing raw datasets. Architecturally, you’ll still need shared policies for attestation, key management and audit logging across providers.
