AI Testing Tools vs Traditional QA Guide

If your team is deciding between AI testing tools vs traditional QA, the real question is not which side is “better.” It is which model helps you ship faster without losing control, compliance, or release confidence.

In 2026, most teams do not need an all-or-nothing answer. The strongest setup for many SaaS companies and regulated enterprises is a hybrid one: keep proven frameworks like Playwright, Selenium, or Cypress for critical paths, then add AI testing where maintenance, visual validation, and test creation are slowing the team down.

That balance matters even more for teams across the US, UK, and EU, where release pressure is high but auditability, privacy, and operational clarity still matter.

What changes when comparing AI testing tools vs traditional QA in 2026?

A few years ago, this debate was mostly for QA leads and test engineers. Now it affects product leaders, engineering managers, and compliance teams too.

AI testing platforms are gaining attention because they promise faster test creation, lower maintenance, and better coverage across fast-changing applications. Traditional QA frameworks still matter because they offer precision, transparency, and full engineering control.

That is why the comparison has shifted. It is no longer just about automation. It is about operating model, cost of upkeep, and how well your quality process scales as the product changes.

The short answer

For most companies, AI testing tools vs traditional QA is really a choice between speed and control.

Traditional QA is usually the stronger option when you need custom logic, code review, and predictable automation behavior. AI testing tools are often the better fit when your biggest pain is brittle tests, slow regression cycles, or limited QA bandwidth.

The best-fit model for 2026 is often hybrid: keep your coded foundation for critical workflows, then use AI where it removes repetitive maintenance and speeds up release readiness.

What counts as traditional QA?

Traditional QA usually includes script-first automation and structured manual testing practices.

That often means teams are working with tools and practices such as:

Playwright for modern end-to-end browser testing

Selenium for broad enterprise and legacy browser automation

Cypress for developer-friendly end-to-end and component testing

Manual exploratory testing for edge cases, UX issues, and release validation

CI/CD pipelines that run coded suites on every build or release candidate

This model gives teams deep control. Tests are written, reviewed, versioned, and maintained in code. For organizations with strong QA engineering capability, that is still a major advantage.

What counts as AI testing tools?

AI testing tools usually combine automation with features designed to reduce setup and maintenance effort.

Common capabilities include:

Natural-language or prompt-based test creation

Recorded flows with AI-assisted refinement

Self-healing locators

Visual regression analysis

AI-generated summaries of failures and release impact

Low-code or no-code collaboration for non-SDET contributors

Platforms in this category often position themselves around faster authoring, easier maintenance, and broader test participation across QA, product, and engineering teams.

That does not mean they remove the need for QA expertise. They simply change where human effort goes.

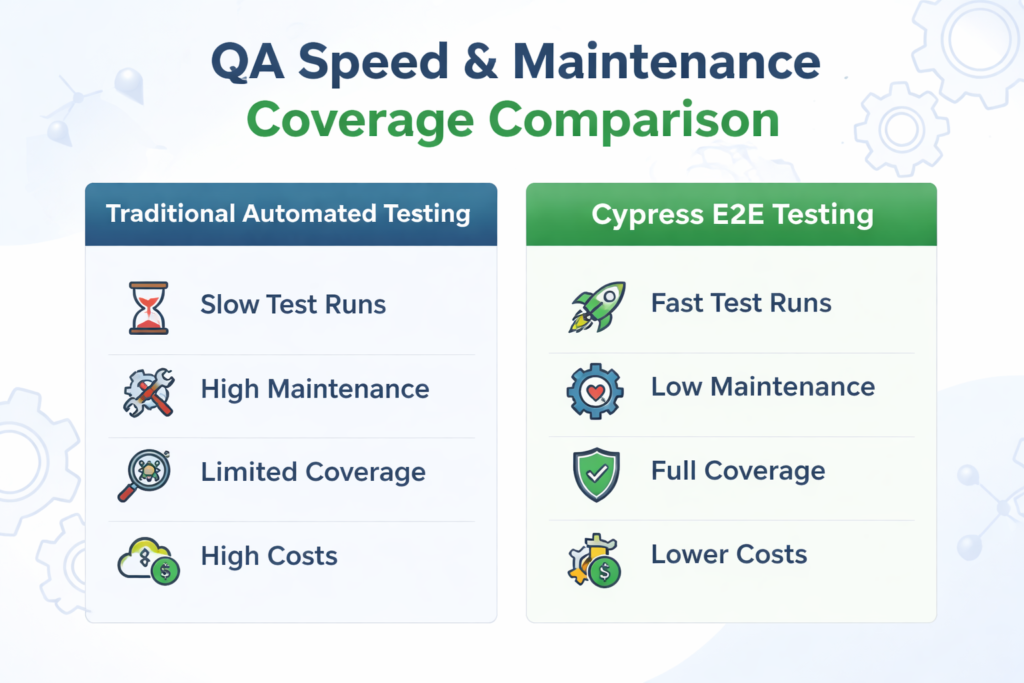

Core differences in speed, maintenance, and coverage

When teams compare AI testing tools vs traditional QA, three areas usually matter most: how quickly tests are created, how hard they are to maintain, and how much confidence they provide before release.

Test creation speed

Traditional QA is usually slower at the start because every test is written and reviewed in code.

That is not always a bad thing. Coded automation gives you exact logic, stronger review processes, and clearer ownership. But it also means you depend more heavily on SDETs, automation engineers, or developers with testing experience.

AI testing tools try to remove that bottleneck. In many cases, they let teams generate a starting point from plain language, recorded behavior, or reusable workflows. That can reduce the time needed to build initial regression coverage.

For fast-moving SaaS teams, that speed can be a real advantage.

Test maintenance effort

This is where AI platforms often make their strongest case.

Traditional test suites can become expensive when the UI changes frequently. Even with modern locator strategies and stable frameworks, coded suites still need updates when selectors shift, flows change, or environments behave differently.

AI testing tools aim to reduce that burden through self-healing steps, smarter locator handling, and automated failure analysis.

In practice, that can mean:

Less time spent fixing brittle selectors

Fewer blocked regression cycles

Faster review of failures

Better stability for visual and UI-heavy workflows

If your team spends more time repairing tests than learning from them, AI-assisted maintenance becomes very attractive.
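To make the idea of self-healing concrete, here is a minimal sketch of one common ingredient: trying fallback selectors in priority order and recording when the primary one failed. It is an illustration only, not how any particular vendor implements healing. The `Page` dictionary, the `find_with_fallback` helper, and the selectors are all hypothetical stand-ins for a real browser page.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical stand-in for a rendered page: maps a selector string to an element label.
Page = dict[str, str]


@dataclass
class LocatorResult:
    element: str
    selector_used: str
    healed: bool  # True when the primary selector failed and a fallback matched


def find_with_fallback(page: Page, selectors: list[str]) -> Optional[LocatorResult]:
    """Try selectors in priority order and report whether a fallback was needed."""
    for index, selector in enumerate(selectors):
        element = page.get(selector)
        if element is not None:
            return LocatorResult(element, selector, healed=index > 0)
    return None


# Assumed scenario: the button's id changed in a redesign, but its test id and text did not.
page_after_redesign: Page = {
    "[data-testid=checkout]": "checkout button",
    "text=Checkout": "checkout button",
}

result = find_with_fallback(
    page_after_redesign,
    ["#checkout-btn", "[data-testid=checkout]", "text=Checkout"],
)
if result and result.healed:
    print(f"healed: matched via {result.selector_used}")
```

The `healed` flag is the important design choice here. A heal that is logged can be reviewed and turned into a permanent selector fix, while a silent heal can hide a real regression.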

Coverage and release confidence

Traditional stacks can absolutely scale. Many mature teams already cover UI, API, integration, and end-to-end workflows with strong reliability.

But that scale often comes from a combination of tools, internal libraries, reporting layers, and disciplined pipeline design.

AI platforms try to centralize more of that experience. Some are especially strong in areas like:

Visual regression

Cross-browser UI monitoring

Failure clustering

Change impact summaries

Faster expansion of regression suites

For organizations managing multiple frontends, branded interfaces, multilingual experiences, or frequent UI updates, that broader visibility can improve release confidence.
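Failure clustering, one of the capabilities listed above, can be approximated with very simple rules. The sketch below groups failures by a normalized error signature so that one root cause shows up as one cluster instead of many separate red tests. Commercial platforms may use far richer signals; the `normalize` rules, test names, and error messages here are assumptions made up for illustration.

```python
import re
from collections import defaultdict


def normalize(message: str) -> str:
    """Replace volatile details so similar failures share one signature."""
    message = re.sub(r"\d+", "<n>", message)            # numbers such as timeouts or counts
    message = re.sub(r"'[^']*'", "'<value>'", message)  # quoted selectors or values
    return message.strip().lower()


def cluster_failures(failures: list[tuple[str, str]]) -> dict[str, list[str]]:
    """Group test names by the normalized signature of their error message."""
    clusters: dict[str, list[str]] = defaultdict(list)
    for test_name, message in failures:
        clusters[normalize(message)].append(test_name)
    return dict(clusters)


# Hypothetical failures from a single regression run.
failures = [
    ("test_login", "Timeout 30000ms exceeded waiting for 'button#submit'"),
    ("test_signup", "Timeout 15000ms exceeded waiting for 'input#email'"),
    ("test_cart_total", "Expected 3 items but found 2"),
]

clusters = cluster_failures(failures)
for signature, tests in clusters.items():
    print(f"{len(tests)} test(s): {signature}")
```

In this sample, the two timeout failures collapse into one cluster, which is the kind of grouping that shortens failure review.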

Why traditional QA still matters

There is a tendency to talk about AI as if it makes traditional QA outdated. It does not.

Traditional QA is still the better fit in many real-world situations, especially when quality needs to be precise, reviewable, and tightly controlled.

Where Playwright works especially well

Playwright is a strong choice for modern web teams that want speed without giving up engineering discipline.

It is especially useful when teams need:

Cross-browser testing

Parallel execution

Reliable debugging

Strong isolation between tests

Clear code ownership in the repository

For product companies shipping modern web apps, Playwright remains one of the most practical foundations for test automation.

Where Selenium still earns its place

Selenium continues to matter in enterprise environments with broader browser support needs, legacy systems, or long-standing automation investment.

If an organization already has established WebDriver-based coverage, migration is not always the best use of time or budget. In those cases, maintaining and improving what already works can be smarter than chasing a trend.

Where Cypress remains useful

Cypress still fits teams that want a fast, developer-centered testing experience.

It is often a good match when frontend teams want approachable tooling, visible test feedback, and tight local development workflows. For the right team shape, that ease of use still matters.

When AI testing tools deliver better ROI

AI platforms are not automatically cheaper. Many come with licensing costs that traditional open-source frameworks do not.

But license cost is not the full picture.

The real ROI question is this: how much time, delay, and release risk is your current QA process creating?

If AI testing reduces maintenance hours, speeds up regression, and helps smaller teams cover more surface area, it can pay for itself quickly.
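A rough way to frame that trade-off is a break-even calculation. The sketch below compares the value of maintenance hours saved against a license fee. Every figure in it is a hypothetical placeholder, not a benchmark, and it deliberately ignores harder-to-price effects such as release delays and escaped defects.

```python
def monthly_net_benefit(hours_saved: float, hourly_cost: float, license_cost: float) -> float:
    """Value of maintenance time saved per month minus the monthly license fee."""
    return hours_saved * hourly_cost - license_cost


def break_even_hours(hourly_cost: float, license_cost: float) -> float:
    """Hours the tool must save each month before it covers its own license."""
    if hourly_cost <= 0:
        raise ValueError("hourly_cost must be positive")
    return license_cost / hourly_cost


# Hypothetical inputs: replace with your own measured numbers.
HOURS_SAVED_PER_MONTH = 60.0
LOADED_HOURLY_COST = 75.0
LICENSE_PER_MONTH = 3000.0

net = monthly_net_benefit(HOURS_SAVED_PER_MONTH, LOADED_HOURLY_COST, LICENSE_PER_MONTH)
threshold = break_even_hours(LOADED_HOURLY_COST, LICENSE_PER_MONTH)
print(f"net monthly benefit: {net:.2f}, break-even at {threshold:.1f} hours saved")
```

With these placeholder inputs the tool would need to save 40 hours a month to cover its license, so the estimate is only as good as the measured hours behind it.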

Best fit for fast-moving SaaS teams

A growth-stage SaaS company shipping UI changes every week often feels the pain of script maintenance more than the pain of license fees.

For those teams, AI testing can reduce friction by:

Shortening test creation time

Lowering upkeep on changing user flows

Expanding coverage without a large SDET team

Improving visual validation for frequent UI updates

That is often where AI tools create the clearest value.

Best fit for teams with limited QA engineering bandwidth

Not every company has a deep bench of automation engineers.

When QA headcount is lean, AI tools can help manual testers, product stakeholders, or analysts contribute more directly to the test process. That does not remove the need for strong QA leadership, but it can reduce dependency on a small number of specialists.

Best fit for fragmented enterprise operations

In larger enterprises, QA can become scattered across products, regions, devices, and teams.

AI platforms may help unify reporting, surface patterns faster, and reduce the overhead of maintaining automation at scale. That matters for organizations running web, mobile, and API releases across distributed teams in places like New York, London, Berlin, or Dublin.

Compliance, security, and data residency matter more in 2026

For US, UK, and EU teams, this is where the conversation gets more serious.

When evaluating AI testing tools vs traditional QA, features are only part of the decision. You also need to understand what the platform does with screenshots, logs, recordings, prompts, and test data.

That is especially important in regulated sectors such as:

Finance

Healthcare

Insurance

Public sector

Enterprise SaaS with sensitive customer data

US considerations

In the US, teams often need to review QA tools against internal security requirements and industry-specific obligations.

That may include:

Test data sanitization

Role-based access controls

Secure storage of screenshots and logs

Clear separation between test and production data

Support for enterprise review processes such as SOC 2 or sector-specific controls

For healthcare and payments, these checks are not optional.
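Test data sanitization is the easiest of these controls to prototype. The sketch below masks email addresses and long digit runs in a log line before it is stored or sent to a third-party platform. The two patterns are simplified assumptions and nowhere near a complete detector for personal data; for example, card numbers written with spaces or dashes would slip through.

```python
import re

# Simplified, assumed patterns for illustration only.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
LONG_DIGITS = re.compile(r"\b\d{9,19}\b")  # account-like or card-like numbers


def sanitize(text: str) -> str:
    """Mask emails and long digit runs before a log line leaves the test environment."""
    text = EMAIL.sub("[email]", text)
    return LONG_DIGITS.sub("[number]", text)


# Hypothetical log line using a well-known test card number.
line = "User jane.doe@example.com paid with card 4111111111111111"
print(sanitize(line))
```

Running the masking step inside your own pipeline, before any upload, keeps the decision about what leaves your environment under your control rather than the vendor's.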

UK considerations

UK teams need similar discipline, especially when testing touches personal data, financial workflows, or regulated digital services.

A vendor may look strong from a testing perspective but still fall short if governance, retention controls, or audit visibility are weak. For many UK buyers, those operational details are just as important as automation speed.

Germany and wider EU considerations

For Germany and the wider EU, privacy scrutiny is often even sharper.

Buyers may need to evaluate:

Data residency options

Cross-border data handling

Auditability

Access governance

Retention settings

Vendor subprocessors

For regulated teams, especially in finance or public-sector environments, these points can influence vendor selection as much as test performance does.

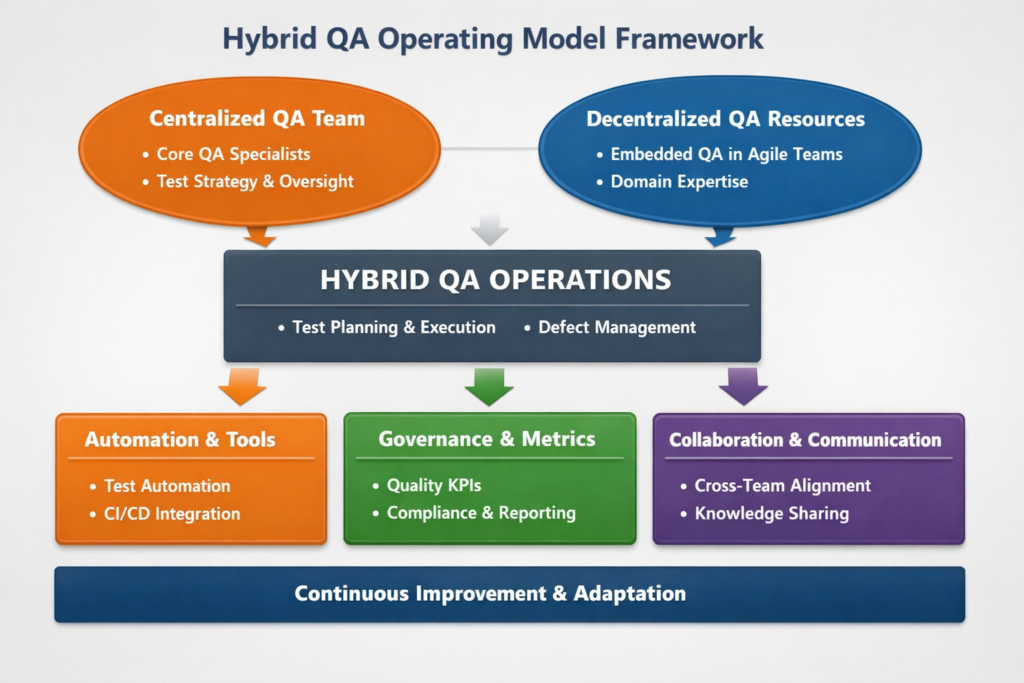

How to choose the right operating model

The smartest decision is rarely ideological. It is practical.

You want the testing model that matches your release speed, product complexity, team capability, and compliance burden.

Choose traditional QA when:

Your application has highly custom workflows

Engineering teams want complete control in code

Auditability and versioned review are essential

You already have strong automation capability

The business relies on predictable, deeply tailored test logic

Choose AI testing tools when:

UI changes are frequent

Test maintenance is slowing releases

QA engineering bandwidth is limited

Visual validation is important

You need broader collaboration across non-coders

Choose a hybrid model when:

Critical paths need coded precision

Broader regression needs faster scaling

The team wants to protect its existing investment

Maintenance costs are rising

You need both engineering control and operational speed

For many mid-market and enterprise teams, the hybrid path is the most realistic and most cost-effective.

A practical shortlist for evaluating QA stacks

When comparing tools, avoid buying into hype too early. Focus on how the stack will behave in your environment, with your people, and under your release pressure.

Use a shortlist that covers these areas.

Capability

Does it support web, API, mobile, or visual testing where needed?

Can it handle your app’s complexity?

How well does it manage dynamic elements and unstable selectors?

Maintenance

How much effort is needed after UI changes?

Does self-healing reduce real work or just mask issues?

How clearly does the tool explain failures?

Governance

Are audit logs available?

Can you enforce role-based access?

Is there enough transparency for engineering and compliance teams?

Integration

Does it fit your CI/CD pipeline?

Can it work with your issue tracking, reporting, and communication tools?

Will it coexist with your current framework if you choose a hybrid model?

Data handling

Where is test data stored?

How are screenshots, logs, and recordings managed?

Can you control retention and access by region?

These questions usually reveal more than a polished demo ever will.
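One way to keep the shortlist honest is to turn the five areas above into a weighted scorecard. The sketch below is a simple example of that approach. The weights, the 1 to 5 scores, and the two candidate names are hypothetical and should be replaced with values your own team agrees on.

```python
# Assumed weights in percent for the five shortlist areas; they must total 100.
WEIGHTS = {
    "capability": 25,
    "maintenance": 25,
    "governance": 20,
    "integration": 15,
    "data_handling": 15,
}


def weighted_score(scores: dict[str, int]) -> float:
    """Combine 1-5 scores per area into one weighted score on the same 1-5 scale."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(WEIGHTS[area] * scores[area] for area in WEIGHTS) / 100


# Hypothetical evaluation results for two candidate stacks.
candidates = {
    "coded_stack": {"capability": 5, "maintenance": 2, "governance": 5,
                    "integration": 5, "data_handling": 5},
    "ai_platform": {"capability": 4, "maintenance": 5, "governance": 3,
                    "integration": 4, "data_handling": 3},
}

ranking = sorted(candidates, key=lambda name: weighted_score(candidates[name]), reverse=True)
for name in ranking:
    print(f"{name}: {weighted_score(candidates[name]):.2f}")
```

The ranking changes as soon as the weights change, which is the point: agreeing on weights before the demos forces the team to state what it actually values.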

So, which is better in 2026?

The honest answer is that AI testing tools vs traditional QA is the wrong fight if you frame it as a winner-takes-all decision.

Traditional QA still wins where control, transparency, and customization matter most.

AI testing wins where speed, maintenance reduction, and broader regression efficiency matter most.

In 2026, the strongest teams are not choosing sides blindly. They are building a QA operating model that uses each approach where it performs best.

From a practical business point of view, that usually means keeping your coded foundation for critical workflows and adding AI where it removes repetitive drag from the release process.

Final thought

If you are evaluating AI testing tools vs traditional QA, do not start with vendor promises. Start with your release bottlenecks.

Look at where your team is losing time, where maintenance is creating drag, and where compliance needs tighter control. Once that is clear, the right mix of traditional QA and AI-assisted testing usually becomes obvious.

For teams planning a long-term quality strategy, it also helps to connect this decision with broader engineering priorities such as Web Development Services, React Native Development Services, Flutter Development Services, and FinOps Cloud Cost Optimization for US, UK & EU Teams.

Key takeaways

AI testing tools usually help most with speed, maintenance reduction, and visual coverage.

Traditional QA frameworks remain stronger for deep customization, precision, and code-level control.

Compliance, security, and data residency can be just as important as features for US, UK, and EU teams.

Playwright, Selenium, and Cypress still have clear value in 2026.

A hybrid QA model is often the most effective long-term choice.

FAQs

Q: Are AI testing tools replacing traditional QA?

A: No. In most organizations, they are extending QA rather than replacing it. Traditional automation still matters for critical paths, custom logic, and governance-heavy workflows.

Q: Is Playwright still worth using in 2026?

A: Yes. Playwright remains a strong option for modern web testing, especially for teams that want cross-browser coverage, parallel execution, and a code-first approach.

Q: Do AI testing tools reduce flaky tests?

A: They can help, especially when flakiness is caused by brittle selectors or rapidly changing UI elements. But they do not eliminate the need for good test design, stable environments, and human review.

Q: What is the best model for regulated teams?

A: Regulated teams often do best with a hybrid model. Keep script-based automation for sensitive or business-critical journeys, then add AI selectively in lower-risk or high-maintenance areas.

Q: How should teams measure ROI before switching?

A: Start with your baseline: maintenance hours, regression duration, flaky failures, escaped defects, and QA-related release delays. Then compare those metrics after a focused pilot instead of attempting a full migration too early.
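A baseline-versus-pilot comparison like this can be as small as the sketch below, which reports the percentage change for each metric named in the answer. The metric keys and all numbers are hypothetical sample data, not results from any real pilot.

```python
def percent_change(baseline: float, pilot: float) -> float:
    """Percentage change from baseline to pilot; negative means the metric went down."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero to compute a percentage change")
    return round((pilot - baseline) / baseline * 100, 1)


# Hypothetical monthly figures measured before and during a pilot.
baseline = {
    "maintenance_hours": 80,
    "regression_minutes": 240,
    "flaky_failures": 30,
    "escaped_defects": 6,
    "release_delays": 4,
}
pilot = {
    "maintenance_hours": 50,
    "regression_minutes": 180,
    "flaky_failures": 18,
    "escaped_defects": 6,
    "release_delays": 3,
}

report = {metric: percent_change(baseline[metric], pilot[metric]) for metric in baseline}
for metric, change in report.items():
    print(f"{metric}: {change:+.1f}%")
```

Keeping escaped defects in the report matters: a pilot that cuts maintenance hours while escaped defects rise is not a win.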
